Frameworks for the Structural Integration of Artificial Intelligence

Comparing organizational approaches

Journal: Industry 4.0 Science

Issue: Volume 41, 2025, Edition 5, Pages 144-151

Open Access: https://doi.org/10.30844/I4SE.25.5.138



Artificial intelligence is now applied across nearly all areas of business: from production and maintenance to logistics, human resources management, marketing, and customer service (Fig. 1). Companies employ artificial intelligence to automate processes, support data-driven decision-making and unlock new value-creation potential. The diversity of application areas signals a transformation that extends to technology, organization [1], and leadership [2].

![Figure 1: Possible applications of artificial intelligence [3].](https://industry-science.com/wp-content/uploads/2025/09/Stowasser_Figure1-1024x724.jpg)

When companies implement AI, questions of organizational responsibility arise. As part of the Trend Barometer “Working World”, published by the ifaa – Institut für angewandte Arbeitswissenschaft e. V., a study was conducted to examine how responsibility for artificial intelligence implementation is regulated within companies [4]. The results of a survey of 531 employees across various hierarchical levels indicate a significant correlation between company size and organizational clarity (Fig. 2).

In larger companies (500+ employees), responsibilities surrounding artificial intelligence implementation are generally well defined: 30% name a responsible individual, 47% have a dedicated organizational unit, and only 11% have no fixed responsibility. In medium-sized companies (100-499 employees), 50% rely on organizational units and 24% on individuals, while 14% lack clear regulations. In small companies (up to 99 employees), 40% have neither designated roles nor organizational units, and an additional 17% are unsure or did not provide information.
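The shares quoted above do not add up to 100% in any size class, so it can help to tabulate them and see how much the text leaves unitemized. The following is a minimal sketch using only the percentages stated in this paragraph; the dictionary keys and the helper name `unitemized_share` are illustrative assumptions, and the remainder is simply whatever the text does not break down, not a figure reported by the study.

```python
# Shares in percent, exactly as quoted in the text; nothing else is assumed.
reported = {
    "500+ employees": {
        "responsible individual": 30,
        "organizational unit": 47,
        "no fixed responsibility": 11,
    },
    "100-499 employees": {
        "responsible individual": 24,
        "organizational unit": 50,
        "no clear regulation": 14,
    },
    "up to 99 employees": {
        "neither role nor unit": 40,
        "unsure / no information": 17,
    },
}


def unitemized_share(shares):
    """Percentage points of a size class that the text does not itemize."""
    return 100 - sum(shares.values())


for size_class, shares in reported.items():
    print(f"{size_class}: {unitemized_share(shares)}% not itemized in the text")
```

For small companies the unitemized remainder is by far the largest, because the text quotes only the two categories that indicate missing or unknown responsibility.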

These figures indicate that smaller companies often lack clarity regarding responsibilities for artificial intelligence. As a result, artificial intelligence implementation is frequently unsystematic and lacks structural safeguards, such as governance rules or strategic integration. The following sections address this gap by examining how companies can embed artificial intelligence within their organizations, and which models, roles, and management mechanisms can be used to achieve effective integration.

![Figure 2: Responsibility for the implementation of artificial intelligence [4].](https://industry-science.com/wp-content/uploads/2025/09/Stowasser_Figure23_page-0001-e1758486066197-1024x584.jpg)

Approaches to the organizational positioning of artificial intelligence

When it comes to integrating artificial intelligence into work processes, companies must establish new responsibilities and control mechanisms. Artificial intelligence affects existing structures and requires clear responsibilities, effective governance, and conscious organizational integration.

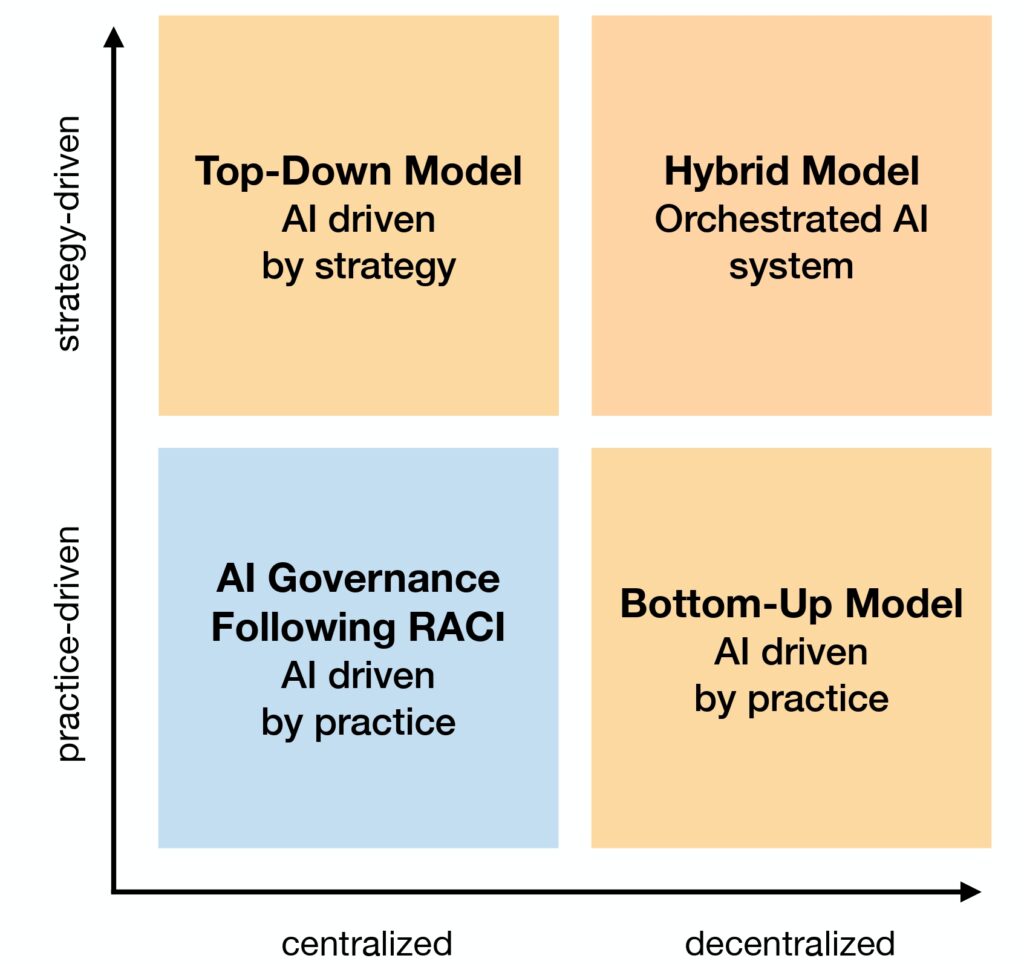

Companies should therefore define clear responsibilities, as these are crucial for the sustainable use of artificial intelligence. Figure 3 illustrates four distinct approaches to integrating artificial intelligence into corporate organizations. These frameworks exhibit tensions between scalability and efficiency on the one hand and flexibility and practical relevance on the other.

The following sections present these models and assess their implications for management, scalability, and the sustainable implementation of artificial intelligence.

Top-down model: Strategy-driven responsibility for artificial intelligence

The top-down model follows a clear hierarchical control principle, in which strategic decision-making authority and responsibility are concentrated at the top management level. At the center of this model is the role of a Chief AI Officer (CAIO), Chief Artificial Intelligence and Data Officer (CAIDO), or Chief AI Transformation Officer (CATO), a position created specifically to reflect the strategic importance of artificial intelligence in the company.

The CAIO typically reports to an interdisciplinary steering committee (for example, an AI Strategy Board), which represents key areas such as IT, legal, HR, data protection (data governance), and the works council. The committee’s purpose is to define a strategic framework for artificial intelligence use, establish ethical and legal guidelines, and ensure coordinated implementation. Operational responsibility for executing the use cases defined in line with the strategy lies with the respective specialist departments, which implement the systems according to centralized guidelines.

This model offers several advantages: it creates clear responsibilities, enables strategic control across divisional boundaries, and optimizes resource utilization. Centralized oversight helps prevent redundant development and leverage synergies, particularly in comprehensive artificial intelligence transformation programs.

However, this model also presents challenges. Employee involvement is often limited, which can hinder acceptance, especially when operational realities diverge from strategic guidelines. In addition, innovative ideas from specialist departments may be overlooked, as decisions are usually made from the top down. Managers must therefore not only implement centralized guidelines but also serve as feedback channels, conveying operational insights to the strategic level.

The top-down model is particularly suitable for organizations with extensive regulatory requirements, a clear hierarchical structure, or a strong need for coordination. Successful artificial intelligence use under this model requires strategic oversight to be complemented by effective communication and participatory formats.

Bottom-up model: Designing artificial intelligence based on practical experience

The bottom-up model draws on the innovative strength and practical experience of operational teams. At its core is the assumption that employees who are directly engaged in processes, systems, and everyday challenges are best placed to identify meaningful and effective artificial intelligence use cases. In this model, the impetus for artificial intelligence adoption does not stem from centralized strategic guidelines but emerges from specific problems and optimization ideas ‘from below’.

Operational teams (or, in some cases, individual employees) serve as the starting point for development. They formulate application requirements, test initial prototypes, and drive practical implementation. These teams are supported by communities of practice: self-organized networks from different areas of the company that share knowledge, experience, and tools related to artificial intelligence.

Such communities contribute not only to the further development of methods but also to cross-functional coordination and quality assurance. At a higher level, an artificial intelligence competence center bundles technical, methodological, and legal support. It ensures that fundamental requirements such as data protection, IT governance, and ethical standards are met, while also offering training and qualification programs.

A key advantage of the bottom-up model is its strong practical relevance. Artificial intelligence solutions are developed directly where they are needed, which increases employee acceptance and utilization. This often results in creative and resource-efficient solutions that enhance the organization’s innovative capacity.

The main drawback of this model is the risk of fragmentation if strategic alignment is lacking: isolated solutions may emerge that are difficult to scale. In addition, operational teams often lack the resources to advance artificial intelligence projects beyond the prototype stage. Managers must therefore provide support, ensuring that projects remain connected to overarching goals and that governance requirements are met.

The bottom-up model is particularly suitable for innovation-driven companies with flat hierarchies, a strong culture of participation, and a high degree of self-organization. To remain viable in the long term, however, it requires complementary structures for strategic coordination, resource management, and quality assurance.

Hybrid model: Integration of strategy and implementation

The hybrid model aims to combine the advantages of centralized control with the capacity for innovation of decentralized implementation. It is based on a division of labor in which a strategically anchored control body, such as an artificial intelligence committee, defines the organizational framework, while operational responsibility remains with the specialist departments. An artificial intelligence team acts as a mediator between these two levels: it translates strategic requirements into operational action, coordinates communication between line management and strategy, and provides support for practical implementation.

Within the specialist departments, artificial intelligence representatives, such as data stewards, artificial intelligence multipliers, or “AI ambassadors”, assume a dual role. On the one hand, they promote the identification, specification, and implementation of local use cases; on the other hand, they channel requirements, feedback, and insights back into strategic management. This creates a learning organization that continuously integrates technological development, leadership practices, and competence building.

The combination of top-down framing and bottom-up participation enables the enforcement of company-wide standards while simultaneously fostering context-specific solutions. This makes the model particularly suitable for larger, heterogeneous organizations with diverse needs.

At the same time, the hybrid model requires strong coordination capabilities. The involvement of numerous roles, levels, and interfaces requires clearly structured interaction. Without suitable communication and control mechanisms, there is a risk that coordination processes become inefficient or that responsibilities remain ambiguous.

The hybrid model should not be understood as a mere “compromise” but rather as an organizational form that promotes participation, strategic consistency, and learning ability. It is particularly well suited for cooperation- and knowledge-oriented organizations that view artificial intelligence as part of a cultural transformation.

Project-oriented governance based on the RACI model

The RACI model is a well-established tool for structuring responsibilities in projects [5, 6] and can also be applied to the introduction and management of artificial intelligence applications. In the context of artificial intelligence projects, transparency and traceability are particularly important: Who decides, who executes, who is involved, and who is informed? The RACI model addresses these questions by clearly assigning roles for each task or use case: R for “Responsible”, A for “Accountable”, C for “Consulted”, and I for “Informed”.

In artificial intelligence projects, such as the introduction of generative artificial intelligence in recruiting or sales, the RACI model enables a differentiated and transparent allocation of tasks. Actual implementation by a data scientist or operational team is assigned to the “Responsible” role. The “Accountable” role, i.e., overall responsibility, remains with top management or specific roles such as a CAIDO (Chief Artificial Intelligence and Data Officer).

At the same time, key stakeholders such as IT security, the legal department, or the works council are involved as “Consulted” parties to ensure that compliance, data protection, and internal guidelines are adhered to. Finally, the “Informed” role ensures that affected teams, managers, and external partners are kept up to date.
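The role allocation described above can be written down as a small data structure. The following is a minimal sketch, not a prescribed implementation: the class name `RaciAssignment`, the function `validate`, and the task label are hypothetical, and the check for exactly one Accountable party reflects a common RACI convention rather than a rule stated in this article. The parties are those named in the recruiting example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class RaciAssignment:
    """One row of a RACI matrix: who holds which role for a single task."""
    task: str
    responsible: list[str]                               # R: carries out the work
    accountable: list[str]                               # A: bears overall responsibility
    consulted: list[str] = field(default_factory=list)   # C: involved before decisions
    informed: list[str] = field(default_factory=list)    # I: kept up to date


def validate(assignment: RaciAssignment) -> list[str]:
    """Return a list of problems; an empty list means the row passes both checks."""
    problems = []
    if not assignment.responsible:
        problems.append("no Responsible party")
    # Assumption: exactly one Accountable party per task (common RACI convention).
    if len(assignment.accountable) != 1:
        problems.append("expected exactly one Accountable party")
    return problems


# Hypothetical task label; the parties follow the example in the text.
recruiting = RaciAssignment(
    task="Introduce generative AI in recruiting",
    responsible=["Data scientist"],
    accountable=["CAIDO"],
    consulted=["IT security", "Legal department", "Works council"],
    informed=["Affected teams", "Managers", "External partners"],
)

print(validate(recruiting))
```

Keeping one such record per task or use case makes the documented responsibilities easy to review and to check for gaps before a project starts.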

The RACI model provides clarity: everyone knows both their responsibilities and their sphere of influence. This is particularly useful in sensitive or interdisciplinary artificial intelligence projects, where it can foster acceptance and efficiency. It also offers a high degree of legal certainty through documented responsibilities.

However, the model is primarily suitable for specific projects or sub-processes rather than for governing the overall use of artificial intelligence. It structures roles at the operational level and can serve as a valuable starting point for pilot projects in organizations without established artificial intelligence governance.

The future of organizational anchoring of artificial intelligence

The preceding considerations demonstrate that the organizational anchoring of artificial intelligence is a decisive success factor for its sustainable use. Figure 4 systematically compares the four models (centralized top-down, decentralized bottom-up, hybrid, and project-oriented) to provide companies with guidance on choosing a suitable organizational approach.

It is evident that the discussion about organizational models is still at an early stage. To date, only a limited number of empirical studies have examined how these models are implemented in practice, which challenges arise, and which factors influence their success. There is a lack of reliable data on the operational implementation of these models, on interactions with management and co-determination, and on the integration of governance mechanisms.

The specific experiences of companies with different anchoring models should be systematically documented. This includes both qualitative case studies and quantitative analyses of role profiles, control mechanisms, participation processes, and their effects on acceptance and implementation success. Several open research questions remain, including:

- How are organizational models for artificial intelligence implemented across different industries, company sizes, and organizational cultures?

- Which governance formats promote reliability, participation, and innovation?

- In what ways are traditional role profiles and decision-making logic evolving because of artificial intelligence projects?

- What factors contribute to the long-term sustainability of an organizational model?

The original German version of this article can be accessed via DOI: 10.30844/I4SD.25.5.144

Bibliography

[1] ifaa – Institut für angewandte Arbeitswissenschaft e. V.: Arbeitsorganisation neu gedacht – Erfolgsfaktoren für die KI-Einführung. URL: https://www.arbeitswissenschaft.net/fileadmin/user_upload/Broschuere_KI_Arbeitsorganisation_7_final.pdf, accessed 20.03.2025.

[2] Plattform Lernende Systeme: Führung im Wandel: Herausforderungen und Chancen durch KI. URL: https://www.plattform-lernende-systeme.de/files/Downloads/Publikationen/AG2_WP_Fuehrung_im_Wandel.pdf, accessed 14.12.2024.

[3] ifaa – Institut für angewandte Arbeitswissenschaft e. V.: ifaa erklärt KI. URL: https://www.arbeitswissenschaft.net/fileadmin/_processed_/c/c/csm_ifaa_erklaert_KI_final_59d9ca43f2.jpg, accessed 12.01.2025.

[4] ifaa – Institut für angewandte Arbeitswissenschaft e. V.: ifaa Trendbarometer Arbeitswelt. URL: https://www.arbeitswissenschaft.net/fileadmin/user_upload/KI-Trendbarometer-2023.pdf, accessed 02.06.2025.

[5] Blokdyk, G.: RACI Matrix – A Complete Guide – 2020 Edition. 5STARCooks.

[6] Brown, J.: The handbook of program management (2nd ed.). McGraw-Hill, 2010.