XAI for Predicting and Nudging Worker Decision-Making

Feasibility and perceived ethical issues

Journal: Industry 4.0 Science

Issue: Volume 42, 2026, Edition 1, Pages 70-78


Human factors and ergonomics research aims at jointly optimizing worker well-being and overall system performance [1]. One example is balancing workers’ scheduling decisions with overall scheduling performance by integrating their preferences into an algorithmic management system [2]. To do this, workers’ scheduling decisions can be learned using artificial intelligence (AI) methods. The resulting models can be viewed as clones of workers’ decision-making behavior and provide an automatic means of eliciting worker preferences from scheduling data. The predictions of these models can be incorporated into an optimization algorithm to account for workers’ preferences.

However, AI models are typically black-box models, that is, worker preferences are encoded in distributed model parameters that are hard for humans to understand. Explainable AI (XAI) methods make the internal reasoning of these models more transparent, helping humans interpret and understand the causes behind workers’ decisions. This is achieved, for example, by systematically varying a black-box model’s input parameters, analyzing the sensitivity of its output, and visualizing the response graphically [3]. This, in turn, allows work designers to derive sociotechnical system design and decision-support measures that positively influence workers’ scheduling decisions and overall scheduling performance, measured, for example, by makespan, tardiness, or total lead time.

One approach to supporting people’s decision-making is nudging. A nudge is defined as “any aspect of the choice architecture that alters people’s behavior in a predictable way without forbidding any options or significantly changing their economic incentives” ([4], p. 7). In other words, nudging means supporting decision-makers indirectly by altering how decision alternatives are presented to them without actually influencing the decision alternatives or the decision-makers themselves [5].

The approach described, namely XAI-based nudging, seems to be a promising tool for companies. However, it also raises ethical concerns, such as affecting workers’ autonomy and dignity, manipulating people and reducing them to numbers, and failing to ensure transparency and fairness in algorithms, among others [6-9]. For example, Gal et al. [10] analyze algorithmic management, people analytics, and nudging from a virtue ethics perspective, which emphasizes people’s capacity to flourish and develop their character. They argue that the combination can prevent workers from acting as virtuous agents and negatively affect virtue ethics’ three main components—pursuing internal goods, acquiring practical wisdom, and acting voluntarily—because algorithms are treated as having superior knowledge or understanding compared to people.

This paper presents an empirical laboratory study with a student sample that tests the feasibility of nudging people to adhere to a predefined target task sequence in a job shop using XAI. After demonstrating the successful nudging of students’ decisions and the feasibility of the XAI-based nudging approach, recommendations are provided for companies and production managers to consider when implementing this approach in practice.

A laboratory study on the effect of the XAI-based nudging approach

The laboratory study aims to demonstrate the feasibility of nudging people to adhere to a target task sequence in a job shop by altering task characteristics. The study addresses the following research question: To what extent can the XAI-based nudging approach incentivize people to adhere more closely to a predefined target task sequence when making task sequencing decisions in job shops?

Answering this research question in a real-world setting involves many ethical challenges and confounding variables. Therefore, this paper addresses the research question in a controlled laboratory environment using a job shop simulation. Furthermore, access to a sufficiently large sample of real-world workers who can participate in the laboratory study is limited. Therefore, industrial engineering students are selected as a sample due to their accessibility and familiarity with scheduling in job shops. A within-subjects design is implemented, investigating students’ adherence to a predefined target task sequence before and after nudging.

The concordance index [11] is used to measure students’ adherence to the predefined target task sequence and serves as the dependent variable. The concordance index essentially measures the similarity between the task sequence students select and the predefined target task sequence. Its formula outputs a value between 0 and 1, where 1 means the two sequences are ordered identically, 0 means the selected sequence has the opposite order to the target sequence, and 0.5 means the selected sequence’s order is random relative to the target sequence.
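The behavior described above can be illustrated with a minimal sketch. It assumes a simple pairwise definition without ties (the share of task pairs that appear in the same relative order in both sequences); the exact formula in [11] may differ in detail. The function name and the example sequence are illustrative and not taken from the study.

```python
from itertools import combinations


def concordance_index(selected, target):
    """Share of task pairs that are ordered the same way in both sequences."""
    # Position of each task in the target sequence.
    target_pos = {task: i for i, task in enumerate(target)}
    # Only tasks that occur in both sequences can be compared.
    common = [task for task in selected if task in target_pos]
    pairs = list(combinations(common, 2))
    if not pairs:
        raise ValueError("need at least two tasks common to both sequences")
    # combinations() keeps the order of `selected`, so `a` always precedes `b`
    # in the selected sequence; the pair is concordant if the target agrees.
    concordant = sum(1 for a, b in pairs if target_pos[a] < target_pos[b])
    return concordant / len(pairs)


# Illustrative target sequence (task IDs only; the order is an assumption).
target = ["A1", "A2", "A4", "A5", "H7", "H8", "H9"]
print(concordance_index(target, target))        # 1.0 (identical order)
print(concordance_index(target[::-1], target))  # 0.0 (reversed order)
```

For a randomly shuffled selection, about half of all pairs are expected to be concordant, which corresponds to the value of 0.5 mentioned above.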

The independent variable is categorical and has two levels: before nudging (students make decisions in the job shop simulation) and after nudging (students make decisions in the job shop simulation with nudges incorporated). The research question can be operationalized as the following one-sided null hypothesis: The median concordance index of students with the predefined target task sequence after altering task deadlines and priorities in the job shop simulation using the XAI-based nudging approach is not higher (equal to or lower) than before altering these attributes.

To estimate the sample size required to detect a significant increase in the concordance index, a numerical power analysis based on the Wilcoxon signed-rank test is performed. The Wilcoxon signed-rank test is chosen because previous data collections using the job shop simulation and experiments with students have shown that students’ concordance index distributions are symmetric but not normally distributed. The power is estimated numerically, by simulation, because no closed-form solution exists for the statistical power of the Wilcoxon signed-rank test.

The numerical power analysis assumes that students’ concordance with the predefined target task sequence is random (ranging from 0 to 1) before nudging and right-skewed (ranging from 0.5 to 1) after nudging. Under these assumptions, the power analysis yields a required sample size of 15 students to reject the null hypothesis when the alternative hypothesis is true (50,000 iterations, α=0.05, and β=0.2, that is, a target power of 0.8).
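A simulation-based power analysis of this kind could look roughly like the following sketch. Several details are assumptions made only for illustration: the "before" values are drawn from a uniform distribution on [0, 1], the right-skewed "after" values from a scaled Beta(2, 5) distribution on [0.5, 1], and the p-value uses the normal approximation of the Wilcoxon signed-rank statistic without continuity or tie correction. Because the paper does not specify its distributions, this sketch is not expected to reproduce the reported sample size of 15.

```python
import math
import random


def wilcoxon_one_sided_p(before, after):
    """Approximate one-sided p-value for H1: after > before (normal approximation)."""
    diffs = [a - b for a, b in zip(after, before) if a != b]
    n = len(diffs)
    if n == 0:
        return 1.0
    # Rank the absolute differences (continuous draws, so ties are not handled).
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    w_plus = sum(rank for rank, i in enumerate(order, start=1) if diffs[i] > 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean) / sd
    return 0.5 * math.erfc(z / math.sqrt(2))  # P(Z >= z)


def simulated_power(n, iterations=2000, alpha=0.05, seed=0):
    """Share of simulated studies with n participants that reject H0."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(iterations):
        # Assumed distributions (hypothetical, see lead-in).
        before = [rng.uniform(0.0, 1.0) for _ in range(n)]
        after = [0.5 + 0.5 * rng.betavariate(2, 5) for _ in range(n)]
        if wilcoxon_one_sided_p(before, after) < alpha:
            rejections += 1
    return rejections / iterations


# Scan candidate sample sizes and inspect where the power crosses 0.8.
for n in (5, 10, 15, 20, 30):
    print(n, simulated_power(n))
```

The required sample size is then the smallest n whose estimated power reaches 1 − β. For small n, an exact null distribution would be preferable to the normal approximation used here.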

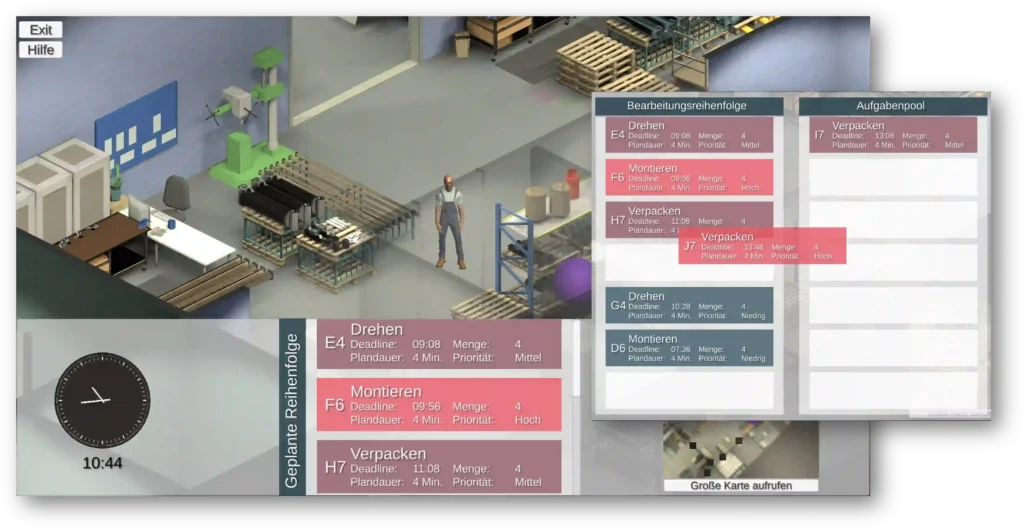

The job shop simulation was developed as a serious game in which players assume the role of a manufacturing worker. It closely resembles the manufacturing environment of a medium-sized hydraulic cylinder manufacturer in Germany. In the game, players can move around the factory during an eight-hour shift (in accelerated time) and process manufacturing tasks.

Before task processing, players must plan the sequence in which to process their tasks in a planning window by dragging tasks from an unordered task pool into a planned sequence. The tasks are simultaneously available in the task pool, characterized by several attributes, such as the deadline (e.g., “11:35 am”), priority (e.g., “high”), quantity (e.g., four pieces), and duration (e.g., four minutes), and new tasks arrive throughout the game. Furthermore, each task is identified by a letter and a number corresponding to its order ID and the production stage (e.g., “B2”). Figure 1 shows the serious game’s main view and planning window.

A process running in the background collects players’ task sequencing decisions. This data is used to train a machine learning algorithm for each player that can predict the player’s individual task sequencing decisions and preferences. New instances of a player’s training data are obtained by extracting the newly planned task sequence each time the planning window is closed.

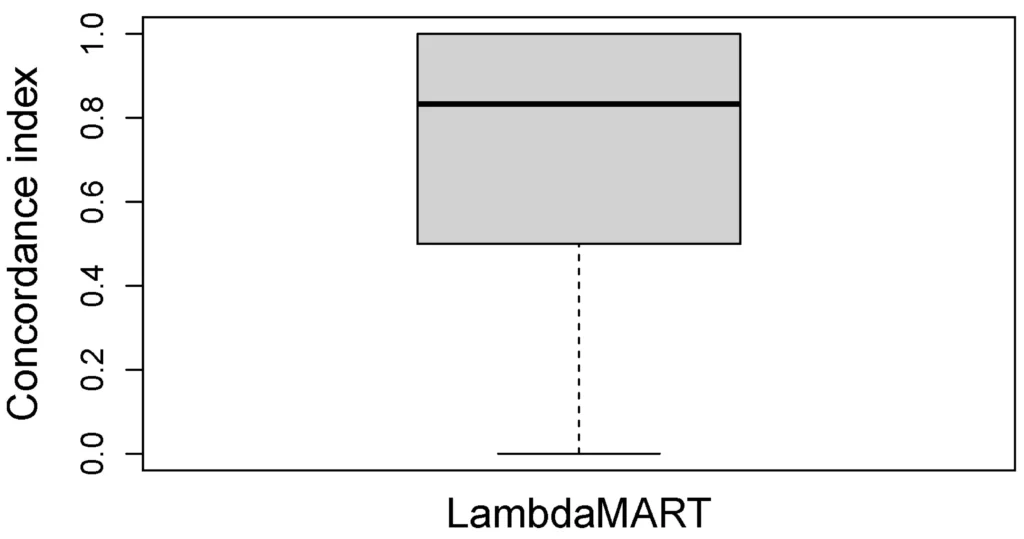

These sequences were used to train the Learning-To-Rank (LTR) algorithm LambdaMART [12], a gradient-boosted decision tree ensemble. The LTR algorithm was fitted and tested with 5×5 nested cross-validation [13]. The algorithm’s hyperparameters were tuned by performing 100 iterations of randomized search. To compare the algorithm’s performance in predicting players’ task sequencing decisions, the concordance index was used again (note that in this case, it is used to compare a predicted ranking with a player’s ground-truth ranking, and not with a predefined target task sequence).
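The structure of a 5×5 nested cross-validation with randomized search can be sketched as follows. This is only a skeleton of the control flow, not the authors’ pipeline: the `fit`, `score`, and `sample_params` callables are hypothetical placeholders, and the toy model at the end (a shrunken mean scored by negative squared error) merely stands in for LambdaMART scored by the concordance index. In the study, the units being split would be planned task sequences rather than single numbers.

```python
import random


def k_folds(items, k, rng):
    """Shuffle the items and split them into k roughly equal folds."""
    items = list(items)
    rng.shuffle(items)
    return [items[i::k] for i in range(k)]


def nested_cv(data, fit, score, sample_params,
              k_outer=5, k_inner=5, n_iter=100, seed=0):
    """Return one held-out score per outer fold."""
    rng = random.Random(seed)
    outer = k_folds(data, k_outer, rng)
    outer_scores = []
    for i, test in enumerate(outer):
        train = [x for j, fold in enumerate(outer) if j != i for x in fold]
        inner = k_folds(train, k_inner, rng)
        best_params, best_score = None, float("-inf")
        # Randomized search: each candidate is scored by inner cross-validation.
        for _ in range(n_iter):
            params = sample_params(rng)
            fold_scores = []
            for m, val in enumerate(inner):
                part = [x for j, fold in enumerate(inner) if j != m for x in fold]
                fold_scores.append(score(fit(part, params), val))
            mean_score = sum(fold_scores) / len(fold_scores)
            if mean_score > best_score:
                best_params, best_score = params, mean_score
        # Refit on the whole outer training set, evaluate on the untouched fold.
        outer_scores.append(score(fit(train, best_params), test))
    return outer_scores


# Toy stand-ins (hypothetical): the "model" is a shrunken mean.
def fit(train, shrink):
    return shrink * sum(train) / len(train)


def score(model, held_out):
    return -sum((y - model) ** 2 for y in held_out) / len(held_out)


data_rng = random.Random(1)
data = [data_rng.gauss(5.0, 1.0) for _ in range(50)]
scores = nested_cv(data, fit, score, lambda rng: rng.uniform(0.0, 1.0), n_iter=20)
print(scores)
```

The point of the nesting is that hyperparameters are selected using inner folds only, so each outer test fold gives a performance estimate that was not used for tuning.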

Twenty-eight industrial engineering students played the serious game at a higher education facility in Germany in April 2025. Figure 2 shows the concordance index distribution between the predicted and ground-truth task sequences for students in the serious game using the LTR algorithm. The distribution’s median is 0.82, indicating a high similarity between the task sequences predicted by the algorithm and the ground-truth task sequences selected by students. Hence, it can be concluded that the LTR algorithm has learned students’ sequencing decisions and preferences reasonably well.

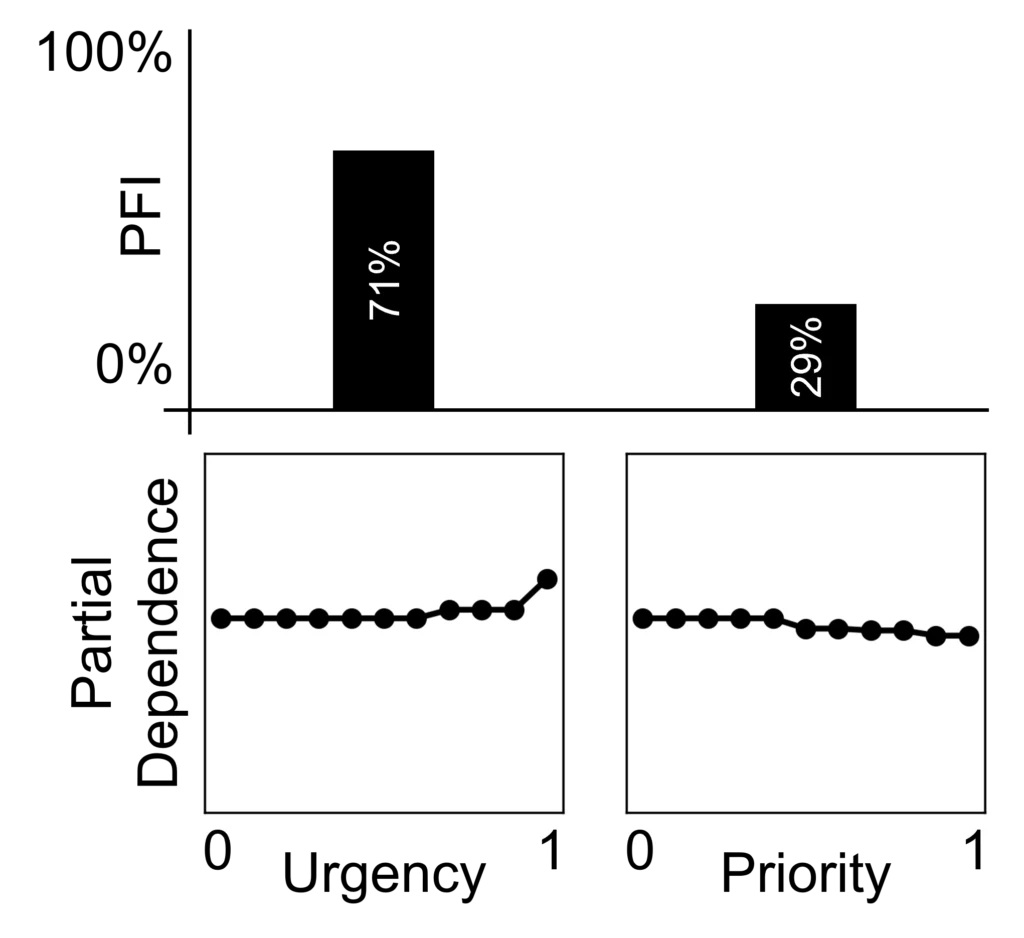

Now, the trained LTR models can be used to analyze students’ sequencing preferences. The preference analysis uses the following XAI methods: Permutation Feature Importance (PFI) and Partial Dependence Plot (PDP). The PFI is calculated individually for each decision-relevant task attribute. The attribute values are repeatedly shuffled across all tasks in the test set, and the average absolute deviation in the LTR model’s prediction performance is determined.

This average absolute deviation quantifies an attribute’s PFI. The PDP is calculated by modifying subsets of task attributes and observing the LTR model’s output. All other attributes remain unchanged [3].
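Both methods can be sketched in a few lines. The example below uses a hypothetical linear scoring function as a stand-in for a trained LTR model, synthetic tasks with only the attributes “urgency” and “priority”, and a pairwise concordance measure as the performance metric. All names, weights, and data are assumptions for illustration and not the study’s implementation.

```python
import random
from itertools import combinations


def toy_model(task):
    """Hypothetical stand-in for a trained LTR model (higher = process earlier)."""
    return 0.7 * task["urgency"] - 0.3 * task["priority"]


def concordance(scores, truth_rank):
    """Share of task pairs that the scores order like the ground-truth ranks."""
    pairs = list(combinations(range(len(scores)), 2))
    agree = sum(1 for i, j in pairs
                if (scores[i] > scores[j]) == (truth_rank[i] < truth_rank[j]))
    return agree / len(pairs)


def permutation_importance(model, tasks, truth_rank, attribute, repeats=50, seed=0):
    """Average absolute change in concordance after shuffling one attribute."""
    rng = random.Random(seed)
    baseline = concordance([model(t) for t in tasks], truth_rank)
    deviations = []
    for _ in range(repeats):
        values = [t[attribute] for t in tasks]
        rng.shuffle(values)  # break the link between attribute and ranking
        shuffled = [{**t, attribute: v} for t, v in zip(tasks, values)]
        permuted = concordance([model(t) for t in shuffled], truth_rank)
        deviations.append(abs(baseline - permuted))
    return sum(deviations) / repeats


def partial_dependence(model, tasks, attribute, grid):
    """Average model output when one attribute is forced to each grid value."""
    return [sum(model({**t, attribute: v}) for t in tasks) / len(tasks)
            for v in grid]


# Synthetic test set; the ground truth is the toy model's own ranking.
rng = random.Random(42)
tasks = [{"urgency": rng.random(), "priority": rng.choice([0.0, 0.5, 1.0])}
         for _ in range(40)]
scores = [toy_model(t) for t in tasks]
order = sorted(range(len(tasks)), key=lambda i: -scores[i])
truth_rank = [0] * len(tasks)
for rank, i in enumerate(order):
    truth_rank[i] = rank

pfi_urgency = permutation_importance(toy_model, tasks, truth_rank, "urgency")
pfi_priority = permutation_importance(toy_model, tasks, truth_rank, "priority")
pd_urgency = partial_dependence(toy_model, tasks, "urgency", [0.0, 0.5, 1.0])
pd_priority = partial_dependence(toy_model, tasks, "priority", [0.0, 0.5, 1.0])
print(pfi_urgency, pfi_priority)
print(pd_urgency, pd_priority)
```

Dividing each PFI by the sum of all PFIs would give relative importances in percent, and the sign of the slope of each partial dependence curve indicates whether a higher attribute value raises or lowers the model’s output.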

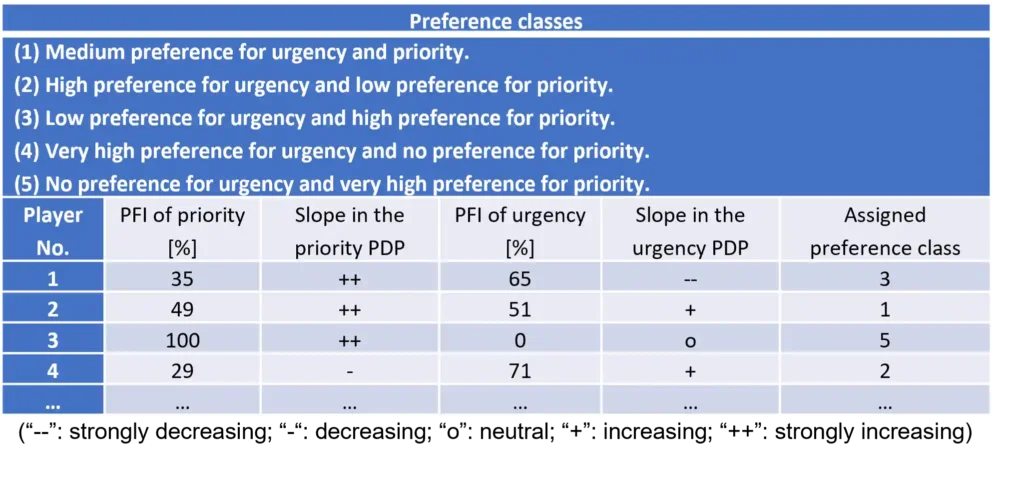

Whereas the PFI indicates an attribute’s overall importance, the PDP shows the functional relationship between attributes and the LTR model’s output. Figure 3 shows an example of the PFIs and PDPs of one student (referred to as “Student 4” for the remainder of this paper) for the task attributes “urgency” and “priority”. The PFIs and PDPs enable the classification of students into five preference classes. Based on these classes, task attribute values are altered to nudge students to make task sequencing decisions that adhere to a predefined target task sequence. The students are unaware of this XAI-based nudging process. The only changes students perceive are the altered task attributes in the serious game.

The “quantity” and “duration” attributes were held constant across all tasks to eliminate their influence on students’ task sequencing decisions. Other attributes, such as “distance” (to a task) or “waiting time” (of tasks in the task pool), were not used for nudging and were ignored (though they still influence players’ task sequencing decisions). From Figure 3, we can infer that urgency is more important to Student 4 than priority, and that a task’s utility, that is, the value of processing this task next, increases with increasing urgency and decreases with increasing priority for this student.

Using this preference analysis, all students were broadly classified into one of five preference classes. Figure 4 shows these, along with four examples in which the preference classes were assigned to students based on their PFI values for the urgency and priority attributes and the direction (slope) of their preferences in the corresponding PDPs.

For example, Figure 3 indicates that Student 4 has PFI values of 71% and 29% for the urgency and priority attributes respectively and that the student’s preference for a task increases (“+”) with higher urgency and decreases (“-”) with higher priority. Therefore, the second preference class was assigned to Student 4. The assignment of preference classes to students was a subjective process performed based on the judgment of the first author of this paper.

To test whether students can be nudged to adhere to a target sequence, a game version with adapted deadlines and priorities was created for each of the five preference classes. Together, the first two authors of this paper adopted the perspective of the members of a preference class. They changed the task deadlines and priorities in an exploratory manner, so that the predefined target sequence was the most logical solution.

For example, in Student 4’s preference class two, where urgency influences the student’s decisions more than priority, players were encouraged to process tasks A1, A2, A4, and A5 before H7, H8, and H9 by changing their deadline from “14:00” to “11:40” (which is earlier than that of H7, H8, and H9). In this way, a game version with adapted task deadlines and priorities was developed for each preference class.

In preference classes four and five, the attribute with no importance at all was fully randomized, as it does not influence players’ sequencing decisions. Learning effects of players when playing one of the five altered game versions after the previous unaltered game could be neglected, since the players were not told how to perform well. They may be able to consolidate their task sequencing preferences further; however, this does not affect their adherence to the target task sequence, since it is unknown to the players.

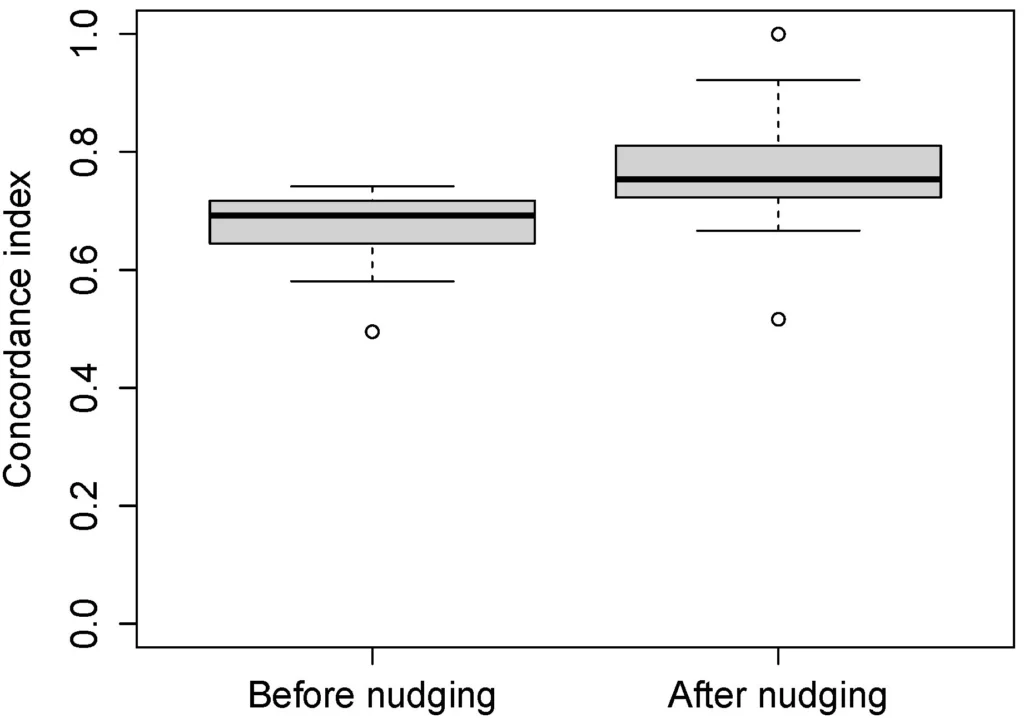

The five new game versions were played again in May 2025 with the same sample of 28 students. Each student received a version of the serious game with altered task deadlines and priorities corresponding to the preference class to which they were assigned. The students were unaware that the authors had trained an LTR model to learn their preferences, analyzed their preferences with XAI, and created new game versions with altered task deadlines and priorities. Figure 5 illustrates the concordance index distribution between the students’ selected task sequences and the predefined target task sequence before and after nudging, with medians of 0.69 and 0.75 respectively.

The median concordance index with the predefined target task sequence increased by 0.06 through nudging. A one-sided paired Wilcoxon signed-rank test confirms that this difference is significant (p<0.001). The effect size, quantified using the rank-biserial correlation, is 0.91. With this effect size, the post hoc power analysis indicates high statistical power (0.9999) for the observed effect (N=28, α=0.05, β=0.2).
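For paired data, the rank-biserial correlation can be computed from the signed ranks. The sketch below assumes the matched-pairs variant r = (W+ − W−) / (W+ + W−), where W+ and W− are the rank sums of the positive and negative differences; the paper does not state which variant was used. The input values are made up for illustration and are not the study’s data.

```python
def rank_biserial(before, after):
    """Matched-pairs rank-biserial correlation from signed ranks."""
    # Zero differences carry no sign information and are dropped.
    diffs = [a - b for a, b in zip(after, before) if a != b]
    if not diffs:
        raise ValueError("all paired differences are zero")
    abs_sorted = sorted(abs(d) for d in diffs)

    def avg_rank(value):
        # Average rank for tied absolute differences (ranks start at 1).
        first = abs_sorted.index(value) + 1
        count = abs_sorted.count(value)
        return first + (count - 1) / 2

    w_plus = sum(avg_rank(abs(d)) for d in diffs if d > 0)
    w_minus = sum(avg_rank(abs(d)) for d in diffs if d < 0)
    return (w_plus - w_minus) / (w_plus + w_minus)


# Made-up concordance values for four hypothetical participants.
before = [0.60, 0.70, 0.65, 0.72]
after = [0.70, 0.75, 0.62, 0.80]
print(rank_biserial(before, after))
```

A value of 1 means every participant improved, −1 means every participant got worse, and 0 means the positive and negative rank sums balance.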

This demonstrates an increase in concordance with the target sequence through nudging, even when several other task attributes have not been adapted or were randomized entirely. Hence, the null hypothesis—that the median concordance index of students with the predefined target task sequence is not higher (equal to or lower) after altering task deadlines and priorities in the job shop simulation using the XAI-based nudging approach—can be rejected.

Ethical aspects and recommendations for nudging workers’ sequencing decisions with XAI

Companies often create detailed schedules that give workers little autonomy in selecting the processing sequence for tasks. This is particularly true when companies utilize so-called Advanced Planning and Scheduling (APS) systems. Approaches such as those described in this paper allow workers to choose their own processing sequence for their tasks. The tasks’ attributes are influenced by the XAI-based nudging approach presented above. The aim is to nudge workers toward task sequencing decisions that are efficient for the overall manufacturing process while also maintaining their perceived autonomy.

Influencing task attributes, however, undermines workers’ autonomy over the processing sequence. Hence, XAI-based nudging may only increase workers’ subjectively perceived autonomy rather than their actual autonomy. Future studies are needed to investigate this potential discrepancy and its effects on worker well-being and job motivation.

Another concern is undermining workers’ dignity by influencing task attributes and nudging them to make better sequencing decisions [9]. Nudging without informing the recipient can be perceived as manipulation; therefore, it must be implemented and used with high transparency. Workers should be asked for their informed consent regarding the prediction and interpretation of their decisions and be given the option to opt out of the data collection process. Also, all steps in the nudging process should be made entirely transparent to them.

To deploy human-centered AI technologies, production managers should adopt a multi-stakeholder, participatory approach to work design [14]. The implementation process must be complemented with acceptance studies and the derivation of measures to accommodate the XAI-based nudging process to the workers’ needs. Hence, the affected workers should participate in interpreting their preferences, thereby reducing opacity and increasing the validity of the interpretations.

Additionally, workers should be included in discussions about the data collection and storage process. They should receive ownership of their decision data and preference analyses, and other people’s access rights should be established clearly to protect privacy. Works councils and a company’s data protection officer are additional stakeholders who should be included in the process. This ensures compliance with data and worker protection regulations but should also be reflected in company- and case-specific compliance guidelines [6].

Interpreting task sequencing preferences together with the workers mitigates the risk of reducing their personhood and preferences to numerical data. This also reduces the risk of stereotyping and discrimination when analyzing workers’ preferences with XAI. Worker participation and scrutiny will reveal weaknesses in the algorithm’s predictions and deficiencies in the XAI methods used, such as PFI and PDPs. Clear guidelines should be formulated regarding the accountability of workers and algorithms for errors and resulting costs.

Generally, this frames the algorithm differently within companies, shifting from a superior, all-knowing authority to an imperfect system that provides valuable assistance in work design [10]. Algorithmically generated schedules and XAI-based nudges must be viewed as recommendations to inform workers’ decisions, rather than as a means to control them.

XAI-based nudging as a tool

This paper presents an XAI-based nudging approach as a tool for managers to improve how tasks are presented to workers on the shop floor. The approach supports people in making task sequencing decisions that more closely align with a predefined target task sequence in job shops. In this way, workers’ autonomy is maintained while the target task sequence optimizes scheduling performance. The empirical laboratory study with industrial engineering students demonstrates the approach’s feasibility in a job shop simulation, showing that median concordance with a predefined target task sequence can be improved by about 9% (from 0.69 to 0.75) by altering only task deadlines and priorities.

The employed XAI-based nudging approach relies on human judgment and is limited by subjectivity. Furthermore, the serious game is a simplified, controlled environment that does not reflect the complexity and influencing factors of real-world job shops. Also, students do not fully exhibit the preferences and experiences of real-world workers. Laboratory conditions and the relatively small sample size also limit the generalizability of the results.

Despite these limitations, the paper demonstrates the principle of using AI to learn workers’ decisions and analyze their preferences to provide decision-support through XAI. It offers a human-centered framework that bridges the gap between workers’ perceived autonomy and algorithmic scheduling applicable to medium-sized companies with small series and batch production and job shop organizations, where queues at workstations naturally arise. The approach may also be applied to other use cases and domains where workers must choose between queues, such as sequencing assembly steps in human-robot collaboration, prioritizing order picking tasks in logistics, or vehicle routing in supply chain management.

The main ethical issues discussed concern potentially illusory autonomy, risks of manipulation, and reducing humans to numbers. The paper provides several recommendations for companies implementing the XAI-based nudging approach to mitigate these issues, such as making the nudging process entirely transparent, involving workers in the interpretation process, and protecting workers’ privacy and the possibility of consent. It is worth noting that cultural factors strongly influence perceived ethical concerns. For example, data privacy and manipulation concerns are likely different in other countries. Future research should test XAI-based algorithmic nudging in real-world manufacturing environments and elicit workers’ experience with such a system.

The approach presented here has the potential to break rigid production schedules that rely on a fixed task sequence. Through the targeted design of task attributes, it is possible to increase workers’ perceived sequencing autonomy and, at the same time, improve the likelihood of adherence to an optimal production sequence. Despite its ethical challenges, XAI-based nudging may offer a favorable alternative to rigid, algorithmically generated schedules to optimize both worker well-being and overall system performance.

Bibliography

[1] International Ergonomics Association: International Ergonomics Association-What is ergonomics. In: Acedido em 7 (2015) 4.

[2] Lee, M. K.; Nigam, I.; Zhang, A.; Afriyie, J.; Qin, Z.; Gao, S.: Participatory algorithmic management: Elicitation methods for worker well-being models. In: Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society (2021), pp. 715-726. DOI: https://doi.org/10.1145/3461702.346262.

[3] Molnar, C.: Interpretable machine learning (2020). Lulu.com. Accessed 26.06.2025.

[4] Thaler, R. H.; Sunstein, C. R.: Nudge: The final edition. Penguin (2021).

[5] Parent-Rocheleau, X.; Parker, S. K.: Algorithms as work designers: How algorithmic management influences the design of jobs. In: Human resource management review, 32 (2022) 3, p. 100838. DOI: https://doi.org/10.1016/j.hrmr.2021.100838.

[6] Tursunbayeva, A.; Pagliari, C.; Di Lauro, S.; Antonelli, G.: The ethics of people analytics: risks, opportunities and recommendations. In: Personnel Review 51 (2022) 3, pp. 900-921. https://doi.org/10.1108/PR-12-2019-0680.

[7] Benlian, A.; Wiener, M.; Cram, W. A.; Krasnova, H.; Maedche, A.; et al.: Algorithmic management: bright and dark sides, practical implications, and research opportunities. In: Business & Information Systems Engineering 64 (2022) 6, pp. 825-839. DOI: https://doi.org/10.1007/s12599-022-00764-w.

[8] Lee, M. K.: Understanding perception of algorithmic decisions: Fairness, trust, and emotion in response to algorithmic management. In: Big data & society 5 (2018) 1. https://doi.org/10.1177/2053951718756684.

[9] Schmidt, A. T.; Engelen, B.: The ethics of nudging: An overview. In: Philosophy compass, 15 (2020) 4, e12658. https://doi.org/10.1111/phc3.12658.

[10] Gal, U.; Jensen, T. B.; Stein, M. K.: Breaking the vicious cycle of algorithmic management: A virtue ethics approach to people analytics. In: Information and Organization 30 (2020) 2, p. 100301. DOI: https://doi.org/10.1016/j.infoandorg.2020.100301.

[11] Fürnkranz, J.; Hüllermeier, E.; Vanderlooy, S.: Binary decomposition methods for multipartite ranking. In: Machine Learning and Knowledge Discovery in Databases: European Conference, Bled, Slovenia (2009), pp. 359-374. DOI: https://doi.org/10.1007/978-3-642-04180-8_41.

[12] Burges, C. J.: From ranknet to lambdarank to lambdamart: An overview. In: Learning 11 (2010) 23-581, p. 81.

[13] Raschka, S.: Model evaluation, model selection, and algorithm selection in machine learning. arXiv preprint (2018), arXiv:1811.12808. DOI: https://doi.org/10.48550/arXiv.1811.12808.

[14] Nitsch, V.; Rick, V.; Kluge, A.; Wilkens, U.: Human-centered approaches to AI-assisted work: the future of work? In: Zeitschrift für Arbeitswissenschaft 78 (2024) 3, pp. 261-267. https://doi.org/10.1007/s41449-024-00437-2.
