Co-Determination Dialogues

A tool for human-centered AI implementation

| Journal | Industry 4.0 Science |

| Issue | Volume 42, Edition 1, Pages 92-98 |

| Open Access | https://doi.org/10.30844/I4SE.26.1.84 |



The provisions of the EU AI Act [1] establish dialogue between management representatives, employees, and their interest groups as a prerequisite for the operational use of AI. Article 4 makes this dialogue an integral part of technical innovation processes in companies. If they exercise their rights, employees can thus become active co-creators in the technological, organizational, and social design process.

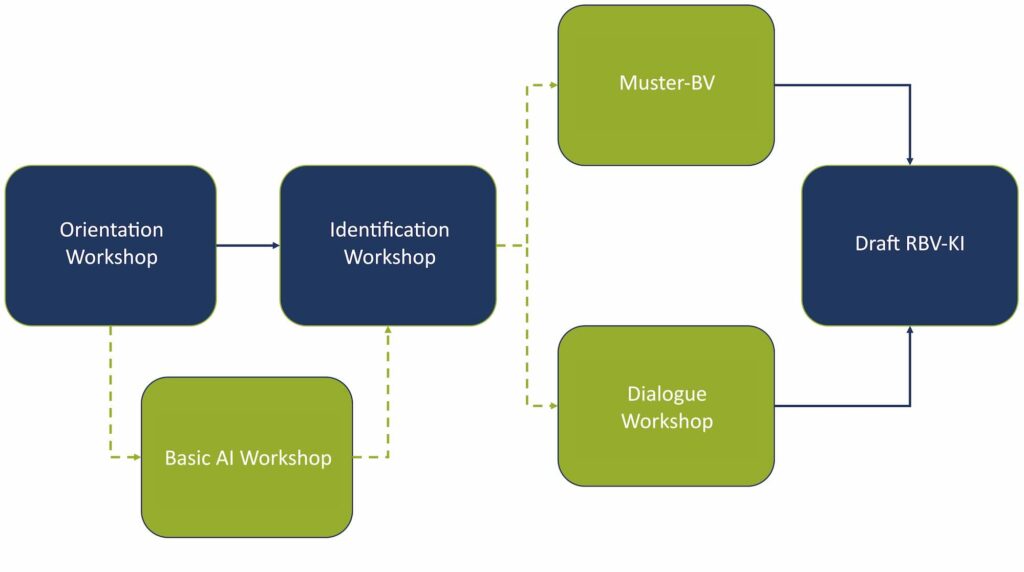

To address this complex labor policy challenge, the concept of co-determination dialogues was developed. The dialogues are intended to lead ultimately to the conclusion of a works agreement (WA) on the introduction and use of AI in companies (“HUMAINE Muster-BV KI”; Fig. 1; see Ranft et al. in this issue).

The dialogical development of regulations for the implementation and use of AI was deliberately separated from the actual negotiation of a works agreement, i.e., the formal exercise of the works council’s co-determination right. This approach is intended to facilitate a preparatory and open exchange between the parties within the company. Both formats (co-determination dialogues and HUMAINE Muster-BV KI) complement each other in a logical sequence for the legally compliant and social partnership-oriented implementation of AI in the company.

AI in companies: State of research in the sociology of work

In the sociology of work, it is empirically well established that the relationship between people, work, and technology is subject to structural power imbalances in companies. At the same time, this relationship is shaped by changing social, economic, and political conditions [2]. A human-centered introduction and design of AI (understood as ensuring working and employment conditions that are as healthy, self-determined, and competence-promoting as possible) can therefore only be achieved if employees and their collective representative bodies succeed in effectively incorporating their interests into the design of technical systems.

From a labor policy perspective [3], the introduction of AI systems can be understood as a political design process in which technological and social innovations are negotiated. Labor policy stakeholders within companies and organizations—management, employees, and their representatives—struggle for influence and power in the design of work systems. However, according to Walther Müller-Jentsch [4], this conflict-laden process can lead to positive results. Reliable regulations can be derived through structured dialogue between the actors—for example, through participation and co-determination rights, qualification measures, or company-specific agreements [5].

In current research on human-centered AI design, a range of normative criteria have been established. Eight central requirements for the design of AI work systems can be formulated [6]: Explainability (1); Trustworthiness, confidentiality, and ethics (2); Responsibility and safety culture (3); Compensation for weaknesses in the system (4); Use of knowledge from the user domain (5); Human action and augmentation (6); Physical and mental health (7); and Prevention of job losses (8).

These criteria are compatible with both works constitution regulations and the participation requirements of the EU AI Act (Articles 4 and 5) but only become effective in practice through negotiation processes within the company that are legitimized by social partnership.

Krzywdzinski’s empirical findings (2024) point to a division into “two worlds of AI in the workplace” [7]. Companies with a cooperative co-determination practice in relation to AI contrast with companies with a more conflictual co-determination practice [7]. Sociological research on workplace negotiation emphasizes that the introduction of digital technologies—including AI—should be understood less as a disruptive process and more as an incremental, organizational change process.

These negotiation processes follow specific operational path dependencies. The way in which previous organizational or technological change processes were handled in the company (e.g., whether they were more top-down, conflict-laden, or rather participatory, respecting the co-determination and participation rights of employees) also has a significant influence on the actions of the parties involved in AI introduction [8–11].

While established stakeholder constellations play a role in technology adoption, employees often also express a desire to participate in AI-influenced work contexts. The results of two quantitative online surveys on the use of AI in the workplace (2022 and 2024) [12] show that employees do not fundamentally reject the use of AI in their workplace. Instead, 75% of respondents express an interest in AI assisting them in their work in the future, albeit with the clear caveat that this only applies if AI is actively requested by employees.

This is because 85% of respondents reject AI that operates in the background without their consent, reduces their scope for action at work, and monitors their performance. An essential condition for the successful introduction of AI is therefore co-determination and participation, as enshrined in collective bargaining agreements: Compared to the initial survey in 2022, the proportion of employees who demand co-determination and participation in 2024 has risen from 74% to 81% [12].

However, whether employees’ interest in more co-determination and participation in the introduction of AI is asserted depends on political negotiation and design processes and on specific corporate power structures. Only 37% of employees are represented by a works council [13], while the majority of employees have no institutionalized representation of their interests [14]. Companies without a works council lack a collective actor to exercise and guarantee the participation rights of employees.

The concept of co-determination dialogues described in this article is primarily used in companies with a works council: Through co-determination dialogues, employee and employer representatives engage in social partnership discussions on AI guidelines and regulatory requirements.

Co-determination dialogues as a method for introducing AI

The co-determination dialogue process developed at the HUMAINE competence center is based on the recognition of experiential knowledge as a complementary knowledge resource [15]. At the heart of this process is the dialogue between parties within the company, which is moderated by scientists. Co-determination dialogues represent a qualitative approach that links scientific knowledge with the experiential knowledge of social actor groups.

However, the implicit experiential knowledge of the company’s actors is not simply taken into account in the design of transformation processes. Rather, the scientifically detached analysis of the findings identified in the co-determination dialogues reveals the potential of experiential knowledge and prepares it for the design of work and technology.

Other studies also point to the importance of participatory processes in the introduction of AI, emphasizing that humans should be at the center of the design of AI systems and should be involved in the operational design process through appropriate reflection and evaluation tools [16, 17, 18]. This highlights the relevance of dialogical formats that aim to achieve a common understanding of the opportunities and risks of AI systems.

Co-determination dialogues take place throughout the AI implementation cycle in a company, from initial information (taking into account Section 90 (1) No. 3 BetrVG; works council’s right to consultation) and the development of company intentions associated with the introduction of AI to the use of AI programs in regular operations. The dialogue series comprises three phases: joint orientation (1), the identification of areas for action in labor policy (2), and dialogue between management and the works council (WC) on the conclusion of an AI works agreement (Section 77 BetrVG) (3) (Fig. 2).

The orientation phase (1) focuses on developing a common understanding of the objectives the company is pursuing with the introduction of AI. This is followed by an assessment of the situation, a joint determination of analytical key figures (e.g., “Reduction of the reject rate through the use of AI” or “Number of employees in training measures according to Art. 4 EU AI Act”), and finally the selection of an AI pilot area (Section 91 BetrVG). The initiative to consider introducing AI software in the company does not always have to come from management. In the case of Doncasters Precision Castings, for example, it was the employee representative body that recommended the use of AI:

“Due to the high reject rate for our castings, we approached management. We, the works council, were concerned about competitiveness and jobs at the Bochum site. Taking into account the right of initiative within the meaning of Section 92a BetrVG, we presented the possibility of AI-based quality control management. Dr. Vrenegor, our Head of Technology Development, took up this initiative by the works council and contacted experts from the HUMAINE project at the Ruhr University in Bochum. Since then, we have been working together with management and the university on AI solutions and corresponding labor policy regulations.” (Dirk Stüter, Chairman of the Doncasters Works Council)

Following this basic agreement, phase (2) primarily involves working with the works council to identify key areas for action in labor policy associated with AI and to analyze existing company agreements on information technologies. Findings from previous technology introductions are used as a basis for identifying areas for action [19].

After selecting a pilot area, changes in job and qualification profiles in the pilot area are identified. This is important as employee knowledge may no longer be sufficient to perform the task after incorporating AI. Employees and interest groups must be trained on data sources, data quality, and risks before AI is deployed. After all, risks also affect ethical aspects of decision-making autonomy, monitoring, and control in the workplace according to Art. 5 EU AI Act:

“Through dialogue with scientists, we have also looked at the risk pyramid. No performance monitoring of employees, no social scoring. When using AI, the employee must always make the final decision. However, these new EU regulations do not contain any new aspects for us, because they are already regulated in accordance with Section 87 (1) No. 6 BetrVG.” (Dirk Stüter)

The analysis of agreements on the use of information technologies and the identified labor policy areas form the basis for a first draft of a “works agreement on artificial intelligence”, which is required to enter into dialogue with management (3). An agreement must be reached on whether existing IT agreements (with some additions) are sufficient or whether a specific AI agreement should be concluded. In the case of Doncasters, it was agreed to conclude a framework works agreement on AI so that each new program would not require renegotiation. This labor policy process entails intensive negotiations before a works agreement is concluded, and the final negotiations ultimately involve the legal representatives of both management and the works council.

Conclusion and outlook

Co-determination dialogues constitute a process that allows management, employees, and interest groups to jointly analyze the complex challenges of AI use and reach viable agreements. In companies with works councils, this dialogue process leads to the conclusion of “works agreements on artificial intelligence” in accordance with Section 77 of the Works Constitution Act (BetrVG) (see Ranft et al. in this issue). Co-determination dialogues can also be carried out in companies without works councils or with alternative representative bodies (AVO) [20] and can thus be adapted or simplified for use in SMEs, for example [21].

In these cases, instead of legally binding works agreements, AI guidelines may be formulated. However, it is only with institutionalized interest groups that (legally) binding regulations can be developed for manageable, company-based, sustainable AI practice.

Regardless, continuous training processes for all stakeholder groups should be initiated in accordance with Articles 4 and 5 of the EU AI Act. Co-determination dialogues enable training that accompanies the process for all participants and can contribute to expanding the traditional concept of conflict partnership to include “company transformation partnership” [11]. The co-determination dialogues tool can be transferred to other companies. However, its successful application depends largely on a company’s existing experience with negotiating technological and organizational change (path dependence).

This research and development project is funded by the Federal Ministry of Research, Technology, and Space (BMFTR) FKZ 02L19C200 and supervised by the Project Management Agency Karlsruhe (PTKA). The authors are responsible for the content of this publication.

Bibliography

[1] Regulation (EU) 2024/1689 of the European Parliament and of the Council of June 13, 2024, laying down harmonized rules on artificial intelligence and amending Regulations (EC) No. 300/2008, (EU) No. 167/2013, (EU) No. 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144, and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Regulation on artificial intelligence) Text with EEA relevance. (2024). URL: https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=OJ:L_202401689.

[2] Wannöffel, M.: Workers’ Participation at Plant Level: Conflicts, Institutionalization Processes, and Roles of Social Movements. In: Berger, S.; Pries, L.; Wannöffel, M. (eds.): The Palgrave Handbook of Workers’ Participation at Plant Level. New York 2019, pp. 36-88.

[3] Jürgens, U.; Naschhold, F.: Arbeitspolitik: Materialien zum Zusammenhang von politischer Macht, Kontrolle und betrieblicher Organisation der Arbeit. Wiesbaden 1984.

[4] Müller-Jentsch, W.: Konfliktpartnerschaft: Akteure und Institutionen der industriellen Beziehungen. Munich 1993.

[5] Haipeter, T.; Wannöffel, M.; Daus, J.-T.; Schaffarczik, S.: Human-centered AI through employee participation. In: Front. Artif. Intell. 7 (2024). DOI: https://doi.org/10.3389/frai.2024.1272102.

[6] Wilkens, U.; Lupp, D.; Langholf, V.: Configurations of human-centered AI at work: seven actor-structure engagements in organizations. In: Front. Artif. Intell. 7 (2023). DOI: https://doi.org/10.3389/frai.2023.1272159.

[7] Krzywdzinski, M.: Zwei Welten der KI in der Arbeitswelt (Weizenbaum Discussion Paper No. 39). Weizenbaum Institute 2024.

[8] Giddens, A.: The Constitution of Society. Outline of the Theory of Structuration, Cambridge: Polity Press 1984.

[9] Kuhlmann, M.: Digitalisierung und Arbeit. Eine Zwischenbilanz als Einleitung. WSI Mitteilungen 76 (2023) 5, pp. 331-336. DOI: https://doi.org/10.5771/0342-300X-2023-5-331.

[10] Haipeter, T.; Hoose, F.; Rosenbohm, S.: Arbeitspolitik in digitalen Zeiten. Entwicklungslinien einer nachhaltigen Regulierung und Gestaltung von Arbeit. Baden-Baden 2021.

[11] Niewerth, C.: Experimentierräume der Mitbestimmung: betriebliche Transformationspartnerschaften. In: Wannöffel, M.; Niewerth, C.; Hoose, F.; Urban, H.-J. (eds.): Mitbestimmung und Partizipation 2030: Demokratische Perspektiven auf Arbeit und Beschäftigung. Baden-Baden 2025, pp. 123-146.

[12] Pfeiffer, S.: (Generative) Künstliche Intelligenz (KI) als Kollegin? Gestaltung und Mitbestimmung aus Sicht der Beschäftigten. In: Wannöffel, M.; Niewerth, C.; Hoose, F.; Urban, H.-J. (eds.): Mitbestimmung und Partizipation 2030: Demokratische Perspektiven auf Arbeit und Beschäftigung. Baden-Baden 2025, pp. 247-264.

[13] Hohendanner, C.; Kohaut, S.: Tarifbindung und betriebliche Mitbestimmung: keine Trendwende in Sicht. In: IAB-Forum May 30, 2025. URL: https://iab-forum.de/tarifbindung-und-betriebliche-mitbestimmung-keine-trendwende-in-sicht/, accessed 05.11.2025.

[14] Ellguth, P.; Kohaut, S.: Tarifbindung und betriebliche Interessenvertretung: Ergebnisse aus dem IAB-Betriebspanel 2020. In: WSI-Mitteilungen 74 (2021) 4, pp. 306-314.

[15] Huchler, N.: Grenzen der Digitalisierung von Arbeit – Die Nicht-Digitalisierbarkeit und Notwendigkeit impliziten Erfahrungswissens und informellen Handelns. In: Zeitschrift für Arbeitswissenschaft 71 (2017) 4, pp. 215-223. DOI: https://doi.org/10.1007/s41449-017-0076-5.

[16] Doellgast, V.; Kämpf, T.: Co-determination meets the digital economy: Works councils in the German ICT services industry. In: Entreprises et histoire 4 (2023) 13, pp. 32-43.

[17] Stowasser, S. (ed.): Künstliche Intelligenz (KI) und Arbeit. Berlin Heidelberg 2023. DOI: https://doi.org/10.1007/978-3-662-67912-8.

[18] Stowasser, S.; Suchy, O. et al. (eds.): Einführung von KI-Systemen in Unternehmen. Gestaltungsansätze für das Change Management. White paper from the Learning Systems Platform. Munich 2020.

[19] Niewerth, C.; Schäfer, M.; Miro, M.: Leitfaden zur Einführung von Mensch-Roboter-Kollaboration: Perspektiven der Betrieblichen Interessenvertretung. Wannöffel, M.; Kuhlenkötter, B.; Hypki, A. (eds.). 2019.

[20] Ranft, A.: Alternative Wege der Interessenvertretung? Herausforderungen der Zusammenarbeit mit anderen Vertretungsorganen in betrieblichen Transformationsprojekten. In: Wannöffel, M.; Niewerth, C.; Hoose, F.; Urban, H.-J. (eds.): Mitbestimmung und Partizipation 2030: Demokratische Perspektiven auf Arbeit und Beschäftigung. Baden-Baden 2025, pp. 297-326.

[21] Mittelstand-Digital Center Kaiserslautern. KI-Verordnung: Praxisbeispiele zur Orientierung für KMU 2025.
