Guidelines for the Fair Use of Generative AI

Practical examples from production management and social welfare

| Journal | Industry 4.0 Science |

| Issue | Volume 42, 2026, Edition 1, Pages 22-28 |

| Open Access | https://doi.org/10.30844/I4SE.26.1.22 |



ChatGPT, Gemini, Copilot, and other generative AI-based assistants are being introduced in more and more companies. They can be employed for a wide range of tasks, including for creating, correcting, translating, and summarizing texts as well as for programming and control tasks, problem solving, and knowledge generation.

Companies are also recognizing that they need company-specific usage strategies to tap into the productivity potential [1] of these AI tools. Beyond issues such as the protection of sensitive company data [2] and the risk of so-called “hallucinations” [3], introducing generative AI can also provoke resistance among employees, e.g., due to concerns about impending job losses [4, 5]. For the trustworthy and responsible use of the technology, it is therefore particularly important to consider fairness criteria (non-discrimination, no performance monitoring, job promotion/security) and to develop AI guidelines on that basis.

A number of good practice examples (e.g., Deutsche Telekom’s AI manifesto) and general guidelines are now available to operational decision-makers as a “blueprint” for developing employee-oriented guidelines [6]. However, it is essential for successful implementation that company guidelines are developed with the participation of the various stakeholder groups and taking into account the areas of application within the company [7]. This requires the identification of specific potential uses and risks in the company setting. But how can companies identify the potential and risks of AI use in a participatory manner and incorporate them into their AI guidelines?

As part of the HUMAINE project, the Institute for Work, Skills and Training at the University of Duisburg-Essen has developed the FriendlyTechCheck (FTC), a dialogue process that enables company stakeholders to independently identify design requirements for the implementation of artificial intelligence and use them, for example, to develop guidelines [8, 9]. This article presents the process. Based on two practical examples from companies, it shows how the FTC can support the development of context-sensitive and employee-oriented AI guidelines in companies.

FriendlyTechCheck for risk and potential analysis

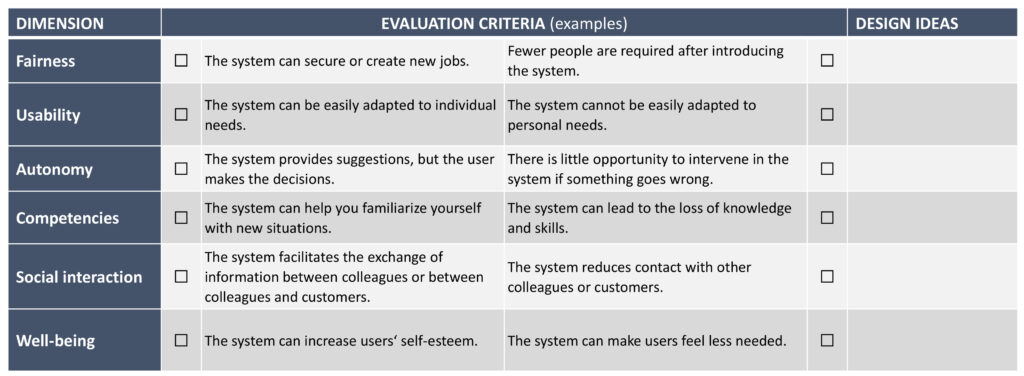

The aim of the FTC is to enable those responsible for work design in companies to analyze AI systems in their respective socio-technical contexts with regard to resource-promoting (“friendly AI”) and harmful effects (“unfriendly AI”). The tool is based on established criteria for humane work design (e.g., learning supportiveness, harmlessness) for the introduction and use of digital technologies [10] and covers various dimensions (Fig. 1). The theoretical basis is a modification of Demerouti’s demand-resource model [11].

The FTC can be used during planning as well as during and after technology introduction. The procedure aims to facilitate dialogue between various operational stakeholders in order to take different perspectives into account. The evaluation of an AI system using the FTC checklist is therefore generally carried out by several operational stakeholders (e.g., managers, works councils, users). It is embedded in a workshop format moderated by trained company stakeholders (e.g., from the works council or from the human resources or occupational safety department). Participants should have knowledge of how the planned AI system works and initial experience of using it.

The workshop (duration approx. 1.5 hours, depending on operational requirements) consists of the following phases:

- Survey phase: Participants evaluate the AI system using the FTC checklist. The risk and potential analysis along the FTC dimensions takes less than ten minutes to complete.

- Evaluation phase: The collected data is presented graphically and made available to the moderator. The moderator then presents the results of the screening and asks participants to place them in their company context. The aim is to establish a specific contextual reference to the identified potentials and risks, which is essential for the subsequent development of design measures.

- Design phase: Against the backdrop of the identified potentials and risks, the participants develop concrete design ideas. This includes both the promotion of potentials and the preventive reduction of risks through accompanying measures. The design ideas are evaluated by the participants in terms of their feasibility and relevance. The documentation of the results is forwarded to those responsible for implementation in the company (unless the participants already have the relevant authority or resources).
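The step from the survey phase to the evaluation phase can be illustrated with a minimal sketch. It assumes that each participant rates each FTC dimension on a scale from -2 (clear risk) to +2 (clear potential) and that the moderator is shown the mean rating per dimension. The scale, the threshold, the sample responses, and the function names `summarize` and `classify` are hypothetical and serve only as illustration; the article does not specify the FTC checklist items or its scoring.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses: (participant, dimension, rating).
# Assumed scale: -2 (clear risk) to +2 (clear potential). The real FTC
# items and scale are not specified in this article.
responses = [
    ("P1", "well-being", 2), ("P1", "skills", 1), ("P1", "fairness", -1),
    ("P2", "well-being", 1), ("P2", "skills", -1), ("P2", "fairness", -2),
    ("P3", "well-being", 1), ("P3", "skills", 0), ("P3", "fairness", -1),
]


def summarize(responses):
    """Return the mean rating per dimension across all participants."""
    by_dimension = defaultdict(list)
    for _participant, dimension, rating in responses:
        by_dimension[dimension].append(rating)
    return {dim: mean(vals) for dim, vals in by_dimension.items()}


def classify(score, threshold=0.5):
    """Label a mean score; the threshold is an illustrative assumption."""
    if score >= threshold:
        return "potential"
    if score <= -threshold:
        return "risk"
    return "mixed"


summary = summarize(responses)
for dimension, score in summary.items():
    print(f"{dimension}: {score:+.2f} ({classify(score)})")
```

A summary of this kind would only be the starting point for the group discussion: as the case studies below show, similar ratings can mean different things in different usage contexts.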

Practical application in the company

Below, we provide an insight into how the FTC was used in two different fields of application and what implications this had for the development of AI guidelines. At Fraunhofer IAO, the Production Excellence department used the method to assess the risks and potential of generative AI-based chatbots in production management. The nine participants (seven members of project staff, one manager, one occupational safety specialist) are responsible for planning logistics and production processes in customer and research projects and have initial experience of using ChatGPT. The tool is used here for research purposes, programming, and to support the preparation of quotations and reports.

The second group consisted of six employees from Iserlohner Werkstätten gGmbH, most of whom work in the field of digitalization and represent the interests of workshop employees. The group (one supervisor, three employees from the technical department, and two representatives from the works council) was given the opportunity to act as ‘early adopters’ and identify potential areas of application for ChatGPT in workshops for people with disabilities. Among other things, AI is used here to populate a self-developed content management system for creating personalized work instructions for workshop employees. The participants expected the FTC deployment to provide insights into the potentials and design requirements associated with a possible rollout of generative AI.

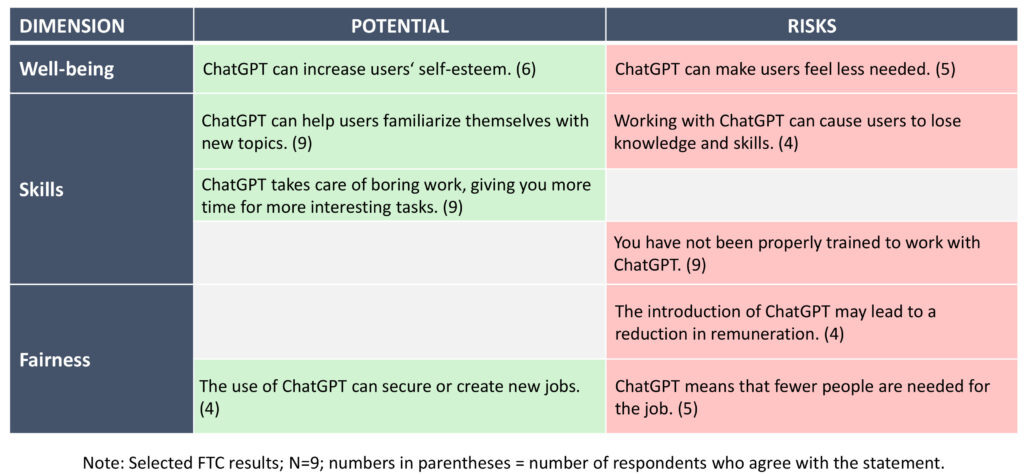

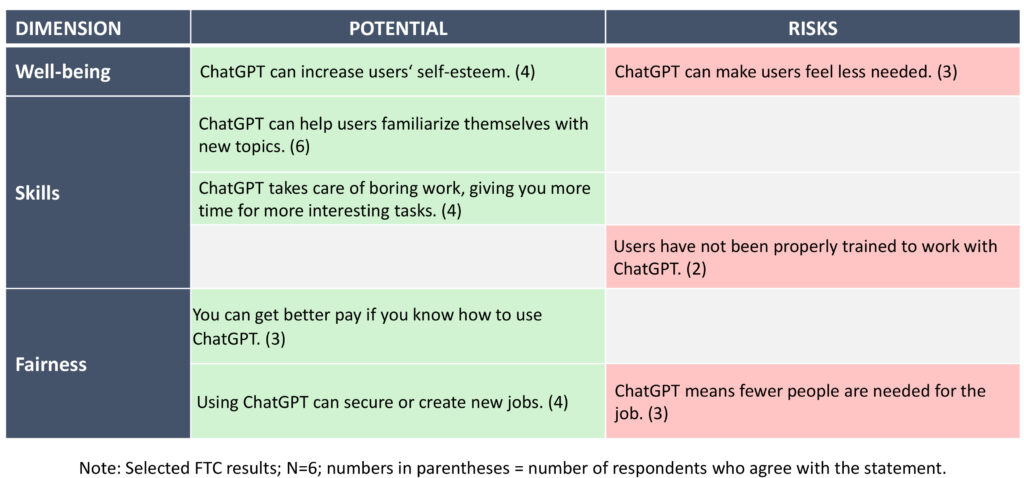

The key findings based on the evaluation of the FTC surveys and workshops for both case studies are presented separately below. We focus on the dimensions of well-being, skills, and fairness. For results on the other FTC dimensions, see [12].

Key findings

The FTC results in the area of production management (Fig. 2) show that participants see considerable potential in the use of ChatGPT. For example, respondents report that using ChatGPT enables them to improve the linguistic quality of applications or reports, which boosts their self-esteem. Potential is also seen in familiarizing oneself with new, particularly non-specialist topics and reducing processing times for bureaucratic tasks such as business trip requests.

In addition to these potentials for relief and learning, however, respondents also see challenges, particularly with regard to long-term effects on professional status and employment opportunities. The majority of participants fear that their expertise may be less in demand among customers in the future. One project employee commented: “But if it turns out that artificial intelligence produces results just as good as you do, there’s no reason why what you do is still a valuable job.” The majority of engineers assume that ChatGPT will not completely replace their work and that instead there will be a shift in tasks toward control activities.

However, there are fears, particularly in the area of project acquisition, that project providers will reduce volumes due to the possibilities of automation, which could lead to involuntary part-time work or shorter project durations. All participants also see the lack of systematic training in the use of ChatGPT as a central problem in its current operational deployment, which they believe could have a negative long-term impact on both career opportunities and the productivity of the institute.

At first glance, we see some similar FTC assessments in social welfare (Fig. 3). However, during the group discussion, it becomes clear that these must be viewed in a different (usage) context. The rehabilitation specialists employed in the workshops hope that the introduction of a company-specific ChatGPT will free up time for their original educational tasks. For example, the content management system currently being developed by the digitalization department will use ChatGPT to support the creation of needs-based work instructions for workshop employees.

The participants do not see any particular employment risks for either the educational staff or the people with disabilities entrusted to them in the workshops when introducing an in-house ChatGPT: “I don’t think we’ll be cutting jobs. Instead, the task will shift” (supervisor). The question of appropriate training in the use of generative AI is less acute due to its current ‘experimental status’. However, involving workshop employees in the use of and training in ChatGPT is considered an important task for the future as it ensures that they are prepared for the primary labor market: “But the [training] should definitely be provided because it simply breaks down barriers” (technical department employee).

Implications for a human-centered generative AI usage strategy

The results show that the impact of generative AI on work and employment is ambivalent and context-dependent. Participants in both FTC workshops rated ChatGPT as a useful tool. At the same time, key design requirements were discussed. In production management, these mainly related to the acquisition of new skills in the use of generative AI and dealing with potential employment risks. In the case of social welfare, any negative effects were considered less acute due to the ‘experimental status’ of the technology. Nevertheless, training and organizational guidelines are required in order to ensure the quality of work and compliance with data protection requirements.

In both cases, the development and implementation of an AI usage strategy geared toward design requirements was assessed as a decisive success factor in leveraging the potential of AI use in business. From the perspective of those involved, the following guidelines are central:

- Agree on clear guidelines and governance: Binding rules for the use of ChatGPT should be defined, for example with regard to permissible tasks and data entries. The introduction of user councils with equal representation, which test and evaluate new AI systems and act as contact persons for employees, was considered useful.

- Raise awareness and train AI users: Both groups emphasized the need to train all potential users before introducing new AI tools in order to raise risk awareness and ensure (legally) safe use in everyday business operations. This could also help to alleviate fears about AI.

- Offer training: The participants believe that employers have a duty to offer comprehensive and mandatory training when introducing ChatGPT and similar tools so as not to give a one-sided advantage to tech-savvy or high-performing employees. Otherwise, existing inequalities in digital skills could have a negative impact on productivity and career opportunities.

- Create access: Against this backdrop, participants from the welfare sector in particular emphasized that, despite the additional costs for licenses and training measures, all employees (and employee groups) should be given easy access to ChatGPT.

- Consider the consequences of automation: Both groups see a low risk that the introduction of ChatGPT will eliminate entire work tasks. Nevertheless, they consider a potential and risk analysis to be necessary, even in the case of partial substitution risks, in order to open up alternative fields of activity or training opportunities for employees at an early stage.

Preliminary conclusion

Anyone who wants to tap into the potential of generative AI for greater business success and economic growth would be well advised to do so in a fair and responsible manner [13]. The insights gained from the use cases have shown that the FTC method can be helpful for participatory potential and risk analysis and the subsequent development of operational AI usage strategies and guidelines.

The operational partners perceive the method as simple and time-efficient, and the workshop participants appreciate its dialogical nature: the different perspectives of the stakeholders involved in the process can be discussed on an equal footing. The use of the FTC thus made it possible to take a first step toward a technology assessment that is often overlooked in day-to-day business—a circumstance that is likely to apply in particular to SMEs, which often have limited resources for designing digitization processes. Further testing of the FTC will show to what extent the identified design requirements and guidelines can be transferred to other companies and use cases.

This article was written as part of the HUMAINE research project, which was funded by the BMFTR funding program “Future of Value Creation – Research on Production, Services, and Work” (funding code: 02L19C201).

Bibliography

[1] Noy, S.; Zhang, W.: Experimental evidence on the productivity effects of generative artificial intelligence. In: Science 381 (2023), pp. 187-192.

[2] DIHK: Was Unternehmen beim Umgang mit generativen KI-Anwendungen beachten sollten. DIHK-Leitfaden am Beispiel von ChatGPT. URL: https://www.dihk.de/de/themen-und-positionen/wirtschaft-digital/Digitalisierung/was-unternehmen-beim-Umgang-mit-Generativen-KI-Anwendungen-beachten-sollten-94832, accessed 12.06.2025.

[3] Bitkom: Künstliche Intelligenz in Deutschland – Status quo und Ausblick. URL: https://www.bitkom.org/sites/main/files/2024-10/241016-bitkom-charts-kuenstliche-intelligenz-final.pdf, accessed 12.06.2025.

[4] Eloundou, T.; Manning, S. et al.: GPTs are GPTs: Labor market impact potential of LLMs. In: Science 384 (2024) 6702, pp. 1306-1308.

[5] Gmyrek, P.; Berg, J.; Bescond, D.: Generative AI and jobs: A global analysis of potential effects on job quantity and quality. URL: https://ssrn.com/abstract=4584219, accessed 12.06.2025.

[6] Zickerick, B.: KI-Leitlinien für Unternehmen: ein partizipativer Ansatz für einen verantwortungsvollen Einsatz Künstlicher Intelligenz. In: GfA (ed.): Arbeit 5.0: Menschzentrierte Innovationen für die Zukunft der Arbeit. Sankt Augustin 2025.

[7] Lauer, T.: Change Management. Fundamentals and Success Factors. 1st edition, Heidelberg 2021.

[8] Gerlmaier, A.; Bendel, A.: Künstliche Intelligenz am Arbeitsplatz gesundheits- und lernförderlich gestalten: Entwicklung und Erprobung des FriendlyTechChecks für betriebliche Praktiker:innen. In: Dettmers, J.; Tisch, A.; Trimpop, R. (eds.): 23. Workshop Psychologie der Arbeitssicherheit und Gesundheit – Gesundheitsförderliche Arbeit = attraktive Arbeit? Kröning 2024.

[9] Gerlmaier, A.; Kramer, P.-F.: Humanzentrierte Arbeitsgestaltung beim Einsatz künstlicher Intelligenz: Evaluation des Dialogverfahrens „FriendlyTechCheck“ in verschiedenen Arbeitskontexten. In: GfA (ed.): Arbeit 5.0: Menschzentrierte Innovationen für die Zukunft der Arbeit. Sankt Augustin 2025.

[10] Kluge, A.; Ontrup, G. et al.: Mensch-KI-Teaming: Mensch und Künstliche Intelligenz in der Arbeitswelt von morgen. In: Zeitschrift für wirtschaftlichen Fabrikbetrieb 116 (2021) 10, pp. 728-734.

[11] Demerouti, E.: Turn Digitalization and Automation into a Job Resource. In: Applied Psychology 71 (2022) 4, pp. 1205-1209.

[12] Gerlmaier, A.; Kramer, P.-F.: How human friendly is ChatGPT for knowledge workers? Analyzing opportunities and risks of generative AI with the FriendlyTechCheck (FTC). In: E|N|E|T|O|S|H Facts 07 (2024).

[13] Adolph, L.; Kirchhoff, B.: Künstliche Intelligenz in der Arbeitswelt. In: GfA (ed.): Die Arbeit von morgen: digital, intelligent, nachhaltig – effizient. Dortmund 2024.