Pre-Stages of GenAI Governance via Managerial Communication

Exploratory findings from SMEs in the Ruhr area

| Journal | Industry 4.0 Science |
|---|---|
| Issue | Volume 42, Edition 1, Pages 6–13 |
| Open Access | https://doi.org/10.30844/I4SE.26.1.6 |


Generative artificial intelligence (GenAI) tools such as ChatGPT, Gemini, or Microsoft Copilot hold a number of promises for small and medium-sized enterprises (SMEs), such as efficiency gains or process automation [1]. Combined with comparatively low costs, broad accessibility, and diverse application possibilities, GenAI changes the competitive landscape for SMEs because it democratizes scalability and creativity [2, 3]. Exploiting this potential requires an organizational governance structure that addresses the ethical challenges of AI use, a requirement that is particularly important for SMEs [3–6].

While it is well understood that institutional corporate responsibility is underdeveloped and that this hinders GenAI implementation [7], little is known about how SMEs perceive GenAI risks and responsibilities and which practices they have established so far from a procedural perspective. Deeper insights are valuable for building a support structure that expands on existing approaches.

In the following sections, we characterize AI governance as a reference framework, explore the current state of GenAI governance in the Ruhr region, substantiate the findings with insights from a case study, and finally suggest next steps.

GenAI governance: A conceptual framework

GenAI in SMEs is considered not only a possible game changer [8] but also an ethical challenge subject to new regulatory demands (e.g., the EU AI Act) [9]. In large corporations, coping with these ethical challenges is an established practice, for example to prevent the unchecked use of GenAI tools [10]. Even though SMEs operate largely outside public control, they are no less in need of institutionalized mechanisms and practices that ensure the responsible use of GenAI [3, 11].

Governance aims to unlock the potential of GenAI in organizations while mitigating the associated risks. Governance describes a formalized and institutionalized structure embodied in legal regulations, in this case the EU AI Act [12], as well as in established reporting mechanisms for dealing with stakeholder demands in areas where risk-based externalities might occur. This can include codes of conduct, corporate declarations, and similar company-wide guidelines [13].

With respect to GenAI governance, scholars further distinguish structural, procedural, and relational governance mechanisms [14, 15]. Structural mechanisms cover aspects like the location of responsibility and decision-making authority, while procedural mechanisms include defining an AI strategy, developing guidelines and processes, and monitoring the use of GenAI to ensure compliance with legal and organizational requirements. Supplementary relational mechanisms support cooperation between stakeholders by transparently sharing information about the use of GenAI and by creating training opportunities for its safe and competent use [14, 15].

This distinction is of particular relevance when a new governance topic such as GenAI requires institutionalization. It also provides a way of defining governance requirements for SMEs. Governance in SMEs is leadership-oriented and therefore needs to reflect managerial accountability and leadership roles [16–18]. This is why managers’ responsibility, openness, and answerability can also be regarded as first steps towards organizational governance [19].

Taken together, framing GenAI governance for SMEs includes institutionalized reporting structures in reference to the EU AI Act, but also less institutionalized ways of demonstrating managerial responsibility in terms of specifying responsible authorities, formulating explicit guidelines, establishing means of exchange for overcoming ethical challenges, and generating individual risk awareness. Excluding these managerial aspects would likely cause research to overlook possible first steps towards GenAI governance.

Empirical insights from an initial screening among SMEs in the Ruhr area

As part of the HUMAINE project, we conducted a screening among the member companies of the Chamber of Industry and Commerce for the Central Ruhr area (IHK Mittleres Ruhrgebiet). The Chamber represents the interests of more than 37,500 companies from various industries operating in the German Ruhr area, thus constituting an invaluable source for exploring the current state of GenAI governance on a regional level.

The analysis was based on a standardized questionnaire covering five thematic areas: (1) forms of GenAI usage in the organization, (2) perceived risks, (3) implemented practices for enhancing responsibility, (4) core managerial activities, and (5) basic organizational data, particularly company size, for further contextualization [9, 15, 19–21]. Items were rated on a five-point scale ranging from 1 (“strongly disagree”) to 5 (“strongly agree”). To ensure the applicability of the questionnaire, all items were pre-tested with members of a medium-sized company.

The screening instrument with its standardized measures was sent to 6,846 organizations via an IHK distribution list. The survey addressed managing directors and owners of SMEs as key informants and yielded a total of 56 evaluable responses within one week. This low turnout can be attributed to the brief survey period, the target group being addressed via top management [22, 23], and the specialized nature of the topic. The low response rate also suggests that GenAI governance is not high on the agenda for most SMEs.
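To put the turnout into perspective, the response rate implied by the two reported counts (56 evaluable responses out of 6,846 contacted organizations) can be worked out directly. This is simple arithmetic on the figures stated above, not an additional result of the survey, and it ignores any bounced or undeliverable invitations, which the text does not report:

$$
\text{response rate} = \frac{56}{6{,}846} \approx 0.0082 \approx 0.8\,\%
$$

Fewer than one in a hundred contacted organizations returned an evaluable questionnaire, which is why the screening is best read as exploratory.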

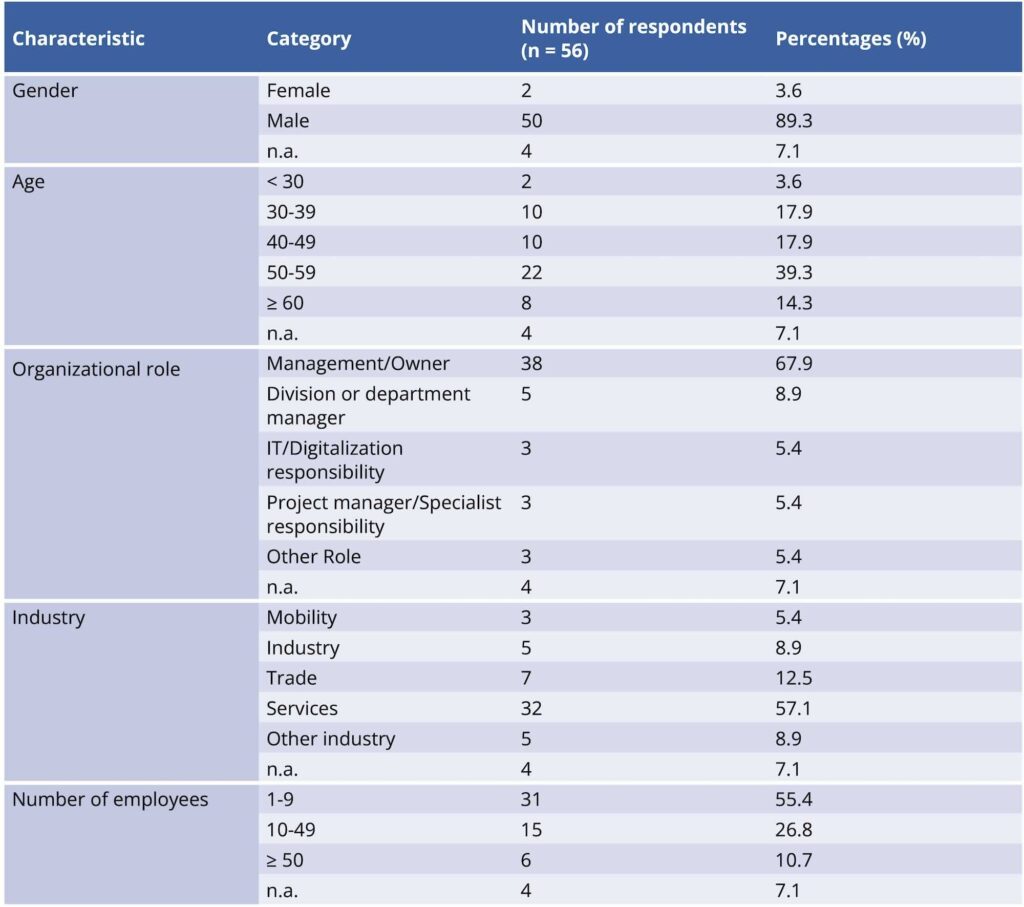

Self-reported data from organizational decision-makers may be subject to social desirability bias [24]; to reduce this bias, data were collected anonymously and items were phrased neutrally. Figure 1 gives a brief summary of the demographic characteristics of the respondents. Statistical analyses showed that response rates do not correlate with company size.

The survey results indicate that over 80% of organizations use GenAI primarily for information gathering and research (84%) and for formulating, summarizing, and translating (86%). It seems that GenAI software is primarily used as a substitute for pre-GenAI applications, especially Google applications. About half of SMEs use GenAI for brainstorming (52%) and for creating templates and draft concepts (48%). Only a minority uses GenAI for image generation (29%) or for programming and formula tasks (23%). The findings suggest a conservative use of GenAI that does not explore new dimensions of its technological potential. In such environments, neither risk awareness nor an institutionalized AI governance structure can reasonably be expected.

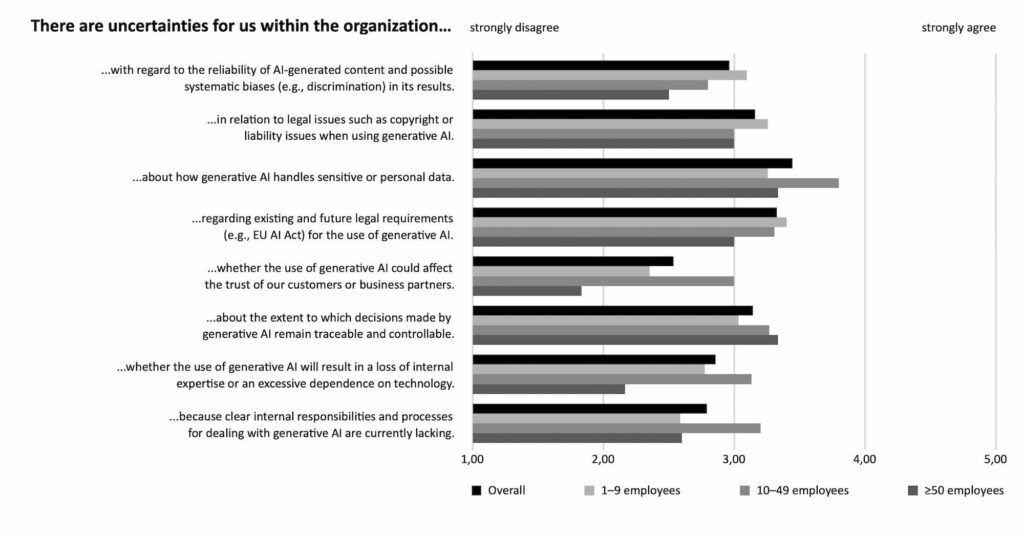

Asked about uncertainties and risks, respondents rate almost all potential risk categories as below average. Only with regard to data privacy, the legal framework of the EU AI Act, and the opacity of black-box decision-making do they show moderate risk awareness (Fig. 2).

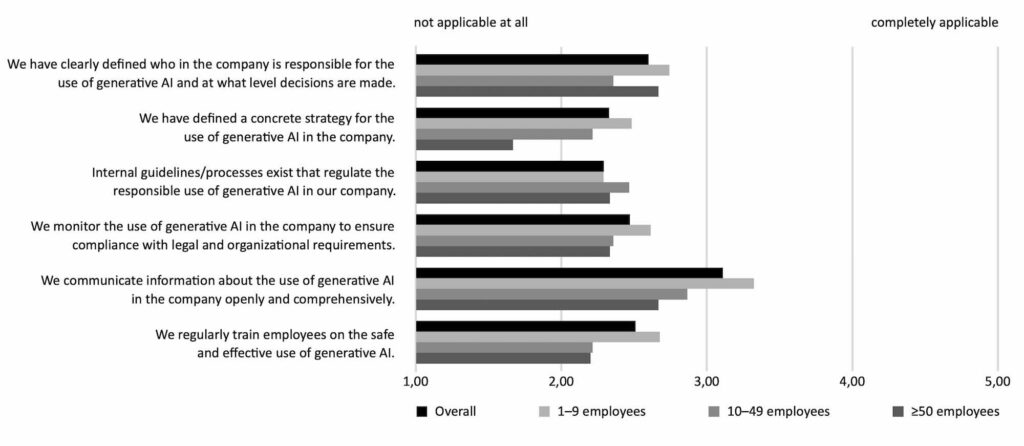

Consistent with this low risk awareness, respondents acknowledge a marked lack of established authorities or practices for dealing with GenAI risks (Fig. 3). Only with regard to communication practices do managers indicate a medium level of responsibility.

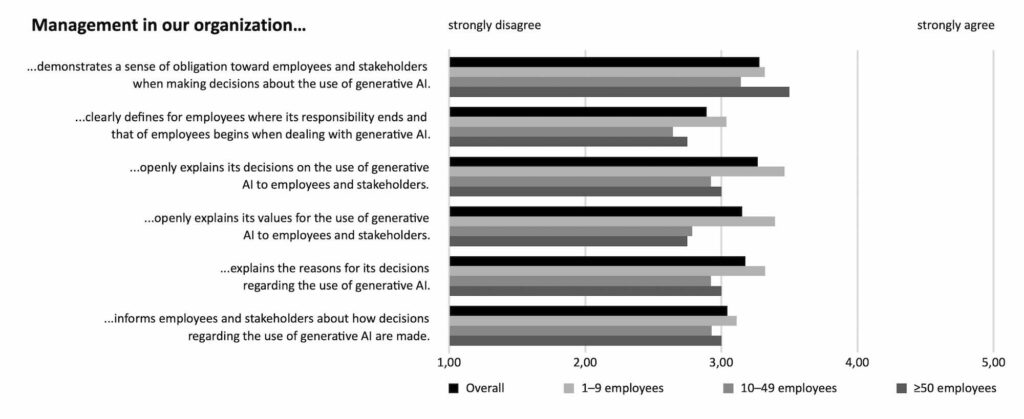

Given the lack of institutionalized responsibility, it is interesting to note how managers describe their individual role and activities. Respondents see themselves as personally and organizationally responsible for the use of GenAI in their organizations, at least to a medium degree (Fig. 4).

In summary, the screening of regional SMEs from the Ruhr area shows that GenAI governance is on the agenda of only a few companies. Where the topic is reflected upon, rather conventional ways of using the technology tend to emerge, similar to those offered by earlier software products. Risk awareness is rather low, and institutionalized AI governance is missing. Responsible AI is considered and practiced as a managerial communication task. SMEs from the Ruhr area are thus far from practicing GenAI governance, which reveals both a high demand for more advanced technology usage and a corresponding need for GenAI governance.

Insights from the SEEPEX case study

The screening provides a first overview but does not offer any information about the development process within SMEs. In this regard, the qualitative data from the SEEPEX case, a medium-sized pump manufacturer from the Ruhr area, affords a more nuanced picture. The case study includes a series of interviews with employees confronted with GenAI implementation during a six-month period in 2025. The interviews were conducted at two measurement points by two independent researchers.

At the beginning of the implementation period, employees used the GenAI tool rather conservatively, given their concerns about exposing themselves to penalties for sharing personal or company-related data in their prompts and requests.

Even at this stage, the relevance of managerial communication as a first step towards AI governance becomes clear. Extensive clarification and clear communication about how sensitive data should be handled when interacting with GenAI mitigated the initial uncertainty. Although an institutional approach was missing, managerial responsibility served as a substitute for coping with GenAI risks at the beginning of the process. In the next stage of deployment, SEEPEX defined responsibilities for the GenAI implementation process and specified guidelines for the use of GenAI as implementation progressed. These guidelines have a technical focus on the trustworthiness of data [20].

The case study indicates that GenAI governance does not always take the form of an established reporting system but can consist of an interactive way of clarifying the implementation process. During the early stage of implementation, it is managerial risk awareness and responsibility that favor risk avoidance. Managerial practices should therefore be considered early-stage governance [14]. Clearly, this approach has limitations when it comes to company-wide use of GenAI and more advanced ways of leveraging its technological potential. It must therefore be complemented by more deeply embedded, structural measures as development continues.

Discussion: Evolving GenAI governance in SMEs

As the empirical findings from the Ruhr area suggest, structural and procedural control mechanisms, such as formal guidelines or monitoring systems, are almost entirely missing. At the current stage of development, responsible use of GenAI instead rests on communicative practices and social interaction. Although these informal ways of coping with ethical challenges bear risks and indicate deficits, they are nonetheless highly valuable for nascent GenAI governance.

In line with other scholars [16, 17], we affirm that the relevance of informal governance should not be underestimated when it comes to GenAI implementation in SMEs, as it is often an important starting point for establishing explicit guidelines and control structures [25].

Taken together, the findings from the screening and the more detailed insights from a local case study in the Ruhr area indicate that, as long as informal substitutes in the form of managerial communication and practices exist, a lack of GenAI governance does not necessarily encourage risky behavior.

The empirical results reveal early-stage practices that are evolving into a governance structure for responsible GenAI use in SMEs. We agree with previous studies [16, 17] that existing governance models have not yet adequately considered the specific conditions of SMEs. Such models could emphasize managerial communication and practices as a relevant pre-stage of GenAI governance that enables the quick adoption of AI in corporate problem solving.

Beyond these research outcomes, the findings reveal a high demand among practitioners to advance not only GenAI usage but also the corresponding governance mechanisms. For all actors and institutions that provide a support structure for regional development, e.g., the IHK or the competence center HUMAINE, this sets out clear requirements for future support activities.

This article was written as part of the project “HUMAINE (human-centered AI network) – Transfer-Hub of the Ruhr Metropolis for human-centered work with AI”, which is funded by the German Federal Ministry of Research, Technology and Space in the program “Future of Value Creation – Research on Production, Services and Work” and supervised by the Project Management Agency Karlsruhe (PTKA) (funding code: 02L19C200).

Bibliography

[1] Lu, X.; Wijayaratna, K.; Huang, Y.; Qiu, A. (2022). AI-Enabled Opportunities and Transformation Challenges for SMEs in the Post-pandemic Era: A Review and Research Agenda. Frontiers in Public Health, 10, 885067. https://doi.org/10.3389/fpubh.2022.885067

[2] Oldemeyer, L.; Jede, A.; Teuteberg, F. (2025). Investigation of artificial intelligence in SMEs: A systematic review of the state of the art and the main implementation challenges. Management Review Quarterly, 75(2), 1185–1227. https://doi.org/10.1007/s11301-024-00405-4

[3] Rajaram, K.; Tinguely, P. N. (2024). Generative artificial intelligence in small and medium enterprises: Navigating its promises and challenges. Business Horizons, 67(5), 629–648. https://doi.org/10.1016/j.bushor.2024.05.008

[4] Rana, N. P.; Pillai, R.; Sivathanu, B.; Malik, N. (2024). Assessing the nexus of Generative AI adoption, ethical considerations and organizational performance. Technovation, 135, 103064. https://doi.org/10.1016/j.technovation.2024.103064

[5] Novelli, C.; Taddeo, M.; Floridi, L. (2024). Accountability in artificial intelligence: What it is and how it works. AI & SOCIETY, 39(4), 1871–1882. https://doi.org/10.1007/s00146-023-01635-y

[6] Zavodna, L. S.; Überwimmer, M.; Frankus, E. (2024). Barriers to the implementation of artificial intelligence in small and medium-sized enterprises: Pilot study. Journal of Economics and Management, 46, 331–352. https://doi.org/10.22367/jem.2024.46.13

[7] Stowasser, S. (2025). Modelle zur strukturellen Einbindung von Künstlicher Intelligenz. Industry 4.0 Science, 41(5), 144–151. https://doi.org/10.30844/I4SD.25.5.144

[8] Kshetri, N.; Rojas-Torres, D.; M. Hanafi, M.; Al-kfairy, M.; O’Keefe, G.; Feeney, N. (2024). Harnessing Generative Artificial Intelligence: A Game-Changer for Small and Medium Enterprises. IT Professional, 26(6), 84–89. https://doi.org/10.1109/MITP.2024.3501552

[9] Hagendorff, T. (2024). Mapping the Ethics of Generative AI: A Comprehensive Scoping Review. Minds and Machines, 34(4), 39. https://doi.org/10.1007/s11023-024-09694-w

[10] Mok, A. Amazon, Apple, and 12 other major companies that have restricted employees from using ChatGPT. Business Insider. URL: https://www.businessinsider.com/chatgpt-companies-issued-bans-restrictions-openai-ai-amazon-apple-2023-7, accessed 15.06.2025.

[11] Kumar, A.; Shankar, A.; Hollebeek, L. D.; Behl, A.; Lim, W. M. (2025). Generative artificial intelligence (GenAI) revolution: A deep dive into GenAI adoption. Journal of Business Research, 189, 115160. https://doi.org/10.1016/j.jbusres.2024.115160

[12] EU AI Act (EU Artificial Intelligence Act). Der AI Act Explorer. URL: https://artificialintelligenceact.eu/de/ai-act-explorer/, accessed 20.06.2025.

[13] Müller-Stewens, G.; Lechner, C.; Kreutzer, M.; Stonich, J. (2024). Strategisches Management (6th ed.). Stuttgart: Schäffer-Poeschel.

[14] Mäntymäki, M.; Minkkinen, M.; Birkstedt, T.; Viljanen, M. (2022). Defining organizational AI governance. AI and Ethics, 2(4), Article 4. https://doi.org/10.1007/s43681-022-00143-x

[15] Schneider, J.; Kuss, P.; Abraham, R.; Meske, C. (2024). Governance of generative artificial intelligence for companies. arXiv preprint arXiv:2403.08802.

[16] Handley, K.; Molloy, C. (2022). SME corporate governance: A literature review of informal mechanisms for governance. Meditari Accountancy Research, 30(7), 310–333. https://doi.org/10.1108/MEDAR-06-2021-1321

[17] Teixeira, J. F.; Carvalho, A. O. (2024). Corporate governance in SMEs: A systematic literature review and future research. Corporate Governance: The International Journal of Business in Society, 24(2), 303–326. https://doi.org/10.1108/CG-04-2023-0135

[18] Miladi, A. I. (2014). Governance for SMEs: Influence of leader on organizational culture. International Strategic Management Review, 2(1), 21–30. https://doi.org/10.1016/j.ism.2014.03.002

[19] Wood, J. A.; Winston, B. E. (2007). Development of three scales to measure leader accountability. Leadership & Organization Development Journal, 28(2), 167–185. https://doi.org/10.1108/01437730710726859

[20] Wilkens, U.; Lupp, D.; Langholf, V. (2023). Configurations of human-centered AI at work: Seven actor-structure engagements in organizations. Frontiers in Artificial Intelligence, 6, 1272159. https://doi.org/10.3389/frai.2023.1272159

[21] Gerlich, M. (2025). AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking. Societies, 15(1), 6. https://doi.org/10.3390/soc15010006

[22] Cycyota, C. S.; Harrison, D. A. (2006). What (Not) to Expect When Surveying Executives: A Meta-Analysis of Top Manager Response Rates and Techniques Over Time. Organizational Research Methods, 9(2), 133–160. https://doi.org/10.1177/1094428105280770

[23] Borgholthaus, C. J.; Bourgoin, A.; Harms, P. D.; White, J. V.; Fezzey, T. N. A. (2025). Surveying the Upper Echelons: An Update to Cycyota and Harrison (2006) on Top Manager Response Rates and Recommendations for the Future. Organizational Research Methods, 10944281241310574. https://doi.org/10.1177/10944281241310574

[24] Fisher, R. J. (1993). Social Desirability Bias and the Validity of Indirect Questioning. Journal of Consumer Research, 20(2), 303. https://doi.org/10.1086/209351

[25] Nadjib, T.; Wilkens, U. (2025). Vertrauensaufbau durch GenAI-Governance bei einer VW-Tochter. PERSONALquarterly, (04), 28–33.
