Human-Centered AI in Companies with Employee Representation

Using the HUMAINE model for a company-specific works agreement

Journal: Industry 4.0 Science

Issue: Volume 42, Edition 1, Pages 14-21

Open Access: https://doi.org/10.30844/I4SE.26.1.14


The use of artificial intelligence (AI) in companies has been considered a key factor for the successful transformation of work and the economy for years. Although the actual spread of such systems remained limited for a long time [1], AI applications were recognized early on as having the potential to profoundly impact work processes, which is why the first regulatory debates began as early as 2016 with the “White Paper on Work 4.0” published by the Federal Ministry of Labor and Social Affairs [2].

The actual use of AI has changed with the advent of large language models (LLMs) such as ChatGPT. The chatbot from the US software company OpenAI passed the one-million-user mark after just five days [3] and, according to the company's own statements, is now used by over 400 million people every week. As a result, the topic has arrived in German companies, and more and more employees regularly encounter AI systems in their everyday work.

Generative AI (GenAI) such as ChatGPT has enormous potential to bring about social and economic change [4]. The German government addressed AI in its current coalition agreement, emphasizing not only its potential but also the need for (social partnership-based) regulation [5]. Current OECD figures point to a lack of the AI skills [6] necessary in business practice for the widespread, trustworthy use of AI at work. In order to truly leverage the opportunities offered by AI, clear rules are needed on how employees can participate, gain qualifications, and have their interests taken into account after AI is introduced.

Early white papers already recognized that cooperation between company management and employees (or their representatives) is essential for the successful and humane introduction of AI in companies [7, 8]. This insight was later translated into concrete guidelines for practitioners [9, 10]. The AI Regulation (EU AI Act) adopted by the European Union in May 2024 created a legal framework that requires the regulated and responsible use of AI in companies. At the same time, it reinforced the importance of a social partnership approach, building on established structures in Germany.

Due to the path dependency of past transformations [11], trade unions, works councils, and employers play a central role in concrete implementation. Section 77 of the Works Constitution Act (BetrVG) permits the parties within a company to conclude works agreements. Such regulations are not only necessary to compensate for structural power imbalances between employers and employees, but also create legal certainty for companies, prevent unilateral decisions, increase acceptance among employees, and thus contribute to the successful introduction of AI systems.

Against this backdrop, this article introduces the HUMAINE MBV KI (model works agreement on AI), which was developed as a practical tool for the social partnership-based regulation of AI. The MBV KI is an extension of the RBV KI, which was developed in HUMAINE as a collaboration between the Joint Working Group RUB/IGM and the project partner Doncasters Precision Castings Bochum GmbH (DPC) and tested in a number of multiplier cases.

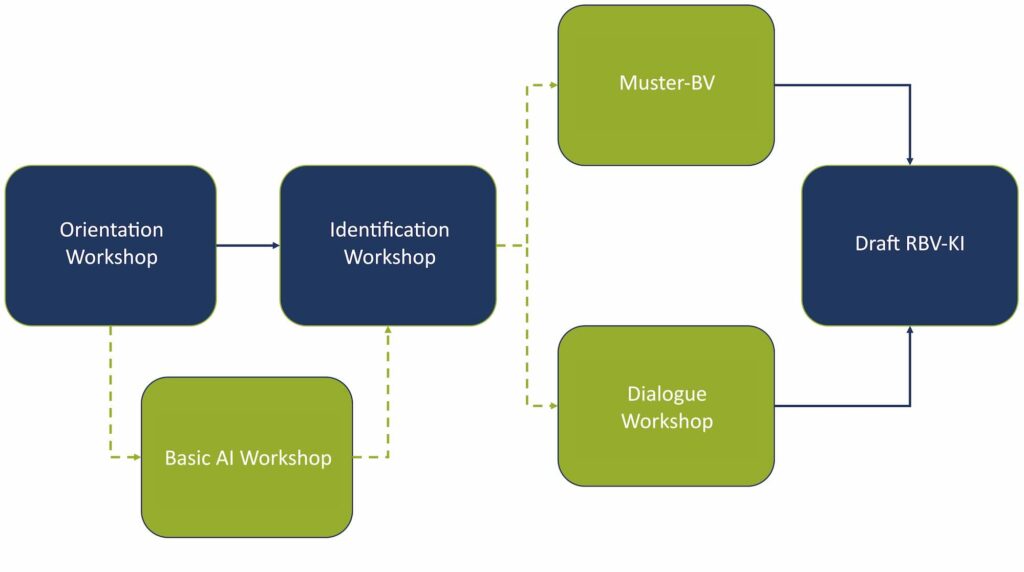

Methodologically, this process was supported by the co-determination dialogues of the HUMAINE toolbox (Fig. 1; see Wannöffel et al. in this issue). The project uses a transfer research methodology [12, 13] in order to take into account the fact that operational change processes are path-dependent and that successful implementation can only be achieved with targeted further training that accounts for the experiences of the actors involved.

Employee participation in AI based on the BetrVG

According to the BetrVG, the works council plays an intermediary role in shaping the structural conflict of interest between employer and employees. The description of the works council as a boundary institution [14] also applies in the context of the introduction of AI (Fig. 2).

Works councils are confronted with conflicts with regard to the efficiency and rationalization potential associated with the use of AI. Even if the works council wants to protect the interests and, ultimately, the jobs of employees, it must also take the profitability of the company into account. Section 2 (1) of the Works Constitution Act (BetrVG) obliges both sides to work together for the benefit of the employees and the company. This creates a mandate for both parties [15, 16].

Looking at the works council’s experience in shaping digital transformation processes within companies to date, three key findings can be applied to the implementation of AI:

- Operational change processes are incremental rather than disruptive and revolutionary [10].

- Operational change processes follow operational path dependencies in their design [17].

- An accompanying and framework-setting labor policy is important for the design of these transformation processes [18].

In the latest amendment to the Works Constitution Act (BetrVG), the 2021 Works Council Modernization Act, the scope of action and discretion of works councils was explicitly expanded to include the topic of AI. Against the backdrop of the partial enactment of the EU AI Act in February 2025, the options for action and organization available to works councils are becoming an obligation to act that applies to both parties in the workplace: according to Art. 4, they are obliged to demonstrate the competencies of employees with regard to the AI systems and applications used in the workplace.

This obligation represents an important lever for co-determination and directly links European legislation to the reality of the workplace in Germany: Sections 96-98 of the Works Constitution Act (BetrVG) grant the works council extensive rights to information, consultation, and co-determination with regard to the qualification of employees. In view of this change, the parties in the workplace must take action. However, studies show that around half of employees do not feel sufficiently informed about AI [19] and only just under a fifth are considered by their superiors to be sufficiently qualified for basic work with AI [20].

![Figure 2: Works council as a boundary institution (based on [14]).](https://industry-science.com/wp-content/uploads/2026/02/Ranft_I4S-26-1_Figure-2-1024x618.jpeg)

The works council has co-determination rights in the event of fundamental changes to the company’s organization (Section 111 (1) No. 4 BetrVG) or the introduction of new working methods and production processes (Section 111 (1) No. 5 BetrVG). These legal rights must also be examined in the context of AI implementation [21]. The same applies to an application-specific assessment of the potential risk of AI controlling employee behavior and/or performance (Section 87 (1) No. 6 BetrVG) [22]. If this is the case, the introduction of AI is subject to the works council’s co-determination rights [21].

Furthermore, other standards safeguard the role of the works council: According to Section 90 (1) No. 3 BetrVG, the works council must be informed in good time about plans to introduce AI. According to Section 91 BetrVG, there is a right of co-determination if planned changes contradict ergonomic findings. In conjunction with Section 87 (1) No. 7 BetrVG and Section 5 (1) ArbSchG, there are also enforceable co-determination rights in occupational health and safety—for example, in the ergonomic design of workplaces.

In addition to the BetrVG, there are other links between German labor law and European regulation, such as the EU AI Act or the General Data Protection Regulation (GDPR) [21, 23]. To implement the resulting obligations at the operational level, the parties within the company can conclude agreements on the basis of Section 77 BetrVG. From a transaction cost theory perspective, such agreements provide the basis for tapping the potential associated with AI and safeguarding the interests of the parties at the company.

Specific contents of the MBV KI

With HUMAINE MBV KI, RUB/IGM has collaborated with DPC to develop an action support tool that companies can use to develop their own works agreement (WA) for a human-centered AI planning, implementation, and application process. As DPC is a former subsidiary of Thyssen Guss AG, the company has its roots in coal and steel co-determination. This means that the works council—in line with path dependency and the historical relevance of past transformation processes [11]—plays a significant role in the solidarity-based design of these very same transformation processes.

These circumstances are just as unique as the fact that the intention to introduce AI was proactively initiated by the works council. A works council that acts in this way has a positive influence on the implementation of operational transformation processes but requires specific qualifications [24].

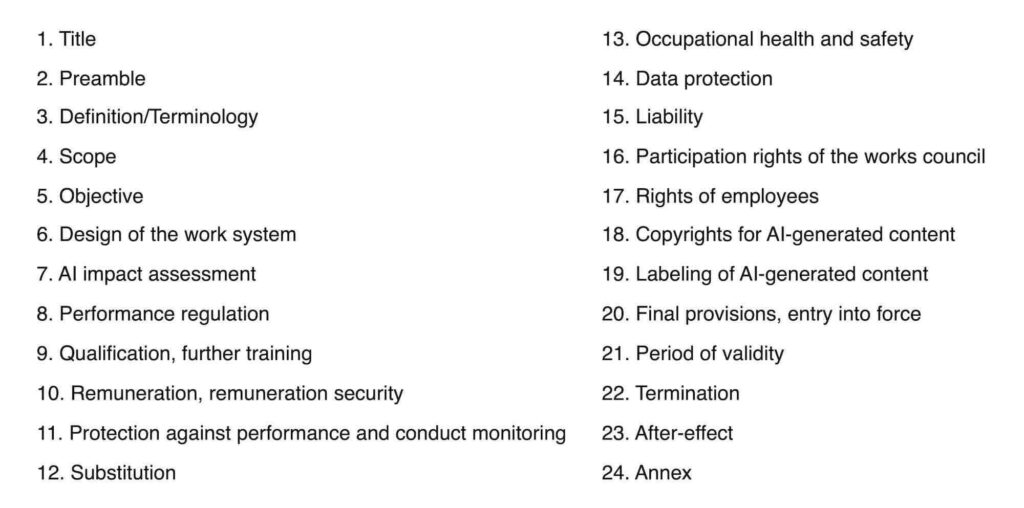

Through co-determination dialogues (see Wannöffel et al. in this issue), the contents of an AI WA were developed in collaboration with the works council of DPC. Following this process, this specific AI WA was abstracted into an initial model form and further tested and evaluated. Through collaboration with Gebr. Eickhoff Maschinenfabrik und Eisengießerei GmbH and IHK-Gesellschaft für Informationsverarbeitung mbH (IHK-GfI), 24 paragraphs were created in sample form that can be used to create company-specific WAs (Fig. 3).

The paragraphs developed in this way do not claim to be exhaustive. An AI WA may vary in terms of the scope and granularity of its content and the number and content of its paragraphs due to existing company regulations, such as WAs on IT or EDP systems.

With regard to the eight criteria for human-centered AI [25], the following criteria can be addressed using an AI policy:

- Trustworthiness, confidentiality, and ethics

- Human action and augmentation

- Avoiding job losses

Paragraph in the WA on AI impact assessment

All AI processes in the company are monitored from the outset by the works council and, if necessary, by experts, whereby alternatives to the planned use of AI must also be examined. In order to institutionalize this continuous exchange between the employer and the works council, an AI Commission is established, which meets as required. This commission is composed of two representatives each from the works council and the employer, as well as the data protection officer. As soon as the purchase of an AI application is finalized, the employer provides the AI Commission with all relevant documents relating to the AI application.

Once the AI Commission has received these documents, a test or pilot phase of the AI application can begin. If this trial phase is successful, the commissioning department and the client must assess the specific risk categorization in accordance with the EU AI Act on the basis of the AI WA annex. This risk assessment is evaluated by the AI Commission and the works council. In the course of this, the AI Commission decides whether and to what extent further regulation is necessary and whether this must be addressed in an application-specific WA. This applies in particular to AI applications that are classified in risk category 3 (high risk) of the EU AI Act.
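The sequence described above (handover of documents, pilot phase, risk categorization, decision by the AI Commission) can be illustrated as a simple step check. The following is only a sketch for orientation and not part of the MBV KI: all class and function names are hypothetical, and the numbering of the risk tiers is an assumption derived from the text, which names only category 3 (high risk).

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class RiskCategory(IntEnum):
    """Assumed numbering of risk tiers; the article only names category 3 (high risk)."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


@dataclass
class AIApplication:
    """Hypothetical record of where an AI application stands in the process."""
    name: str
    documents_received: bool = False   # employer has handed the documents to the AI Commission
    pilot_successful: bool = False     # outcome of the test or pilot phase
    risk_category: Optional[RiskCategory] = None  # assessed only after a successful pilot


def next_step(app: AIApplication) -> str:
    """Return the next step of the simplified impact-assessment process."""
    if not app.documents_received:
        return "provide documents to AI Commission"
    if not app.pilot_successful:
        return "run pilot phase"
    if app.risk_category is None:
        return "assess risk category"
    if app.risk_category == RiskCategory.UNACCEPTABLE:
        # Assumption: this tier stands for practices that may not be used at all.
        return "do not introduce"
    if app.risk_category == RiskCategory.HIGH:
        # The article stresses application-specific regulation for this category.
        return "negotiate application-specific WA"
    return "AI Commission decides on further regulation"
```

In practice the decision is a negotiated one, so a sketch like this is at most suitable as a checklist that shows which step is still open for a given application.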

In this context, transparency regarding the use of AI must also be established for employees. If potential changes to activities and work processes are known, these must be clearly identified. In addition, clear objectives and evaluation criteria must be defined before the pilot phase begins, and the results measured against them must be used for the final risk assessment. Before an AI application is integrated into regular work and business processes, all necessary documents, attachments, and any application-specific WA must be signed by the operating parties. The experience of the project partner IHK-GfI illustrates how important a balanced approach is when putting the AI Commission described in this paragraph into practice:

“The establishment of an AI commission presents both opportunities and challenges. As stipulated in the paragraph, the commission should meet ‘as required.’ The extent of this need can only be guessed at this point. We are caught between the conflicting priorities of innovation and security risks:

On the one hand, it is important for us, as it is for many commercial enterprises, to be able to react quickly and adapt. The short innovation cycles in AI put pressure on companies. In the area of software-as-a-service (SaaS) in particular, suppliers often introduce AI components as part of continuous deployments, to which companies then have to react ex post. In addition, AI has now become a commodity; providers are increasingly trying to integrate AI into almost every product or service.

On the other hand, in addition to the requirements for response speeds, there is the security and integrity of our own employees and infrastructure. The AI Commission’s task is therefore not an easy one: our challenge will be to find an acceptable balance between speed in decision-making and implementation cycles and security for our employees, our data, and our infrastructure.” (Mathias Preuß, IHK-GfI)

Qualification paragraph in the MBV KI

The qualifications required for working with the proposed AI systems are determined in advance on the basis of the RBV KI annex. Accordingly, the employees affected must be comprehensively informed about the technical or organizational changes and new work processes or methods before the AI is introduced. In this context, employees must be trained both in the specific application and in general on the topic of AI. To ensure the quality of work, a needs-based schedule for refresher and training measures for employees, works council members, and the AI Commission will be established. These measures will take place exclusively during working hours and with continued payment of regular wages.

In the event of foreseeable changes in activities as a result of the use of AI, retraining or further training shall be carried out in order to maintain the employability of the employees affected. In addition, works council members and managers shall be trained so that they can address any fears and concerns that employees may have. The AI Commission can also support employers in developing a documentation system for verifying AI competencies in accordance with Art. 4 of the EU AI Act. The project partner IHK-GfI also found that training issues play a key role in the successful use of AI in the workplace:

“There is no question that training and further education on the functioning and application of AI is necessary. It is important to meet all employees where they are individually and to empower them in the long term to use the technologies correctly and safely and to critically ensure the quality of the results. For us, the key point of the paragraph lies in the changing framework conditions for cooperation. Workplaces and work processes for all employees in the company are potentially changing.

This opportunity gives us additional options for addressing demographic change, in particular generational change and the increasing shortage of skilled workers. At the same time, training and continuing education are necessary aspects of change and transformation management and thus essential for organizational development.” (Mathias Preuß, IHK-GfI)

AI change processes often proceed incrementally

Regulating the use of AI in companies with works councils requires the systematic linking of European and national legal requirements, in particular the EU AI Act, the GDPR, and the BetrVG. The MBV KI developed in the HUMAINE project is a practice-oriented tool that takes legal requirements into account while promoting the human-centered design of AI in the workplace.

Empirical findings from the development and testing of MBV KI show that operational change processes in the context of AI are often incremental, build on existing structures and are only effective to a limited extent without accompanying training measures. The obligation to demonstrate AI competencies in accordance with Art. 4 of the EU AI Act opens up new design options, which can be systematically exploited by the MBV KI.

The MBV KI thus offers a proven orientation framework that combines legal certainty, ethically sound, human-centered guidelines, and co-determination into an integrated design approach. It promotes employee acceptance of and trust in AI systems, ensures their employability, and contributes to the sustainable design of digital transformation in the spirit of a social partnership-based working relationship. Its transferability to different operational contexts, even beyond existing works council structures, underscores its innovative and forward-looking character, essential in the age of AI.

This research and development project is funded by the Federal Ministry of Research, Technology, and Space (BMFTR) under funding code (FKZ) 02L19C200 and supervised by the Project Management Agency Karlsruhe (PTKA). The authors are responsible for the content of this publication.

Bibliography

[1] Giering, O.: Künstliche Intelligenz und Arbeit: Betrachtungen zwischen Prognose und betrieblicher Realität. In: Zeitschrift für Arbeitswissenschaft 76 (2022) 1, pp. 50-64. DOI: https://doi.org/10.1007/s41449-021-00289-0.

[2] Federal Ministry of Labor and Social Affairs: Weißbuch Arbeiten 4.0. Berlin 2016.

[3] Makhov, V.: ChatGPT-Statistiken enthüllt – Vom Benutzerwachstum bis zur wirtschaftlichen Auswirkung. URL: https://doit.software/en/blog/chatgpt-statistiken#screen4, accessed 01.07.2025.

[4] Engels, B.; Scheufen, M.; Schmitz, E.: Künstliche Intelligenz als Wettbewerbsfaktor für die deutsche Wirtschaft (IW Report 33/2025).

[5] CDU, CSU & SPD: Verantwortung für Deutschland. Koalitionsvertrag zwischen CDU, CSU und SPD, 21. Legislaturperiode. Berlin Munich 2025.

[6] OECD: OECD-Bericht zu Künstlicher Intelligenz in Deutschland, OECD Publishing, Paris 2024.

[7] Stowasser, S.; Suchy, O.; Huchler, N.; Müller, N.; Peissner, M.; et al.: Einführung von KI-Systemen in Unternehmen: Gestaltungsansätze für das Change-Management (2020).

[8] Huchler, N.; Adolph, L.; André, E.; Bauer, W.; Bender, N.; et al. (eds.): Kriterien für die menschengerechte Gestaltung der Mensch-Maschine-Interaktion bei Lernenden Systemen – Whitepaper aus der Plattform Lernende Systeme (2020).

[9] Schröder, L.; Höfers, P.: Praxishandbuch Künstliche Intelligenz. Handlungsanleitungen, Praxistipps, Prüffragen, Checklisten. Frankfurt am Main 2022.

[10] ver.di: Digitalisierung und Künstliche Intelligenz. Gute Arbeit 2025. Berlin 2024.

[11] Hirsch-Kreinsen, H.: Digitale Transformation von Arbeit. Entwicklungstrends und Gestaltungsansätze. Stuttgart 2020.

[12] Schäfer, M.; Wannöffel, M.; Virgillito, A.: Transferforschung – ein methodisches Konzept für die Analyse der Industriellen Beziehungen. In: Industrielle Beziehungen 2 (2020), pp. 127-149.

[13] Wannöffel, M.: Transferforschung im Feld der Mitbestimmung. In: Wannöffel, M.; Niewerth, C.; Hoose, F.; Urban, H.-J. (eds.): Mitbestimmung und Partizipation 2030: Demokratische Perspektiven auf Arbeit und Beschäftigung. Baden-Baden 2025, pp. 475-498.

[14] Fürstenberg, F.: Der Betriebsrat. Strukturanalyse einer Grenzinstitution. In: Kölner Zeitschrift für Soziologie und Sozialpsychologie 10 (1958), pp. 418-429.

[15] Gerst, D.: Autonome Systeme und Künstliche Intelligenz: Herausforderungen für die Arbeitssystemgestaltung. In: Hirsch-Kreinsen, H.; Karacic, A. (eds.): Autonome Systeme und Arbeit. Perspektiven, Herausforderungen und Grenzen der Künstlichen Intelligenz in der Arbeitswelt. Bielefeld 2019, pp. 101-138.

[16] Niehues, S.; Sandrock, S.; Shahinfar, F.; Schüth, N. J.; Conrad, R.: Gestaltung eines KI-Arbeitssystems. In: Stowasser, S. (ed.): Künstliche Intelligenz (KI) und Arbeit. Leitfaden zur soziotechnischen Gestaltung von KI-Systemen. Berlin Heidelberg 2023, pp. 141-166.

[17] Haipeter, T.; Hoose, F.; Rosenbohm, S.: Arbeitspolitik in digitalen Zeiten. Entwicklungslinien einer nachhaltigen Regulierung und Gestaltung von Arbeit. Baden-Baden 2021.

[18] Kuhlmann, M.: Digitalisierung und Arbeit. Eine Zwischenbilanz als Einleitung. In: WSI Mitteilungen 76 (2023) 5, pp. 331-336. DOI: https://doi.org/10.5771/0342-300X-2023-5-331.

[19] Pfeiffer, S.: (Generative) Künstliche Intelligenz (KI) als Kollegin? Gestaltung und Mitbestimmung aus Sicht der Beschäftigten. In: Wannöffel, M.; Niewerth, C.; Hoose, F.; Urban, H.-J. (eds.): Mitbestimmung und Partizipation 2030: Demokratische Perspektiven auf Arbeit und Beschäftigung. Baden-Baden 2025, pp. 247-264.

[20] Rampelt, F.; Klier, J.; Kirchherr, J.; Ruppert, R.: KI-Kompetenzen in Deutschen Unternehmen. Schlüssel zu einer Jahrhundertchance für Deutschland. Essen 2025.

[21] Wedde, P.: Künstliche Intelligenz und Mitbestimmung: Möglichkeiten und Grenzen. In: ver.di (ed.): Digitalisierung und Künstliche Intelligenz – Gute Arbeit 2025. Berlin 2024, pp. 28-39.

[22] Hoppe, M.; Suriano, G.: Das humAIn work.lab: Erkenntnisse aus betrieblichen Praxislaboratorien zur menschenzentrierten KI-Gestaltung. In: ver.di (ed.): Digitalisierung und Künstliche Intelligenz – Gute Arbeit 2025. Berlin 2024, pp. 78-89.

[23] Von dem Bussche, A. F.: Datenschutz 4.0. In: Frenz, W. (ed.): Handbuch Industrie 4.0: Recht, Technik, Gesellschaft. Berlin Heidelberg 2020, pp. 155-180.

[24] Niewerth, C.; Massolle, J.: Betriebliche Interessenvertretung in der doppelten Transformation – Einblicke in neue Gestaltungsformen betriebsrätlicher Arbeit. Mitbestimmungspraxis No. 36. Düsseldorf 2020.

[25] Wilkens, U.; Lupp, D.; Langholf, V.: Configurations of human-centered AI at work: seven actor-structure engagements in organizations. In: Front. Artif. Intell. 7 (2023). DOI: https://doi.org/10.3389/frai.2023.1272159.
