AI Skills for Responsible Use

Realistic learning environments, critical thinking, and role design in teams

Journal: Industry 4.0 Science

Issue: Volume 42, Edition 1, Pages 100-107

Open Access: https://doi.org/10.30844/I4SE.26.1.92



The ongoing integration of artificial intelligence (AI) into operational work processes presents companies and employees with new challenges while also opening up a wide range of opportunities to increase efficiency and innovation [1]. As the use of generative AI grows rapidly in many companies, the question of how employees can build professional competence in working with AI becomes pivotal if AI systems are to support human problem-solving [2].

In particular, developing the skills to integrate AI systems into work processes in a targeted, critical, and reflective manner is a key factor for the successful use of AI technologies [3]. Initial studies on medium- and long-term changes in AI-supported work indicate that productivity gains are partly accompanied by reduced critical thinking [4].

Existing approaches to developing AI competence mostly focus on individual declarative knowledge about AI (how it works, legal frameworks) and individual procedural knowledge (prompting, critical examination of AI outputs). Approaches for the long-term improvement of work results and problem-solving competence at the system level are still in their infancy [5]. However, these are necessary in order to address the challenges of applied AI ethics that revolve around augmentation through AI [6, 7].

Against this background, this article examines the following research question: What systemic effects of AI integration in work processes can be identified in a workplace-oriented simulation setting? This analysis seeks to determine the training strategies most suitable for cultivating AI skills that promote the responsible application of AI in work environments. The article provides initial evidence from four test runs in a workplace-like simulation environment and thus offers a qualitative-exploratory starting point for future investigations.

Theoretical background

Following the advances in generative AI since 2022, the technology is now in high-profile and widespread use, with adoption rates of approximately 25% in organizations and a continuing upward trend [8]. While a comprehensive literature review has already documented the productivity-enhancing effect of generative AI [9], its authors make clear that there is a considerable need to investigate the skills required for AI use and to develop innovative training approaches. Such skills and approaches not only help to increase productivity but also play an important role in the literature on human-centered AI use and meaningful work [6, 7].

AI competence encompasses various areas of human activity. On the one hand, the literature refers to skills in using AI in a goal-oriented manner, operating it effectively, tailoring AI models to specific applications, and critically evaluating AI-generated outputs. On the other hand, it names skills in ethics and in critical thinking for problem solving and creativity [3, 10]. The latter relate competence not to operating the technology but to a goal-oriented, well-founded weighing of the opportunities and risks of AI use in a specific application context.

Competence in goal-oriented use and effective operation can plausibly lead to short-term efficiency gains. For the long-term success of AI in work processes, however, ethical considerations and critical reflection are also of great importance. This is underlined by recent studies showing that frequent AI use is associated with reduced critical thinking skills [4], which has been attributed to employees delegating cognitive tasks to AI (cognitive offloading) [11].

High levels of competence in the operation and use of AI can therefore conflict with skills in ethical reflection and critical thinking. Consequently, both areas are important for operational competence development in the context of AI use in work processes.

While research into the development of AI skills in organizations is still in its infancy, individual studies have presented structured programs. These combine technical instruction and ethical reflection and take various formats [12, 13].

Although a certain degree of transferability has been established [14], such approaches are not geared toward the internal effects of AI use and deal only with operating AI systems and reflecting on them. This study focuses on developing AI competence in a training setting that allows teams to experience both the operation of AI and critically reflective problem solving while using it.

Methodology

The study is conducted in the Human-AI Role Development Lab, which was developed to enable participants to experience and reflect on AI interaction within a training setting. It is a workplace-oriented simulation lab in which groups learn a work process and carry it out across five phases.

In the simulation, participants take on the role of an analysis team for a fictional healthcare provider with a data-based business model. The premise is that customers commission the company to provide independent health recommendations based on a wide range of data (ECG data, health data from fitness trackers, patient information such as medical reports, previous illnesses, anamnestic information, etc.). AI support is provided by two dedicated assistants that evaluate ECG data and health data. The AI mode can be activated in the evaluation software and offers visual and textual classification.

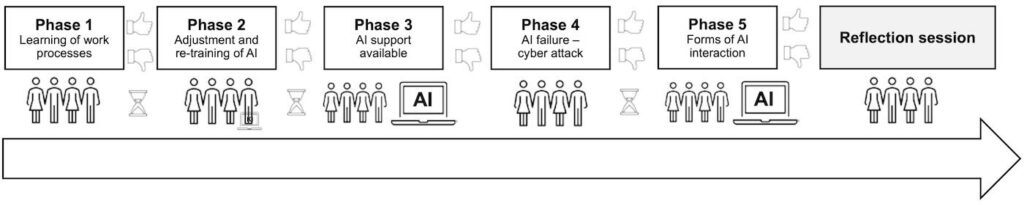

Each group goes through the phases sequentially. In phase 1, the team learns the work process without AI support and solves the first customer case. In phase 2, it solves another customer case while simultaneously providing feedback to the two newly introduced AI systems (labeling). In phase 3, the team uses the two AI assistants and gains initial experience with AI support.

In phase 4, a hacker attack interrupts the usual workflow, so the team must fall back on the original manual workflow to process the customer case while also working to reactivate the systems. In phase 5, the team processes further customer cases with AI support and establishes new routines. The process is shown in Figure 1.

After completing all five phases, participants leave the simulation context and a reflection round follows. This is central to the learning process and is also intended to prepare the transfer of the experience into the teams’ real work processes.

Data collection

Data collection took place in April and May 2025 with four test groups. Two groups had four members each and two had seven members each. Two groups consisted of university employees, one of employees from a company in the healthcare industry, and one of master’s students. In all four test runs, two to three members of the author team were present to resolve unexpected technical or organizational problems. Each of these members kept an observation log, which was compared with those of the other members and supplemented as necessary.

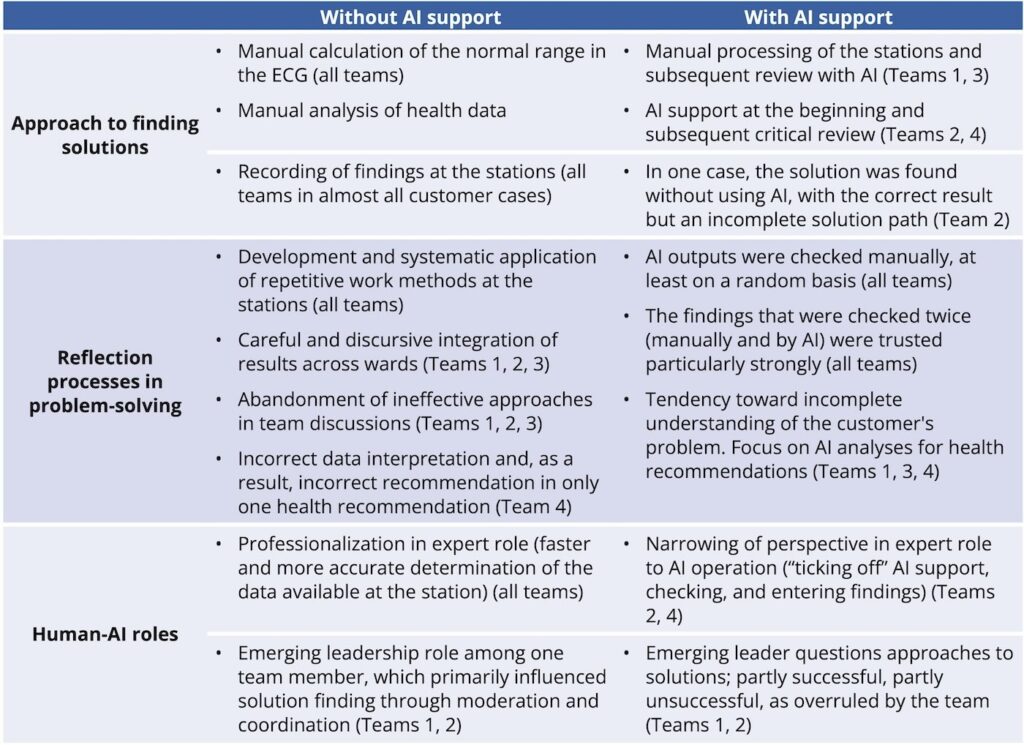

The observation log recorded the work results and working methods in the phases with and without AI. The work results comprised the time required for each phase and the rating of the health recommendation (good, average, or poor, analogous to positive, mixed, or negative customer feedback). To examine working methods, codes were noted for three facets: a) the approach to finding solutions, b) reflection processes in problem solving, and c) the interpretation of roles for team members and AI. The three facets were derived from considerations of which aspects of working methods are observable in the given simulation environment.
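The structure of the observation log can be made concrete with a small data model. The following Python sketch is a hypothetical illustration, not the authors' actual instrument: the names `Rating`, `Facet`, `ObservationEntry`, and `AI_PHASES` are assumptions, and the fields mirror only what the paragraph above lists (time, rating, and free-text codes for the three facets), with AI support tied to phases 3 and 5 as described in the methodology.

```python
from dataclasses import dataclass, field
from enum import Enum


class Rating(Enum):
    """Rating scale for a health recommendation (best to worst)."""
    GOOD = "good"        # analogous to positive customer feedback
    AVERAGE = "average"  # analogous to mixed customer feedback
    POOR = "poor"        # analogous to negative customer feedback


class Facet(Enum):
    """The three observed facets of working methods."""
    SOLUTION_FINDING = "a) approach to finding solutions"
    REFLECTION = "b) reflection processes in problem solving"
    ROLES = "c) interpretation of roles for team members and AI"


AI_PHASES = {3, 5}  # phases in which the AI assistants are in use


@dataclass
class ObservationEntry:
    """Hypothetical log entry for one customer case handled by one team."""
    team: int
    phase: int             # simulation phase, 1 to 5
    minutes: float         # time required for the customer case
    rating: Rating         # quality of the health recommendation
    codes: dict[Facet, list[str]] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # The simulation has exactly five phases.
        if not 1 <= self.phase <= 5:
            raise ValueError(f"phase must be between 1 and 5, got {self.phase}")

    @property
    def ai_supported(self) -> bool:
        """True for the phases with AI support (3 and 5)."""
        return self.phase in AI_PHASES

    def add_code(self, facet: Facet, code: str) -> None:
        """Append an observer's code under the given facet."""
        self.codes.setdefault(facet, []).append(code)
```

Keeping each case's quantitative result and its qualitative codes in one record would make it straightforward to group codes by AI condition before aggregating them into themes.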

Data analysis

The aim of the data analysis is to systematically record the effects of AI integration on work results and working methods during the simulation. The observation data were analyzed using an inductive, analytical-interpretative approach [15], examining how work results and working methods differ with and without AI. To this end, the codes of all four teams within the facets of solution finding, reflection in problem solving, and human-AI roles were aggregated into recurring themes, and changes associated with AI integration were identified.

Results

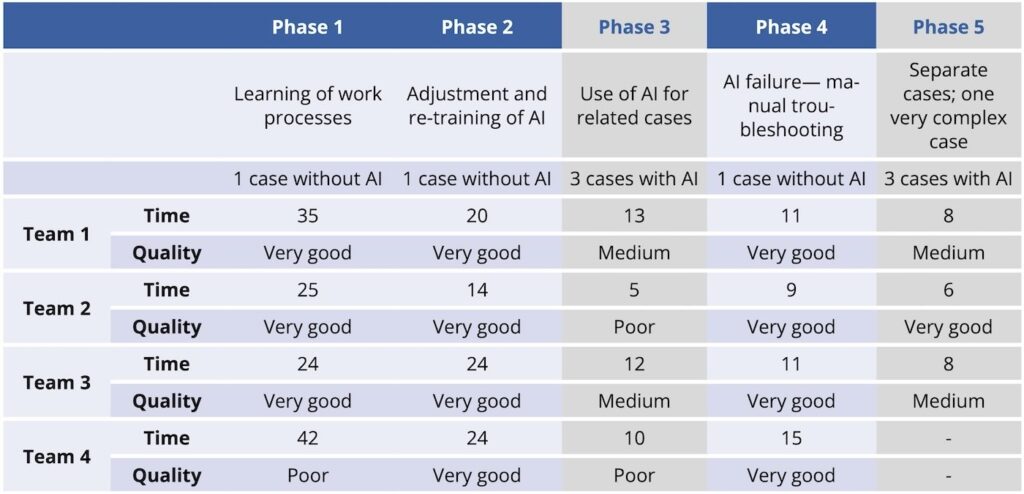

First, the development of the work results during the simulation is examined. The time required by the test subjects to analyze a case and send health recommendations decreases over the course of the phases. The average time for a customer case without AI support is 32 minutes in phase 1, 21 minutes in phase 2, and 11 minutes in phase 4. The average time with AI support is 10 minutes in phase 3 and 7 minutes in phase 5.

The quality also decreases moderately over the course of the phases. In the phases without AI, the best rating was given in eleven out of twelve cases; with AI, it was given in only one out of seven cases. Figure 2 provides an overview of the findings.

Note: The figure shows the time required per customer case. In phase 4, the time required to restore the system after the hacker attack was deducted. Phases involving AI use are highlighted in gray. Teams 1 and 2 consisted of university employees, team 3 of employees from a company in the healthcare industry, and team 4 of students.
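The figures reported in the results can be condensed into a few ratios. The following Python sketch uses only the average times and rating counts stated in the text; the helper names `share` and `relative_reduction` are hypothetical, and the comparison is merely illustrative, since learning effects and the small number of cases limit its interpretation.

```python
# Average minutes per customer case as reported in the text (phase -> minutes).
AVG_MINUTES = {1: 32, 2: 21, 3: 10, 4: 11, 5: 7}
AI_PHASES = {3, 5}  # phases in which the AI assistants were available

# Cases that received the best rating, as reported: (best-rated, total).
BEST_WITHOUT_AI = (11, 12)
BEST_WITH_AI = (1, 7)


def share(count_total: tuple[int, int]) -> float:
    """Fraction of cases that received the best rating."""
    count, total = count_total
    return count / total


def relative_reduction(before: float, after: float) -> float:
    """Relative time saving from `before` to `after` (0.25 means 25% less time)."""
    return (before - after) / before


if __name__ == "__main__":
    for phase, minutes in AVG_MINUTES.items():
        mode = "AI" if phase in AI_PHASES else "manual"
        print(f"phase {phase} ({mode}): {minutes} min")
    print(f"best rating without AI: {share(BEST_WITHOUT_AI):.0%}")
    print(f"best rating with AI:    {share(BEST_WITH_AI):.0%}")
    # Phase 1 (manual, process still being learned) vs. phase 5 (AI, routines established)
    print(f"phase 1 -> 5: {relative_reduction(AVG_MINUTES[1], AVG_MINUTES[5]):.0%} less time")
    # Phase 4 (manual, process known) vs. phase 5 (AI, process known)
    print(f"phase 4 -> 5: {relative_reduction(AVG_MINUTES[4], AVG_MINUTES[5]):.0%} less time")
```

Run as a script, it reports a best-rating share of 92% without AI versus 14% with AI, and a 78% time reduction from phase 1 to phase 5. The phase 4 to phase 5 comparison (about 36% less time) may be the fairer speed comparison, because both phases come after the teams had learned the process.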

The following section presents observations on working methods with and without AI support for the facets of solution finding, reflection during problem solving, and human-AI roles (Fig. 3).

Approach to finding solutions

Solutions were found in a similar manner with and without AI support. The individual stations were worked through either sequentially or in parallel, and the findings were recorded. Health recommendations were then derived from the findings in a group discussion. AI support was used in different ways: two teams used AI to verify findings they had previously obtained manually, while two teams relied directly on AI and then verified the accuracy of the AI analysis, mostly through spot checks.

Reflection processes in problem solving

Occasionally, solutions were proposed that turned out to be incorrect in the joint discussion and were therefore rejected. Without AI support, the teams understood the case well enough in eleven out of twelve cases for the health recommendation to receive the best rating (“good”). With AI support, the teams were less successful in understanding the cases as a whole.

In both phase 3 and phase 5, the cases were constructed so that the ECG or health data showed abnormalities that, taking into account the information from the other stations, were not problematic for the customer’s state of health.

Nevertheless, three out of four teams interpreted the abnormalities as indicative of illness, in some cases even though individual team members questioned this interpretation. In these cases, the discussions focused primarily on the AI analyses, possibly because these double-checked findings were given particular credence. Unlike in the phases without AI support, the teams were thus unable to rule out inadequate solutions in the joint discussion.

Human-AI roles

Expert roles emerged in all teams (expert for ECG, expert for health data, etc.), with at least two people working on the same station in each case. Once AI was in use, the experts tended to limit themselves to operating the station and checking the AI results, and reflected less than before on the relevance of the results for the customer case. Experts were hardly observed using their expert knowledge to improve the team’s understanding of the case anymore.

In two teams, two people took on leadership roles during the simulation and acted as moderators and questioners in the problem-solving process. They were only partially successful in this, especially when the respective experts backed their solutions with AI-assisted analyses. All teams regarded AI support itself as a tool. At the same time, AI-supported results tended to be treated as particularly relevant or particularly reliable.

AI integration changes working methods and work results

The results of the four test runs in the Human-AI Role Development Lab show that, over the course of the simulation, the teams make progress in integrating AI into their work processes: they critically examine the AI outputs and work faster with AI support.

However, they are unable to improve the quality of the key work results (the health recommendations); in fact, there is a slight downward trend. This is related to a change in the teams’ problem-solving behavior that accompanies working with AI support: the teams receive largely reliable findings but are unable to put them into context in the way they did without AI support.

The results tie in with existing literature reporting negative effects of AI use on critical thinking [4, 11]. The present study indicates that such effects can be observed within a few hours in a simulation setting. At the same time, the quality feedback and the guided reflection round made this effect visible to the team members themselves. It therefore stands to reason that an experience-based, workplace-oriented simulation approach can address both facets of AI competence: those that relate to operating AI and those that relate to critically reflective problem solving with and without AI.

The study does not allow for generalizable conclusions. On the one hand, this is due to the very small number of test groups. On the other hand, numerous alternative explanations for the above results are conceivable. For the decline in quality, these include fatigue effects or reduced effort because poor results had no real consequences; for the gain in speed, they include learning effects. It also remains unclear to what extent the simulation affects students and working professionals differently.

The diversity of the test groups (students, scientists, a team from the healthcare industry) only made it possible to confirm the basic plausibility of the scenario across different professional backgrounds. Despite these limitations, the study suggests an approach to developing AI competence whose exact effects need to be investigated in more comprehensive studies.

The present results indicate that developing AI competence for collective problem solving requires an approach that goes beyond building technical understanding. Responsible use of AI therefore includes, in particular, anticipating how AI use changes thought processes in problem solving and taking appropriate countermeasures. Besides the Human-AI Role Development Lab presented here, other experience-based approaches that can be integrated into everyday operational practice are conceivable.

This article was written as part of the HUMAINE research project, which was funded by the BMFTR funding program “Future of Value Creation – Research on Production, Services, and Work” (funding code: 02L19C200).

Bibliography

[1] Bankins, S.; Ocampo, A. C.; Marrone, M.; Restubog, S. L. D.; Woo, S. E.: A multilevel review of artificial intelligence in organizations: Implications for organizational behavior research and practice. In: Journal of Organizational Behavior 45 (2024) 2, pp. 159–182.

[2] Raisch, S.; Fomina, K.: Combining human and artificial intelligence: Hybrid problem-solving in organizations. In: Academy of Management Review 50 (2025) 2, pp. 441–464.

[3] Annapureddy, R.; Fornaroli, A.; Gatica-Perez, D.: Generative AI literacy: Twelve defining competencies. In: Digital Government: Research and Practice 6 (2025) 1, pp. 1–21.

[4] Lee, H.-P.; Sarkar, A.; Tankelevitch, L.; Drosos, I.; Rintel, S. et al.: The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers. In: Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery (2025), Article 1121, pp. 1–22.

[5] Li, H.; Kim, S.: Developing AI literacy in HRD: Competencies, approaches, and implications. In: Human Resource Development International 27 (2024) 3, pp. 345–366.

[6] Bankins, S.; Formosa, P.: The ethical implications of artificial intelligence (AI) for meaningful work. In: Journal of Business Ethics 185 (2023) 4, pp. 725–740.

[7] Wilkens, U.; Lupp, D.; Langholf, V.: Configurations of human-centered AI at work: seven actor-structure engagements in organizations. In: Frontiers in Artificial Intelligence 6 (2023), 1272159. DOI: https://doi.org/10.3389/frai.2023.1272159.

[8] Bick, A.; Blandin, A.; Deming, D. J.: The rapid adoption of generative AI. NBER Working Paper No. w32966. National Bureau of Economic Research 2024.

[9] Al Naqbi, H.; Bahroun, Z.; Ahmed, V.: Enhancing work productivity through generative artificial intelligence: A comprehensive literature review. In: Sustainability 16 (2024) 3, 1166.

[10] Rampelt, F.; Ruppert, R.; Schleiss, J.; Mah, D. K.; Bata, K.; Egloffstein, M.: How do AI educators use open educational resources?: A cross-sectoral case study on OER for AI education. In: Open Praxis 17 (2025) 1, pp. 46–63.

[11] Gerlich, M.: AI tools in society: Impacts on cognitive offloading and the future of critical thinking. In: Societies 15 (2025) 1, 6.

[12] Schuckart, A.: GenAI and Prompt Engineering: A Progressive Framework for Empowering the Workforce. In: Proceedings of the 29th European Conference on Pattern Languages of Programs, People, and Practices (2024), pp. 1–8.

[13] Johri, A.; Schleiss, J.; Ranade, N.: Lessons for GenAI Literacy from a Field Study of Human-GenAI Augmentation in the Workplace. In: 2025 IEEE Global Engineering Education Conference (EDUCON) (2025), pp. 1–9.

[14] Kaspersen, M. H.; Musaeus, L. H.; Bilstrup, K. E. K.; Petersen, M. G.; Iversen, O. S. et al.: From primary education to premium workforce: Drawing on K-12 approaches for developing AI literacy. In: Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (2024), pp. 1–16.

[15] Creswell, J. W.; Plano Clark, V. L.: Designing and conducting mixed methods research. New York 2017.

