Multi-Stakeholder AI Ethics in Radiology

Implications for integrated technology and workplace design

| Journal | Industry 4.0 Science |

| Issue | Volume 42, Edition 1, Pages 136-143 |

| Open Access | https://doi.org/10.30844/I4SE.26.1.128 |



AI applications in clinical radiology

In clinical medicine, labor is divided meticulously and efficiently, with sequential and parallel task allocation between and within groups of specialists, each of which has clearly defined responsibilities. The requirements for the use of AI support systems in this context relate, on the one hand, to the technical ability of the systems to collect and evaluate a large amount of information, draw conclusions from it, and communicate these conclusions. Given the nonlinear progression of diseases, such AI support systems would also have to use new information to reassess earlier information.

Such universal AI systems do not currently exist. AI solutions are only used for specific aspects of medical decision-making processes, such as the detection of radiological anomalies [1, 2, 3]. On the other hand, the use of AI systems in medicine is subject to particular ethical requirements regarding the transparency, explainability, and traceability of their output, all of which are essential for the interaction between medical professionals and AI as well as for the trust between medical professionals and patients [4, 5, 6].

However, observing ethical criteria in AI development does not automatically lead to improvements in medical care. In addition, ethical considerations do not always take patients and medical staff equally into account, creating a tension between physician assistance and physician competition. As early as 2018, radiologists expressed concerns about the future decision-making authority of AI [7].

As part of the HUMAINE joint project on ergonomics, we investigated the added value of an integrative AI development approach for users and patients, i.e., for a human-centered and thus ethical introduction of AI in radiology workplaces. An exemplary use case was the AI-assisted detection of structural abnormalities (lesions) causing epilepsy as well as the associated therapy options in magnetic resonance imaging (MRI) of epilepsy patients (in short: AI-assisted Epi-MRI). This study is located at the interface between neuroradiology and neurology/epileptology and touches on numerous ethical aspects of medical AI/human co-creation.

Framework conditions for ethical AI in radiology—current status and shortcomings

The detection of lesions that cause epilepsy raises numerous ethical considerations, which can be illustrated using the influential four ethical principles according to Beauchamp and Childress [8] (beneficence, non-maleficence, autonomy, and justice). The principle of beneficence is central to lesion detection, as it has high therapeutic relevance for patients [9, 10]. However, detection rates by non-specialist radiologists have been suboptimal to date due to a lack of knowledge [11] and interdisciplinary interface issues (lack of a neurological focus hypothesis) [12].

The principle of non-maleficence is challenging, especially for radiologists with little experience, as the technical requirements for radiologists are high. The spectrum of lesions to be recognized is wide, and the lesions are sometimes very subtle. Knowledge of pathophysiology and the interdisciplinary clinical consequences of the findings is required [13, 14].

The principle of autonomy becomes relevant when AI has to be integrated into the established intra-radiological and interdisciplinary workflow [15–18], where it stands in direct competition with physicians [7]. The immediacy and wide availability of AI-generated findings, compared to significantly slower medical diagnosis and communication, contribute to this challenge for autonomy.

The principle of justice (fairness) is affected, among other things, because the time required for a thorough evaluation is hardly compatible with the clinical workload [19]. AI support can therefore free up capacity for fair access to sound diagnostics.

However, the integration of AI applications in this field adds further ethical requirements, as AI systems themselves must meet certain ethical standards. In fact, meeting ethical requirements is an overarching goal when integrating AI systems into work processes and patient care. The FUTURE-AI consensus criteria [6] (Fig. 1) were developed by scientists, physicians, ethicists, social scientists, and legal experts from numerous countries and outline requirement profiles for six core principles.

![Figure 1: Ethical requirements and overarching principles for AI technologies in healthcare according to the international consensus guidelines for trustworthy and applicable AI (FUTURE-AI, based on [6]).](https://industry-science.com/wp-content/uploads/2026/01/Langholf2_I4S-26-1_Figure-1-1024x404.jpeg)

Based on these core ethical principles of the FUTURE-AI consensus criteria, key challenges for clinical radiology can be identified:

- Fairness: AI-assisted lesion detection requires a large quantity of high-quality data. This is often difficult to achieve in any given context, as high standards of anonymization and data protection are required.

- Universality: To date, no algorithm is capable of detecting all types of lesions equally well.

- Traceability: Quality control of AI applications has so far focused on the technical side [20, 21]. However, the influence of human decisions on the quality of AI-human co-creation must also be measured against ground truth [22]. Initial methodological groundwork has been laid for this [23], but no comprehensive quality measures are available yet.

- User-friendliness: Radiologists’ hopes (AI as assistant) and fears (AI as competition) associated with integrating AI in radiology workplaces [7, 24, 25] have not yet been investigated for the specific use case.

- Robustness: AI applications in radiology must be tolerant of poor or fluctuating conditions, which may include technical image artifacts, anatomical variants, age-related changes, and insufficient or incorrect preliminary medical information. The quality of the findings, e.g., anomaly detection and derived clinical recommendations, must not suffer as a result [16].

- Explainability: Diagnosis is made by clinical users. AI can provide support if it delivers comprehensible outputs that enable radiologists to develop well-founded diagnoses and treatment recommendations.

Research approach

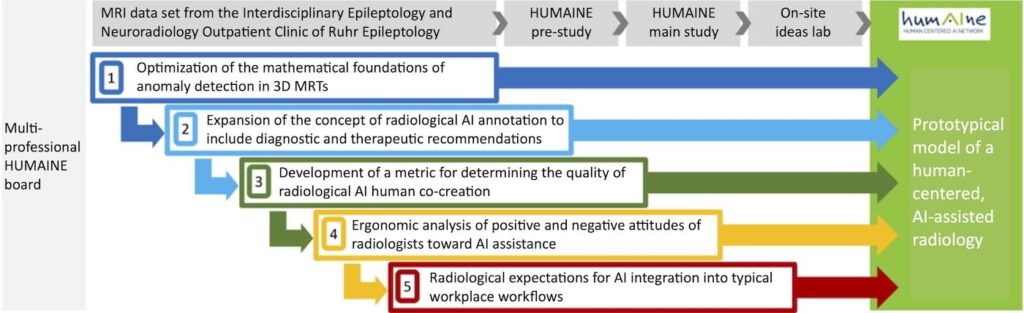

In response to the challenges mentioned above, we have developed modules that support the FUTURE-AI core principles and aim to help realize a comprehensive, prototypical model of human-centered AI-assisted radiology, including workflow design. The aim of this work is not to offer a comprehensive solution for all use cases but to stimulate future developments. Figure 2 shows the five modules created by an interdisciplinary board comprising ergonomists, occupational sociologists, physicians, computer scientists, statisticians, and providers of radiological communication systems (see list of authors).

Module 1: Optimization of the mathematical foundations of anomaly detection in 3D MRIs

Focus on FUTURE-AI core principles: fairness, universality

With the Spatially Context Aware Transformer (SpyCAT), we have developed an approach for detecting small, local anomalies [26]. It is derived from explicitly formulated modeling assumptions, characterizes the normality of MRIs, and identifies anomalies as deviations from that normality. SpyCAT was trained using publicly available 3D data and 900 MRIs from the Interdisciplinary Epileptology-Neuroradiology Outpatient Clinic of Ruhr Epileptology. To comply with GDPR requirements, a new defacing method was developed and validated [27].

This approach not only yields better predictive accuracy compared to other methods but also widens applicability in line with the FUTURE-AI principles. To increase fairness, the annotated training data will incorporate the international MELD database [28], while the short processing time of less than 30 seconds per MRI sequence will enhance user-friendliness. SpyCAT also follows other FUTURE-AI principles.
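The idea of characterizing normality and flagging deviations from it can be illustrated with a deliberately simplified sketch. This is not SpyCAT and not the method of [26]; it swaps in a plain per-feature z-score, and the function names `fit_normality` and `anomaly_score` as well as the representation of a patch as a short list of numbers are assumptions made purely for illustration.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def fit_normality(
    normal_patches: Sequence[Sequence[float]],
) -> Tuple[List[float], List[float]]:
    """Estimate per-feature mean and standard deviation from normal patches.

    Each patch is assumed to be a fixed-length feature vector (hypothetical).
    """
    columns = list(zip(*normal_patches))
    means = [mean(col) for col in columns]
    # Replace a zero standard deviation to avoid division by zero later.
    stds = [pstdev(col) or 1e-8 for col in columns]
    return means, stds


def anomaly_score(
    patch: Sequence[float], means: Sequence[float], stds: Sequence[float]
) -> float:
    """Largest absolute z-score across features; higher means less normal."""
    return max(abs(x - m) / s for x, m, s in zip(patch, means, stds))
```

A patch that matches the estimated mean receives a score of zero, and the score grows as any single feature moves away from the values seen in the normal data. A real system would learn far richer representations of normality than two summary statistics per feature.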

Module 2: Expansion of AI annotation to include diagnostic and therapeutic recommendations

Focus on FUTURE-AI core principles: universality, robustness

For an AI system to output clinical recommendations derived from its findings, these recommendations must already have been entered as annotations at the time of AI training. In our use case, for example, we linked the categories of lesion etiology according to the SNOMED classification with their potential epileptogenicity. One challenge is that the same lesion etiologies can have different clinical consequences; for example, the size and location of a specific lesion also determine its operability.

To transfer such extended annotations validly into practical use, the approach in Module 1 must be extended by an additional layer of machine learning, which in turn requires additional data. To obtain these data in the future, current developments in multicenter consortium structures should be pursued further. This module particularly concerns the principles of universality, as therapeutic recommendations increase applicability, and robustness, as any influence of unfavorable external circumstances must be ruled out, especially in the case of clinical recommendations.
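The structure of such an extended annotation can be sketched as a small data model. The following is a minimal illustration under stated assumptions, not the project's actual annotation schema: the category labels `ETIOLOGY_A` and `ETIOLOGY_B`, the region label `REGION_X`, the size threshold, and the rule `toy_rule` are all invented placeholders and carry no clinical meaning. The sketch only shows how an etiology-level property (potential epileptogenicity) and lesion-level properties (size, location) can be combined so that two lesions of the same etiology lead to different outputs.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Placeholder category labels. These are NOT real SNOMED codes or clinical facts.
EPILEPTOGENIC_BY_ETIOLOGY: Dict[str, bool] = {
    "ETIOLOGY_A": True,
    "ETIOLOGY_B": False,
}


@dataclass(frozen=True)
class Lesion:
    etiology: str    # key into EPILEPTOGENIC_BY_ETIOLOGY
    size_mm: float   # largest diameter (hypothetical field)
    location: str    # coded anatomical region (hypothetical field)


@dataclass(frozen=True)
class ExtendedAnnotation:
    lesion: Lesion
    potentially_epileptogenic: bool
    operable: bool


def annotate(lesion: Lesion, is_operable: Callable[[Lesion], bool]) -> ExtendedAnnotation:
    """Attach etiology-level and lesion-level information to one finding."""
    # Raises KeyError for an etiology that was never annotated.
    epileptogenic = EPILEPTOGENIC_BY_ETIOLOGY[lesion.etiology]
    return ExtendedAnnotation(lesion, epileptogenic, is_operable(lesion))


def toy_rule(lesion: Lesion) -> bool:
    """Made-up rule for demonstration only: small and outside REGION_X."""
    return lesion.size_mm < 20.0 and lesion.location != "REGION_X"
```

Passing the operability rule in as a function keeps the etiology table separate from lesion-specific reasoning, which mirrors the point that etiology alone does not determine the clinical consequence.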

Module 3: Development of a metric for evaluating the quality of AI-human co-creation of findings

Focus on FUTURE-AI core principles: traceability, explainability

Our quality control system for AI/human diagnosis co-creation [29] extends the basic accuracy formula Quality Q = (ground truth – error) / ground truth with several optional components. The ground truth can be defined variably (entire examinations, individual lesion types, etc.), and differentially weighted diagnostic axes, including clinical recommendations derived from anomalies, can be incorporated into the end result. False negative and false positive findings can be evaluated as quality-reducing depending on the application. The effect of MRI training and critical or non-critical attitudes towards false positive and negative results can also be measured.
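As a minimal sketch of how the basic formula and two of its optional components (weighted diagnostic axes, selectable error types) might fit together, consider the following. It is not the toolbox described in [29]; the names `AxisResult`, `axis_quality`, and `overall_quality`, the use of a weighted mean to combine axes, and the simple summing of false negatives and false positives as "error" are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class AxisResult:
    """Counts for one diagnostic axis, e.g. lesion detection or recommendation."""
    ground_truth: int      # number of items defined as ground truth
    false_negatives: int   # ground-truth items that were missed
    false_positives: int   # reported items that are not in the ground truth
    weight: float = 1.0    # relative importance of this axis (assumed)


def axis_quality(r: AxisResult, count_fn: bool = True, count_fp: bool = True) -> float:
    """Q = (ground truth - error) / ground truth for a single axis.

    Q can become negative if the counted errors exceed the ground truth.
    """
    if r.ground_truth <= 0:
        raise ValueError("ground_truth must be positive")
    error = 0
    if count_fn:
        error += r.false_negatives
    if count_fp:
        error += r.false_positives
    return (r.ground_truth - error) / r.ground_truth


def overall_quality(
    results: Iterable[AxisResult], count_fn: bool = True, count_fp: bool = True
) -> float:
    """Weighted mean of the per-axis qualities."""
    results = list(results)
    total_weight = sum(r.weight for r in results)
    if total_weight <= 0:
        raise ValueError("total weight must be positive")
    weighted = sum(r.weight * axis_quality(r, count_fn, count_fp) for r in results)
    return weighted / total_weight
```

For example, an axis with 10 ground-truth lesions, 2 missed and 1 false positive yields Q = (10 - 3) / 10 = 0.7 when both error types count, and Q = 0.8 when false positives are treated as non-critical via `count_fp=False`.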

A pilot study showed significantly better results with AI/human co-diagnosis than with human diagnosis alone. The quality control procedure takes into account the principle of traceability through verifiable metrics, whereas explainability is achieved through application-specific weighting and transparent metrics.

Module 4: Ergonomic analysis of positive and negative attitudes of radiologists toward AI assistance

Focus on FUTURE-AI core principles: user-friendliness, universality, robustness

A standardized questionnaire (perception of human-AI interaction and cognitive, affective, and behavioral acceptance) in conjunction with expert interviews (interpretation using grounded theory [30]) was used to assess radiologists’ attitudes toward AI assistance. Radiologists recognize the (objectively given) diagnostic benefits and time savings of AI applications, but they are critical of the unclear reliability of AI solutions and their own possible uncertainties when making diagnoses using AI. The use of AI does not reduce their own contribution to diagnosis, provided that both AI and radiologists make interactive diagnoses (obligatory co-creation of findings).

However, the majority view the “dictation of findings by AI” critically. While it was positively noted that AI affords more time for individual interactions with patients, negative attitudes were driven by the expectation that using AI could further increase workloads and thus induce more stress. Freedom in organizing their own work and relief from complex diagnostic decisions are among radiologists’ top priorities.

Overall, radiologists do not see their role fundamentally challenged by AI assistance. These findings are closely linked to the FUTURE-AI principles of user-friendliness (in particular of human-centered AI, user interaction, and efficiency), universality (especially adaptability and applicability), and robustness (reducing fears about the unreliability of AI assistance).

Module 5: Radiological expectations for AI integration into typical workflows

Focus on FUTURE-AI core principles: explainability, user-friendliness

According to the results of the interview study (see above), radiologists’ expectations regarding the integration of AI into their own workflow depend on their workplace, role or position at the institute/clinic, and other personal criteria. Therefore, individual workplace considerations must always be taken into account.

However, there are also more general criteria for user acceptance. These include, for example, an accompanied and interactive AI introduction at the workplace, expectations towards AI analysis speed, the ability to enter clinical information into the AI, the provision of derived recommendations by the AI, the AI’s indication of the probability of the correctness of results, the presentation of alternative findings, and the AI’s assistance in formulating findings.

The FUTURE-AI principle of explainability is crucial, as both the provision of recommendations and the desire to input clinical information require a transparent human-AI interface. In addition, the principle of user-friendliness is addressed in the form of interactive elements and efficient usability.

Prototypical HUMAINE model of human-centered, AI-assisted radiology

The findings from all modules are incorporated into a HUMAINE model for human-centered, AI-assisted radiology as integrated technology and work design (Fig. 2). The basic expectation is that the resulting model of human-centered radiological AI will meet all expectations if the module phase succeeds in evaluating the interests of stakeholders and all relevant ethical criteria. Whether the application-specific goals, including a global ethical improvement, have been achieved remains open.

Outlook on the transfer potential for other use cases and contexts

The current use case, “AI-assisted Epi-MRI,” exemplifies numerous requirements that are also placed on modern radiology and the people working in it. We therefore assume that most of the knowledge gained here can be transferred to other radiological use cases.

The primary indication is that AI can benefit both quality and quantity [31]. We believe that our modular approach to developing workplace-specific, human-centered AI applications in all areas of medicine can increase AI acceptance and contribute to better outcomes. In this context, the FUTURE-AI consensus criteria are suitable for reviewing numerous ethical expectations of human-centered clinical AI, both from the perspective of AI users and the patients “affected” by it. The expectation of added value should apply to all AI stakeholders.

If the circle of potential beneficiaries is expanded to include the healthcare system or stakeholders who were not the focus of the current analysis (such as manufacturers and operators of radiological hardware and software and communications infrastructure), it may be necessary to define modules other than, or in addition to, those invoked here. The reference to manufacturers and operators builds a bridge to the application of AI in industry and administration. Workflows in these areas can be just as complex as in medicine.

There, too, many different and sometimes conflicting stakeholder interests are present. We propose that the principle of human-centered AI development and application, structured into modules that include qualitative and ethical validation tailored to the respective use case, also be evaluated in this context. However, it is quite possible that other ethical catalogs [4, 32] are more applicable there than the one applied in the current clinical use case.

The HUMAINE joint project in occupational science is funded by the German Federal Ministry of Research, Technology, and Space (BMFTR) FKZ 02L19C200 and supervised by the Project Management Agency Karlsruhe (PTKA). The authors are responsible for the content of this publication.

Bibliography

[1] Chukwujindu, E.; Faiz, H.; Sara, A. D.; Faiz, K.; De Sequeira, A.: Role of artificial intelligence in brain tumour imaging. In: European journal of radiology 176 (2024), 111509. DOI: 10.1016/j.ejrad.2024.111509.

[2] Subudhi, A.; Dash, P.; Mohapatra, M.; Tan, R. S.; Acharya, U. R.; Sabut, S.: Application of machine learning techniques for characterization of ischemic stroke with MRI images: a review. In: Diagnostics, 12 (2022) 10, 2535.

[3] Schlaeger, S.; Shit, S.; Eichinger, P.; Hamann, M.; Opfer, R.; Krüger, J.; … Hedderich, D. M.: AI-based detection of contrast-enhancing MRI lesions in patients with multiple sclerosis. In: Insights Imaging, 14 (2023) 1, p. 123. DOI: 10.1186/s13244-023-01460-3.

[4] Jobin, A.; Ienca, M.; Vayena, E.: The global landscape of AI ethics guidelines. Nature machine intelligence, 1 (2019) 9, pp. 389-399.

[5] Currie, G.; Hawk, K. E.: Ethical and legal challenges of artificial intelligence in nuclear medicine. In: Seminars in Nuclear Medicine, 51 (2021) 2, pp. 120-125.

[6] Lekadir, K.; Frangi, A. F.; Porras, A. R.; Glocker, B.; Cintas, C.; Langlotz, C. P.; … Starmans, M. P.: FUTURE-AI: international consensus guideline for trustworthy and deployable artificial intelligence in healthcare. In: BMJ, 388 (2025).

[7] Munk, P. L.: A Brave New World Without Diagnostic Radiologists? Really? Can Assoc Radiol J.; 69 (2018) 2, 119. DOI: 10.1016/j.carj.2018.03.004.

[8] Beauchamp, T. L.; Childress, J. F.: Principles of biomedical ethics. New York: Oxford University Press 2025.

[9] Rugg-Gunn, F.; Miserocchi, A.; McEvoy, A.: Epilepsy surgery. Pract Neurol.; 20 (2020) 1, pp. 4-14. DOI: 10.1136/practneurol-2019-002192.

[10] Téllez-Zenteno, J.F.; Ronquillo, L. H.; Moien-Afshari, F.; Wiebe, S.: Surgical outcomes in lesional and non-lesional epilepsy: a systematic review and meta-analysis. Epilepsy Res.; 89 (2010), 2-3, pp. 310-318.

[11] Walger, L.; Schmitz, M. H.; Bauer, T.; Kügler, D.; Schuch, F.; Arendt, C.; … Rüber, T.: A public benchmark for human performance in the detection of focal cortical dysplasia. In: Epilepsia Open, 10 (2025) 3, pp. 778-786. DOI: 10.1002/epi4.70028.

[12] Wehner, T.; Weckesser, P.; Schulz, S.; Kowoll, A.; Fischer, S.; Bosch, J.; … Wellmer, J.: Factors influencing the detection of treatable epileptogenic lesions on MRI. A randomized prospective study. Neurological research and practice, 3 (2021) 1, 41. DOI: 10.1186/s42466-021-00142-z.

[13] Wellmer, J.; Elger, C. E.: MRI in the presurgical evaluation. In: Shorvon, S.; Engel, J. Jr.; Perucca, E. (eds.): The Treatment of Epilepsy, 3rd edition. Blackwell Publishing 2009.

[14] Passaro, E. A.: Neuroimaging in Adults and Children With Epilepsy. Continuum (Minneap Minn), 29 (2023) 1, pp. 104-155. DOI: 10.1212/CON.0000000000001242.

[15] Katal, S.; York, B.; Gholamrezanezhad, A.: AI in radiology: From promise to practice – A guide to effective integration. In: Eur J Radiol, 181 (2024), 111798. DOI: 10.1016/j.ejrad.2024.111798.

[16] Behzad, S.; Tabatabaei, S. M. H.; Lu, M. Y.; Eibschutz, L. S.; Gholamrezanezhad, A.: Pitfalls in interpretive applications of artificial intelligence in radiology. In: American Journal of Roentgenology, 223 (2024) 4, e2431493. DOI: 10.2214/AJR.

[17] Ranschaert, E.; Topff, L.; Pianykh, O.: Optimization of Radiology Workflow with Artificial Intelligence. In: Radiol Clin North Am. 59 (2021) 6, pp. 955-966. DOI: 10.1016/j.rcl.2021.06.006.

[18] Kapoor, N.; Lacson, R.; Khorasani, R.: Workflow Applications of Artificial Intelligence in Radiology and an Overview of Available Tools. In: J Am Coll Radiol.; 17 (2020) 11, pp. 1363-1370. DOI: 10.1016/j.jacr.2020.08.016.

[19] McDonald, R. J.; Schwartz, K. M.; Eckel, L. J.; Diehn, F. E.; Hunt, C. H. et al.: The effects of changes in utilization and technological advancements of cross-sectional imaging on radiologist workload. In: Academic radiology, 22 (2015) 9, pp. 1191-1198.

[20] Dashtkoohi, M.; Ghadimi, D. J.; Moodi, F.; Behrang, N.; Khormali, E.; Salari, H. M.; … Rad, H. S.: Focal cortical dysplasia detection by artificial intelligence using MRI: A systematic review and meta-analysis. In: Epilepsy & Behavior, 167 (2025), 110403.

[21] David, B.; Kröll‐Seger, J.; Schuch, F.; Wagner, J.; Wellmer, J.; Woermann, F.; … Rüber, T.: External validation of automated focal cortical dysplasia detection using morphometric analysis. In: Epilepsia, 62 (2021) 4, pp. 1005-1021.

[22] Mercolli, L.; Rominger, A.; Shi, K.: Towards quality management of artificial intelligence systems for medical applications. In: Zeitschrift für Medizinische Physik, 34 (2024) 2, pp. 343-352. DOI: 10.1016/j.zemedi.2024.02.001.

[23] Walger, L.; Adler, S.; Wagstyl, K.; Henschel, L.; David, B.; Borger, V.; … Rueber, T.: Artificial intelligence for the detection of focal cortical dysplasia: Challenges in translating algorithms into clinical practice. In: Epilepsia, 64 (2023) 5, pp. 1093-1112. DOI: 10.1111/epi.17522.

[24] Yang, L.; Ene, I. C.; Arabi Belaghi, R.; Koff, D.; Stein, N.; Santaguida, P.: Stakeholders’ perspectives on the future of artificial intelligence in radiology: a scoping review. In: European Radiology, 32 (2022) 3, pp. 1477-1495. DOI: 10.1007/s00330-021-08214-z.

[25] Goyal, S.; Sakhi, P.; Kalidindi, S.; Nema, D.; Pakhare, A. P.: Knowledge, attitudes, perceptions, and practices related to artificial intelligence in radiology among Indian radiologists and residents: a multicenter nationwide study. In: Cureus, 16 (2024) 12. DOI: 10.7759/cureus.76667.

[26] Schwarz, J.; Will, L.; Wellmer, J.; Mosig, A.: A Patch-based Student-Teacher Pyramid Matching Approach to Anomaly Detection in 3D Magnetic Resonance Imaging. In: Proceedings of Machine Learning Research, 250 (2024), pp. 1357-1370.

[27] Wolf, J.: Comparison and Evaluation of Anonymization Approaches for Magnetic Resonance Images, B.Sc. Thesis, Faculty of Computer Science, Ruhr University Bochum, 2025.

[28] Spitzer, H.; Ripart, M.; Whitaker, K.; D’Arco, F.; Mankad, K.; Chen, A. A.; … Wagstyl, K.: Interpretable surface-based detection of focal cortical dysplasias: a Multi-centre Epilepsy Lesion Detection study. In: Brain, 145 (2022) 11, pp. 3859-3871. DOI: 10.1093/brain/awac224.

[29] Will, L.; Schwarz, J.; Denz, R. et al.: A metrics toolbox for the quality assessment of joint human-AI reporting in radiology (in preparation for submission).

[30] Glaser, B. G.; Strauss, A. L.; Paul, A. T.; Kaufmann, S.; Hildenbrand, B.: Grounded theory – Strategien qualitativer Forschung (3rd, unchanged edition). Verlag Hans Huber, 2010.

[31] Obuchowicz, R.; Lasek, J.; Wodziński, M.; Piórkowski, A.; Strzelecki, M.; Nurzynska, K.: Artificial intelligence-empowered radiology—current status and critical review. In: Diagnostics, 15 (2025) 3, 282. DOI: 10.3390/diagnostics15030282.

[32] Ohliger, U.; Winter, J.; von Richthofen, G.; Aslan Gümüsay, A.; Hünsche, M.: Menschengerechte Arbeitswelt und ressourceneffizientes Wirtschaftswachstum durch KI – Potenziale für den nachhaltigen Technologieeinsatz. In: Fabisch, N.; Schmidpeter, R.; Schuster, G.; Sihn-Weber, A. (eds.): SDG 8: Menschenwürdige Arbeit und Wirtschaftswachstum. Globale Ziele für nachhaltige Entwicklung. Berlin Heidelberg 2025. https://doi.org/10.1007/978-3-662-68327-9_1-1.
