Improving Social Media Moderation with Generative Language Models |

Study on the detection and correction of disinformation

| Journal | Industry 4.0 Science |

| Issue | Volume 40, 2024, Edition 6, Pages 72-79 |

| Open Access | https://doi.org/10.30844/I4SE.24.6.72 |

| Bibliography | Share | Cite | Download |

Abstract

Keywords

Article

The threat of disinformation in the digital age

In today’s digital age, society faces new challenges posed by generative language models and the global spread of disinformation on social media. The latter not only influences public opinion, but also poses a threat to democratic processes. Former Google CEO Eric Schmidt aptly remarked that the internet is the greatest anarchist experiment in history and the first that humanity has constructed but does not understand [1]. This quote illustrates the immense complexity and unpredictability of the internet, which has revolutionized the exchange of information while simultaneously providing a platform for disinformation and hate speech.

A significant example of the current challenges facing the global community is the choice of the term “post-truth” as the 2016 word of the year. In a post-factual era, where facts and logic often take a back seat to emotional and personal beliefs, objective facts have less influence on public opinion than ever before [2]. This development underlines the urgent need to develop and implement effective strategies to combat disinformation.

Why disinformation must be tackled

The targeted spread of disinformation is a serious challenge that goes beyond simply bad journalism or unintentional misinformation. Disinformation is often deliberately used to strengthen political power or promote financial interests [3]. Various studies also show that disinformation and hate speech are purposely used to intensify social divisions.

One example of this is the rise of hate crime against refugees and migrants, which is largely motivated by the spread of disinformation about these groups on social media. At worst, disinformation can result in real violence [2]. A Spanish study shows that hate speech and disinformation are most often spread by politicians and radical groups [4]. In other cases, disinformation is deliberately used to undermine the credibility of established news services [5].

This development is particularly threatening for democracies, which rely on an open and constructive exchange of opinions. The latter is significantly disrupted by such manipulation. Disinformation not only serves as a tool for exerting influence, but also acts as a catalyst for hate crime, which jeopardizes social cohesion.

Against this background, this paper aims to investigate whether a generative language model such as GPT-4o is able to effectively detect and correct cases of disinformation in social media moderation.

The complexity of disinformation

Having emphasized the importance of combating disinformation as a key component of protecting democratic processes, it is important to develop a clear understanding of what disinformation actually entails. This enables both the identification of disinformation and the development of appropriate countermeasures.

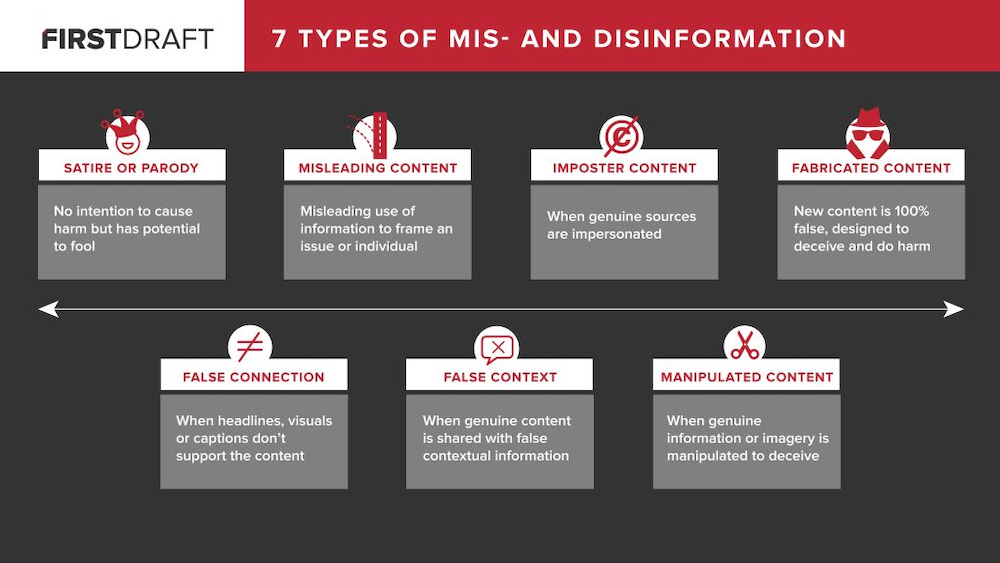

Disinformation can be divided into different categories, as shown in the figure above. The seven main types of disinformation include:

- Satire or parody: Content that has no malicious intent but has the potential to mislead people.

- Misleading content: This describes information that is deliberately being misrepresented or taken out of context to cause harm or distort a particular topic or person.

- Imposter content: This type of disinformation occurs when authentic sources are imitated or falsified to give the appearance of credibility.

- Fabricated content: This is completely fabricated content created with the intent to deceive and cause harm.

- False connection: These are misleading headlines, images or videos that do not reflect the actual content of the article or news item.

- False context: Genuine material is placed in a false or distorted context in order to manipulate perception.

- Manipulated content: In this form of disinformation, genuine image or video material is deliberately edited or manipulated to create a false impression. [3]

Disinformation is a complex phenomenon that can undermine public perception and trust in information in various ways. This poses a considerable challenge for generative language models and makes the research question particularly exciting: Can a generative language model successfully cope with the many facets of disinformation?

Insights into current research

Research in the field of hate speech is extensive, but there is comparatively less work on uncovering disinformation. Nevertheless, some studies in this area are worth highlighting.

In one paper, researchers developed an automated framework for detecting disinformation in political speeches. Using various classification methods, the system extracts specific features, such as topic, location, profile and credibility of the speaker as well as contextual information. The trained language model, which is based on a Support Vector Machine, achieved a recognition accuracy of 74% on the “Liar” dataset, consisting of about 12,000 manually labeled political statements [6]. German research institutes have also made significant contributions: The Fraunhofer Gesellschaft developed a tool to warn against fake news, while the Max Planck Institute developed a language model to classify Twitter posts according to German criminal law [7].

In the field of generative language models, the study “Detecting Hate Speech with GPT-3” by Collins is worth mentioning. This study examined the ability of the GPT-3 language model to recognize and classify hate speech. In various learning scenarios (zero-shot, one-shot, few-shot), the accuracy of the language model varied between 55% and 67%, but could be increased to 85% in few-shot scenarios [8].

Data collection and classification of information sources

In this experiment, a total of 50 data sets were collected, covering a variety of topics and sources. 40 of these 50 data setswere classified as potential cases of disinformation, while the remaining 10 data sets were categorized as correct information. The potential cases of disinformation were further divided into four categories: ten records contained satirical content, ten were marked as false context, ten held fabricated content, and the remaining ten represented misinformation.

The data sets include both online messages and comments from social media users, such as Facebook and Twitter/X. Only text content was considered. Visual or multimodal content such as images and videos were not considered, with the exception of cases where text was extracted from images.

Correctiv.org and Mimikama were used as reliable sources for the collection of fake news. Satirical content was obtained from recognized satirical sources such as “Der Postillon” and “Titanic Magazin”. The serious data sets came from various established news portals such as “Die Zeit”, “ZDF”, “Welt” and “Der Spiegel”. This selection ensures broad thematic coverage as well as highly relevant content.

The data sets cover a diverse range of topics, such as climate change, celebrities, crimes, curiosities, political events and scientific topics, such as health and technology. All selected topics are of current relevance, with the oldest contribution dating back to the beginning of 2024. The majority of the contributions focus on the months of May and June 2024, which ensures the topicality and relevance of the data.

Application of the GPT-4o language model

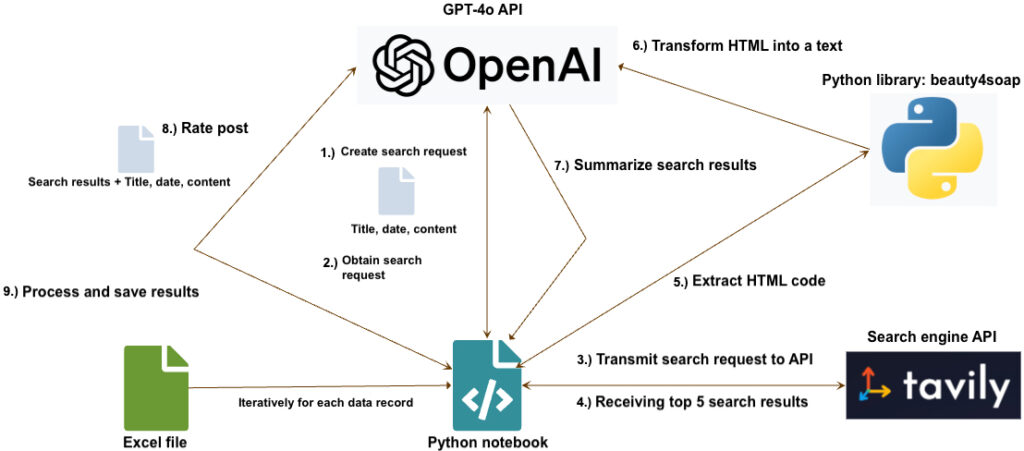

The GPT-4o model is activated via the OpenAI platform, using a Python notebook. The Python script runs through the collected data sets iteratively and passes three key data points to the GPT-4o language model: The publication date of the article, the title (if available) and the content of the article. First, the language model uses this information to create a search query that returns five results via a search engine API.

The provider Tavily was selected for the search engine API, as the integration into a Python notebook proved to be particularly straightforward due to the library provided [9]. The corresponding URLs of these search results are then read out, using Python. The “Beautiful Soup” library is used to extract the text from the HTML content [10]. This content is then summarized by the GPT-4o language model and returned in a JSON array.

In the next step, the GPT-4o model is activated once again to make an assessment based on the original contribution and the summarized information of the recorded web pages. This happens via a defined format, in which the language model indicates whether the content is correct, partially correct or incorrect. The language model also provides a reason for the rating and suggests corrections, if necessary.

The results are saved in an Excel spreadsheet and the author manually analyzes whether the classification is correct and the reasoning is comprehensible. Another aspect of the analysis is to check whether the language model has correctly captured the context of the post: whether a satirical post has been recognized as such or whether a serious post has incorrectly been classified as satire, for example.

The technical procedure of the experiment can be seen in the figure below.

Evaluation and assessment

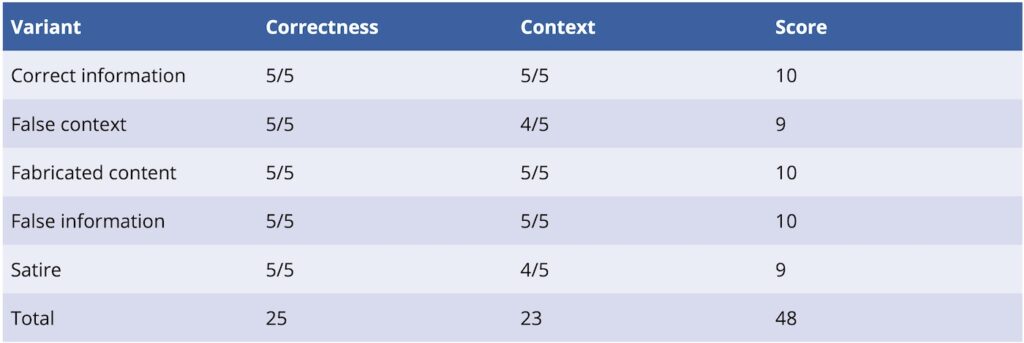

The evaluation of the results followed a clear pattern: If the author was able to fully understand the language model’s reasoning, the data set does not receive a point deduction. This assumes that the language model has correctly recognized the content and interpreted the context of the post appropriately. Context refers to the language model’s ability to grasp the overall meaning and intention of the post.

The following example illustrates this approach: If the language model does not recognize the satirical intention behind a post, it manages to identify an apparent content error, yet does not take into account that the supposed disinformation is a deliberate stylistic choice.

Points are deducted as follows:

- One point is deducted for incorrect content recognition.

- One point is deducted for misunderstanding the context.

If we are given 50 data records, a maximum total score of 50 points can be achieved. Points are only deducted in accordance with theaforementioned criteria if either the accuracy of the content or the context was not correctly gathered; The occurrence of both errors results in a deduction of two points per data set. If 25 data records do not fulfill both criteria, the total score is 0 points. Minus points are not possible.

The assessment method is comparable to a teacher assessing a student and makes it possible to consider the content-related reasoning of the language model. However, it should be noted that this assessment method is more subjective and less neutral than the calculation of quantitative indicators.

The final score is used to calculate the accuracy, which reflects the overall performance of the setup. The term “accuracy” here refers exclusively to the percentage of the total 50 points that can be achieved. Achieving 40 out of a possible 50 points translates to an accuracy of 80%. The term “correctness” was deliberately avoided, as it is already used in this context specifically for the criterion of correctness of content.

The GPT-4o language model, in combination with the Tavily search request-API, achieved an overall score of 48 points.

A positive example can be seen here:

– Content of the misinformation: The observed sea level fall in Oslo is demonstrated in the graph below. The data clearly shows that the sea level has been falling for over 100 years and will have fallen by a further 28 cm by the end of the century.

-Correctness rating : “Partly correct”

-Reasoning: “The text claims that the sea level in Oslo has been falling for over 100 years and will have fallen by a further 28 cm by the end of the century. This is partly correct, as there is actually a relative drop in sea level in Oslo caused by isostatic land uplift. The land is rising faster than the sea level is rising, resulting in a relative decline.

However, absolute sea levels are rising worldwide due to climate change, which has been proven by numerous scientific studies and reports, such as that of the IPCC. Sources, such as the dpa fact check and the Helmholtz Centre for Ocean Research, confirm that global sea levels are rising, even if local geological processes, such as land uplift in Oslo, can lead to a relative decline.”

In this example, GPT-4o shows that it can even recognize partially correct information and correct the context accordingly, giving the reader a comprehensive overview of the facts.

In two cases, however, the language model failed to understand the context.

In one case, the satirical intention was overlooked:

– Content of the misinformation: Reports in the New York Times stating that the organizers of the Paris Olympics want to deter athletes from performance-reducing activities with anti-sex measures, are true. The cardboard beds that collapse immediately at the slightest movement are just the tip of the iceberg. The pleasantly warm showers in the apartments have already been replaced by freshly filled ice barrels. (…) Resisting the charms of the Minister of Economic Affairs could, however, become the 49th discipline of this year’s games for the security forces, where the motto in sexual terms is: “Nottaking part is what counts!”

– Correctness rating of GPT-4o: “False”

– Reasoning: “The article contains numerous false and exaggerated claims. The organizers of the Olympic Games in Paris have denied the rumors about ‘anti-sex measures’ and ‘anti-sex beds’. The cardboard beds were chosen for environmental reasons and are sturdy enough to provide comfort for the athletes. There are no reports of ice barrels in place of showers, flamed bushes or condoms coated with ketamine. These claims are exaggerated and do not correspond to reality. Sources such as DER SPIEGEL and WDR confirm that the rumors about anti-sex measures are unsubstantiated.”

Another point was deducted when evaluating a post with the wrong context, as the language model had misunderstood the post. Specifically, the problem was that GPT-4o did not recognize that the title chosen by the author had no relation to the actual content of the post.

In conclusion, the study shows that the GPT-4o model in combination with a search engine API, such as Tavily, is highly effective in detecting and correcting disinformation. With an accuracy of 96%, the language model was able to classify most of the data sets correctly, although in some cases it struggled to fully capture the context. These results underline the potential of generative language models to support content moderation and combat disinformation in digital media.

Bibliography

[1] TechMonitor: 10 Most Notable Quotes from Google’s Eric Schmidt. URL: https://techmonitor.ai/technology/10-most-notable-quotes-from-googles-eric-schmidt-4207960, Accessed17.08.2024.[2] European Foundation for South Asian Studies (ed.): The role of fake news in fueling hate speech and extremism online; Promoting adequate measures for tackling the phenomenon.URL: https://www.efsas.org/publications/study-papers/the-role-of-fake-news-in-fueling-hate-speech-and-extremism-online/, Accessed17.08.2024.

[3] Wardle: Fake news. It’s complicated. URL: https://firstdraftnews.org/articles/fake-news-complicated/, Accessed17.08.2024.

[4] Blanco-Herrero, D.; Sánchez-Holgado, P.: Fake news and hate speech: who is to blame? In: Ninth International Conference on Technological Ecosystems for Enhancing Multiculturality (TEEM’21), Barcelona: ACM, Oct. 26, 2021, pp. 448-451.

[5] Drobnik, Holan, A.: PolitiFact – The media’s definition of fake news vs. Donald Trump’s. URL: https://www.politifact.com/article/2017/oct/18/deciding-whats-fake-medias-definition-fake-news-vs/, Accessed 17.08.2024.

[6] Purevdagva, C. et al.: A machine-learning based framework for detection of fake political speech. In: 2020 IEEE 14th International Conference on Big Data Science and Engineering (BigDataSE). Guangzhou, China: IEEE, December 2020, pp. 80-87.

[7] Machnig, L.: Combating hate speech with artificial intelligence. URL: https:/www.telekom.com/en/company/details/combating-hate-speech-with-artificial intelligence-628530, Accessed 17.08.2024.

[8] Chiu, K.-L.; Collins, A.; Alexander, R.: Detecting Hate Speech with GPT-3. In: arXiv (2022). URL: https://arxiv.org/abs/2103.12407.

[9] Tavily: Tavily Search API.URL: https://tavily.com/, Accessed 17.08.2024.

[10] Beautiful Soup: Beautiful Soup 4 Documentation.

Your downloads

Potentials: Business Models Services

Solutions: Quality Management Safety