JOCAT (Job Change Acceptance Toolbox)

A change management approach for implementing AI systems ethically and sustainably

Journal: Industry 4.0 Science

Issue: Volume 42, Edition 1, Pages 80-91

Open Access: https://doi.org/10.30844/I4SE.26.1.74


Artificial Intelligence (AI) is transforming work in unprecedented ways. As an umbrella term for technologies emulating human cognition, AI automates routine tasks and augments decision-making, reasoning, and content generation [1]. Characterized by autonomy, self-learning, and opacity, AI systems differ fundamentally from previous technologies, enabling deeper and broader transformations of organizational structures, processes, and cultural dynamics [2, 3]. As a result, AI implementation generates heightened uncertainty and resistance, frequently rooted in ethical and psychological concerns [3, 4].

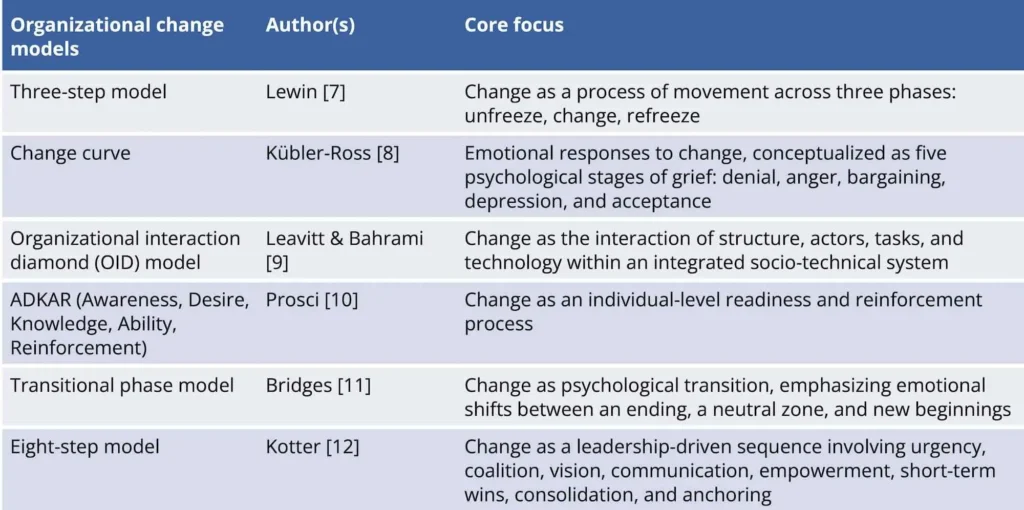

While these challenges are intensified by AI’s complexity, many observed reactions reflect familiar patterns of organizational change. Hence, revisiting foundational change models can help illuminate the conditions under which transformation succeeds. Over the past decades, numerous frameworks have emerged (e.g., by Lewin, Kübler-Ross, Leavitt, Prosci, Bridges, and Kotter), each emphasizing distinct but important aspects of change (see Fig. 1). Broader concepts such as Organizational Development (OD) and Action Research (AR) complement these models by highlighting iterative learning, stakeholder engagement, and cultural alignment, elements that are likewise essential for sustainable transformation [5, 6].

A central insight shared across these models is that successful change depends on people and their willingness to engage [13, 14]. Nevertheless, most models remain fragmented, focusing on isolated aspects rather than offering an integrated perspective [15, 16]. For instance, participation and involvement are stressed in ADKAR [10] and OID [9] models, while communication is central to Kotter’s model [12] and Kübler-Ross’ emotional curve [8]. Leadership is treated as a key lever in Kotter’s model [12], whereas OD approaches [6] emphasize cultural alignment as both a change driver and barrier.

Considering this fragmentation, recent reviews [13-17] emphasize the need for integrated, adaptable frameworks that address these factors jointly. These should enable more holistic as well as ethically and psychologically responsible approaches to contemporary transformation processes.

However, rooted in earlier technological paradigms, most models, even in integrated form, fall short of addressing the volatile, adaptive, and data-intensive nature of AI systems [3]. To adequately respond to today’s transformation demands, change frameworks must also account for the dynamic interplay between humans and AI, the opacity of algorithmic processes, and ethical challenges tied to data use and augmentation [3, 18]. This leads to the guiding question of this article: How can change management approaches be designed to meet the unique psychological, ethical, and structural demands of AI system implementation?

To address this question, a two-part mixed-method approach is adopted. The first part establishes a holistic view of change processes by integrating classical models and applying them to a use case from FraAlliance (a joint venture between Fraport AG and Lufthansa). The use case reveals conceptual gaps in meeting AI-specific demands, which are further substantiated by insights from the literature on AI-related transformation processes. The second part draws on six expert interviews to explore how these gaps manifest in practice. Together, these insights inform the development of the Job Change Acceptance Toolbox (JOCAT), a human-centered, practice-oriented framework for AI-related change.

Part I: Integration of change models and identification of AI-specific gaps

This first part aims to develop an integrated framework offering a holistic view of change processes, which is essential for sustainable transformation. The framework is derived by integrating the established change models summarized in Figure 1 and is grounded in findings from recent literature reviews [see 13-17, 19, 20]. This integrated model is subsequently applied to a real change case (FraAlliance) involving AI-based baggage detection and further enriched with insights from the literature on AI-related change processes [see 21-25] to uncover gaps in addressing AI-specific change requirements.

Deriving an integrated change management model from literature

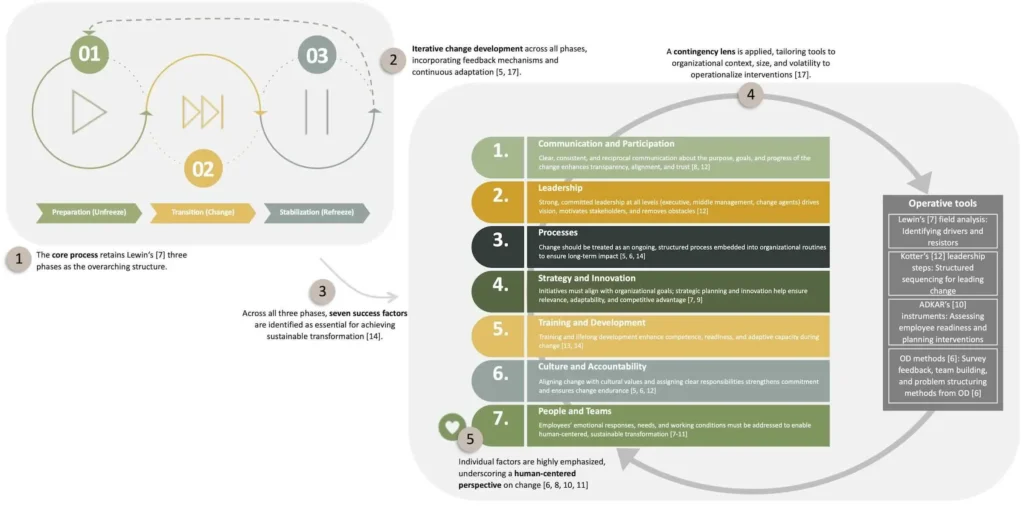

A suitable starting point for an integrated change management framework from an organizational psychology perspective remains Lewin’s [7] three-step model, which offers enduring processual clarity and has shaped many subsequent approaches [13, 14]. However, sustainable transformation demands more than processual clarity; it requires a focus on content-related drivers and interdependent success factors that enable effective change [16]. To that end, seven cross-cutting factors that recur across these models are integrated (see Fig. 2), namely communication and participation, leadership, processes, strategy and innovation, training and development, culture and accountability, and people and teams [14, 20].

In line with the OID model [9], these factors must be understood as interrelated system elements that both influence and are influenced by change. While all seven factors are considered relevant across all phases, their influence varies by phase, with certain factors gaining salience at specific stages without excluding others:

- In the preparation (unfreeze) phase, communication and participation, leadership, and people and teams are central to building urgency, trust, and psychological readiness;

- During the transition (change) phase, processes, strategy and innovation, and training and development take priority in order to operationalize change, align it with goals, and build adaptive capacity. Communication and participation, as well as leadership, remain essential for navigating uncertainty;

- In the stabilization (refreeze) phase, culture and accountability, along with people and teams, become critical for anchoring new behaviors and sustaining outcomes [13-17, 19, 20].

Given that emotional dynamics, participation, and cultural alignment are emphasized across all phases [see 14], the integrated framework adopts a human-centered perspective, recognizing that successful transformation ultimately depends on employees’ needs, perceptions, and engagement.

In addition to these structural success factors, Macedo et al. [14] advocate for a contingency-based approach, emphasizing that no ‘one-size-fits-all’ model can address the diversity of change contexts. Change must therefore be flexibly adapted to organizational factors such as size, culture, volatility, and stakeholder composition. This reinforces the idea that the integrated model should not replicate individual models in full but instead draw on their most effective tools, tailored to the organization’s specific challenges.

Moreover, building on principles from AR [5] and OD [6], and as emphasized by Mosadeghrad and Ansarian [17], change can be understood as an iterative process. Rather than assuming linear progression, the integrated model should also incorporate feedback loops, double-loop learning, and continuous adaptation as central elements. Accordingly, this proposal is not conceived as a prescriptive roadmap but as a multidimensional, human-centered, and context-sensitive framework with built-in iterative adjustment mechanisms (Fig. 2).

In summary, the integrated model combines processual, structural, and psychological dimensions. It leverages the strengths of established models while compensating for their limitations, such as Lewin’s [7] simplicity, Kotter’s [12] rigidity, or ADKAR’s [10] individual-level focus, offering a more comprehensive foundation for navigating complex change.

Exploring AI-specific gaps in the integrated change framework

To explore the applicability and limitations of the integrated change model in the context of AI system implementation, this section combines practical insights from a real-world use case at FraAlliance with theoretical perspectives from AI-specific change literature. In FraAlliance’s case, one key operational challenge concerns the handling of carry-on baggage during boarding. Passengers often bring items exceeding their fare allowances, causing delays, staff conflicts, and downstream disruptions in load distribution or departures.

To address this, an AI-based computer vision system is deployed to detect and classify carry-on items in real time as passengers enter defined boarding zones. The goal is to provide gate personnel with timely and reliable data to better handle oversized baggage and improve boarding efficiency and flight punctuality.

According to the integrated framework (see Fig. 2), implementation would require distinct support across all phases. In the preparation phase, leadership support from both airport and airline stakeholders, clear communication about the system’s purpose, and participatory involvement of gate staff are essential to foster readiness. During transition, inclusive feedback formats and AI training may promote competence and confidence among gate personnel. For stabilization, long-term mechanisms such as feedback loops between IT and operations or corresponding adjustments to onboarding can help anchor the new routines.

While initial pilot results appeared promising, several implementation challenges immediately became apparent. Technically, the system requires environments where baggage can be accurately linked to individual passengers and fare classes. Employees raised concerns about the accuracy of AI recommendations and the resulting impact on decision autonomy and role clarity. Ethical concerns were also voiced due to the system’s surveillance capabilities, and difficulties arose in aligning AI output with existing workflows.

These observations indicate that, despite its holistic structure, the integrated change model does not sufficiently address certain concerns. Issues such as autonomy loss, privacy, and system opacity go beyond the model’s scope [see 3]. Hence, while a holistic change framework is necessary, it is not sufficient for guiding AI-specific transformations. Dedicated refinements aligned with AI’s distinctive characteristics are required.

To clarify these AI-specific requirements, the following insights from AI-focused literature identify core challenges and their implications for change models:

- AI implementation is a never-ending journey. AI systems evolve through use and interaction, requiring ongoing recalibration, maintenance, and oversight [21-24]. Therefore, change frameworks must go beyond iterative logic to support agile, cyclical adaptation processes.

- AI systems reshape work by merging with human decision-making. Rather than merely supporting tasks, they influence judgements, roles, and authority, challenging perceived control, role clarity, and professional identity [21, 24]. Change strategies must explicitly address the dynamics of human-AI interaction and related challenges.

- AI systems create complex data governance demands. Their effectiveness depends on access to structured, high-quality data across systems and departments, raising concerns about ownership, privacy, bias, and standardization [24, 25]. Accordingly, change models must incorporate cross-functional coordination, ethical data governance, and quality control mechanisms.

- AI systems intensify resistance and uncertainty. Fears of deskilling, job loss, and loss of control are amplified. Trusting AI may require overriding personal intuition [23-25]. Thus, change efforts must reinforce psychological safety, promote trust calibration, and invest in broad-based competence building.

- AI systems amplify ethical, legal, and reputational risks. These risks, which concern fairness, transparency, regulation, and accountability, exceed those associated with traditional technologies [21, 23-25]. Ethical reflection and structured stakeholder engagement must therefore be embedded into change processes.

- Generative AI (GAI) introduces additional challenges. While GAI can support a variety of tasks, including in management, issues such as hallucinated content and opaque source generation complicate trust and governance [23]. Change models must differentiate between AI types and define safeguards specific to generative functionalities.

Taken together, these insights highlight a fundamental gap: While the integrated model provides a robust foundation, it lacks mechanisms to address the dynamic, relational, and ethically sensitive nature of AI systems. This gap underscores the need for a targeted extension of the framework.

Part II: Expert insights on AI-specific change requirements

To validate and address the AI-specific gaps identified above, semi-structured interviews were conducted with professionals involved in digital and AI-related change processes. The interviews focused on perceived barriers and success factors in implementing AI systems, with the present analysis restricted to open-ended questions about success (e.g., “What do you consider key success factors in implementing AI systems in organizations?”).

Ethics approval was granted by the local ethics committee (vote no. 997), and the study was conducted in accordance with the guidelines of the German Research Foundation [26]. All interview questions and the full procedure were preregistered and are accessible at https://doi.org/10.17605/OSF.IO/W6Y2U.

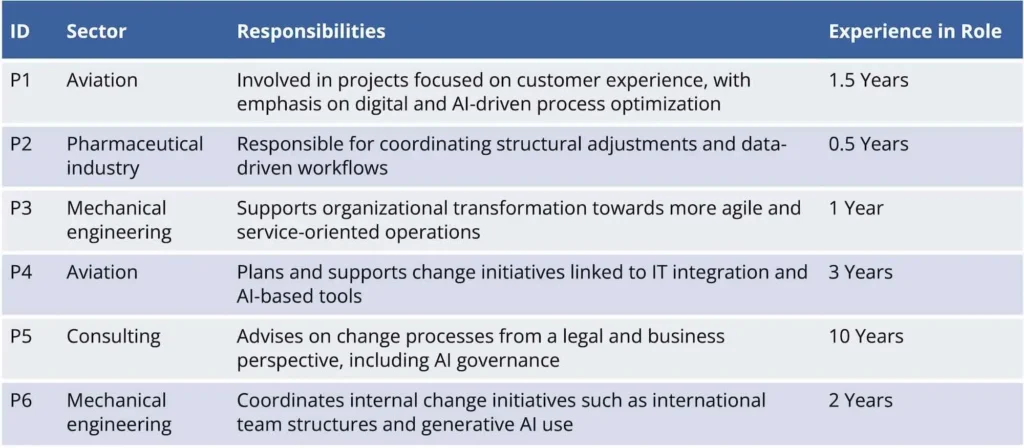

The sample consists of six interviews (out of eight planned), conducted since May 2025 across five organizations. The interviews lasted on average 31.8 minutes (SD = 4.2), with participants aged between 28 and 56. Figure 3 summarizes each participant’s sector, responsibilities, and experience.

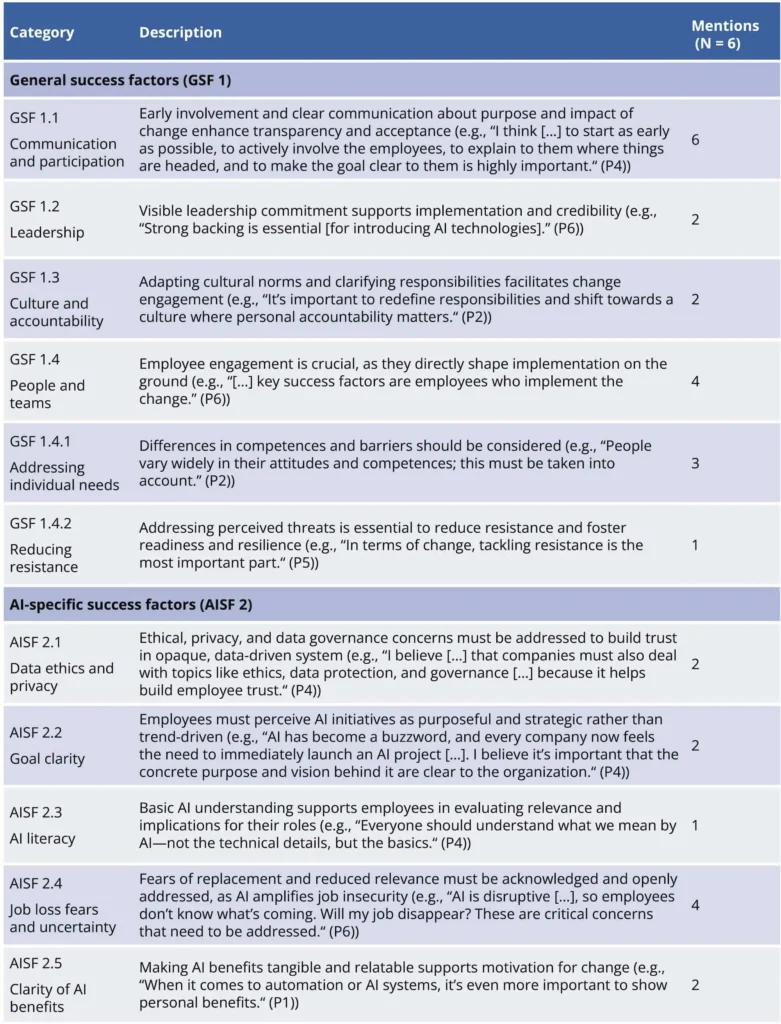

The data analysis followed a combined deductive and inductive approach using structured content analysis [27] to identify success factors in change processes. Due to overlaps between general and AI-specific success factors and varying levels of AI expertise among participants, the category system was structured around these two overarching categories. General success factors were derived deductively from the seven success factors in the integrated change model (see Fig. 2), but were also open to inductive additions. AI-specific success factors were identified purely inductively.

Findings on (AI-specific) success factors

Regarding general success factors, four of the seven factors from the integrated model were consistently mentioned by participants: communication and participation, leadership, culture and accountability, and people and teams (see Fig. 4). Within the latter, two inductive subcategories emerged that further specify individual-level requirements for successful change: addressing individual needs and reducing resistance. Taken together, the interviews provide preliminary support for, and also expand, the general success factors of the integrated change framework.

For AI-specific success factors, five categories were identified: data ethics and privacy, goal clarity, AI literacy, job loss fears and uncertainty, and clarity of AI benefits (see Fig. 4). These findings partly align with the AI-related transformation challenges described earlier, particularly in relation to intensified resistance and uncertainty and ethical, legal, and reputational risks.

However, several critical aspects identified in Part I, such as the ongoing nature of AI implementation, its integration into human decision-making, the complexity of data governance, and the specifics of GAI, were not mentioned by participants. This may reflect the nascent stage of practical AI deployment and underscores the importance of integrating the theoretical and empirical insights of this work into a targeted, AI-specific change model.

Synthesizing the findings in the Job Change Acceptance Toolbox (JOCAT)

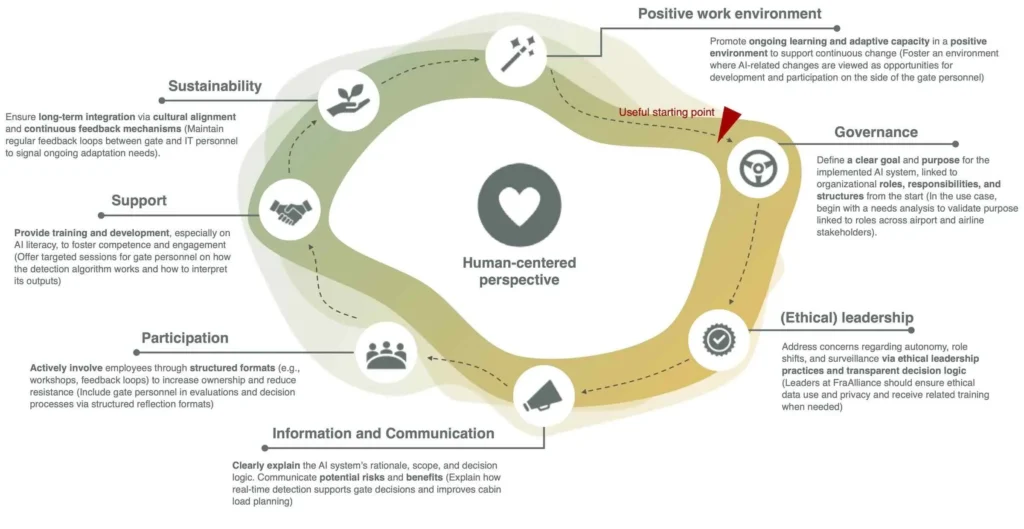

To address the central question of how change management approaches can be designed to meet the specific psychological, ethical, and structural demands of AI system implementation, findings from theory, case analysis, and interviews were synthesized into a dedicated change model embedded in the Job Change Acceptance Toolbox (JOCAT).

JOCAT builds on the integrated change framework (see Fig. 2), maintaining a human-centered perspective as its core principle, based on the understanding that sustainable transformation must address human needs, emotions, and meaning-making [6, 8, 10, 11]. As a foundation, the four general success factors confirmed both in the literature and in the expert interviews (communication and participation, leadership, culture and accountability, and people and teams) are retained but further refined to align with AI-specific challenges.

Instead of treating people and teams as a standalone factor, JOCAT embeds it across all components through its human-centered logic. It is reflected, for example, in the new success factor fostering a positive work environment, which anchors change within adaptive, trust-building structures and explicitly addresses psychological needs [3, 24].

Although communication and participation are conceptually intertwined, they are separated for practical implementation in the JOCAT [22]. The AI-specific success factor of AI benefit clarity, emphasized in the interviews, is thus integrated into communication. Additionally, communication should also highlight how AI influences human judgement and decision-making, helping employees make sense of new human-AI interactions and thereby respond to a critical AI-specific gap [21, 24].

The leadership factor is extended to become ethical leadership, emphasizing not only mobilization [12] but also ethical decision-making [21, 24]. This refinement reflects the amplified uncertainties and job fears raised in the interviews and literature [23-25], and helps foster responsible, transparent guidance.

The factor of culture and accountability is split to improve applicability. Cultural alignment is integrated into the new factor of sustainability, emphasizing the long-term anchoring of change in organizational values [13, 15, 22]. Accountability is merged into governance, which incorporates the clarification of data-related responsibilities (from the literature [24]) and of overall goals (from the interviews); these clarifications serve as key mechanisms across factors and as useful entry points into change processes.

Furthermore, AI literacy, identified as central in the interviews, is included under the factor of support. It expands traditional training efforts by preparing employees to understand AI systems, build competence, and develop trust [21, 25]. This also covers risks specific to generative AI [23], ensuring that employees can differentiate between system types and handle them in an informed way.

Moreover, as highlighted in the AI-related literature, the disruptive, evolving nature of AI systems demands change processes that go beyond linear or iterative logic [21-24]. JOCAT addresses this in two ways: (1) structurally, by promoting positive work environments where change is seen as ongoing and adaptive, and (2) procedurally, through the cyclical logic of the model, where the described success factors function as modular building blocks that can recur and evolve over time rather than following a fixed sequence.

Taken together, JOCAT offers a flexible, human-centered, and context-sensitive approach to managing AI-related transformation based on seven success factors. Figure 5 visualizes the model, with additional applications illustrated in italics based on the FraAlliance use case. JOCAT thereby bridges theoretical and empirical insights with AI-specific practice.

JOCAT as a starting point for AI-related change

In response to pressing challenges of AI implementation, the proposed framework within JOCAT offers a valuable starting point for managing AI-related change. It builds on classical models, recent literature, and expert insights. While preliminary, the findings highlight core success factors and provide transferable guidance for human-centered, ethically grounded transformation. Further refinement is needed to distinguish between AI system types and increase theoretical and empirical granularity.

This work is funded by the German Federal Ministry of Research, Technology and Space (BMFTR) as part of the HUMAINE Competence Center within the program “The Future of Value Creation—Research on Production, Services and Work”, supervised by the Project Management Agency Karlsruhe (PTKA) (funding code: 02L19C200).

Bibliography

[1] Dellermann, D.; Calma, A.; Lipusch, N.; Weber, T.; Weigel, S.; Ebel, P.: The future of human-AI collaboration: a taxonomy of design knowledge for hybrid intelligence systems.

[2] Berente, N.; Gu, B.; Recker, J.; Santhanam, R.: Managing artificial intelligence. In: MIS Quarterly 45 (2021) 3, pp. 1433-1450.

[3] Ulfert, A.-S.; Le Blanc, P.; González-Romá, V.; Grote, G.; Langer, M.: Are we ahead of the trend or just following? The role of work and organizational psychology in shaping emerging technologies at work. In: European Journal of Work and Organizational Psychology 33 (2024) 2, pp. 120-129.

[4] Ameye, N.; Bughin, J.; van Zeebroek, N.: How uncertainty shapes herding in the corporate use of artificial intelligence technology. In: Technovation 129 (2023), pp. 1-20.

[5] Coghlan, D.: Doing action research in your own organization. 4th Edition. California 2014.

[6] French, W. L.; Bell, C. H.; Zawacki, R. A.: Organization Development and Transformation: Managing Effective Change. 6th Edition. New York 2005.

[7] Lewin, K.: Group decision and social change. In: Readings in Social Psychology 3 (1947), pp. 459-473.

[8] Kübler-Ross, E.: On Death and Dying: What the Dying Have to Teach Doctors, Nurses, Clergy and Their Own Families. New York 1969.

[9] Leavitt, H. J.; Bahrami, H.: Managerial Psychology: Managing Behavior in Organizations. 5th Edition. Chicago 1988.

[10] Hiatt, J.: ADKAR: A Model for Change in Business, Government, and Our Community. 2006, pp. 43-62.

[11] Bridges, W.: Managing Transitions. Massachusetts 1991.

[12] Kotter, J. P.: Leading Change. Massachusetts 1996.

[13] Rosenbaum, D.; More, E.; Steane, P.: Planned organisational change management: Forward to the past? An exploratory literature review. In: Journal of Organizational Change Management 31 (2018) 2, pp. 286-303.

[14] Macedo, M. I.; Ferreira, F. A.; Dabić, M.; Ferreira, N. C.: Structuring and analyzing initiatives that facilitate organizational transformation processes: A sociotechnical approach. In: Technological Forecasting and Social Change 209 (2024) 3.

[15] Brisson‐Banks, C. V.: Managing change and transitions: A comparison of different models and their commonalities. In: Library Management 31 (2010) 4/5, pp. 241-252.

[16] Galli, B. J.: Comparison of Change Management Models: Similarities, Differences, and Which is Most Effective? In: Daim, T.; Dabić, M.; Başoğlu, N.; Lavoie, J.R.; Galli, B.J. (eds.): R&D Management in the Knowledge Era. Innovation, Technology, and Knowledge Management. 2019.

[17] Mosadeghrad, A. M.; Ansarian, M.: Why do organisational change programmes fail? In: International Journal of Strategic Change Management 5 (2014) 3, pp. 189-218.

[18] Birkstedt, T.; Minkkinen, M.; Tandon, A.; Mäntymäki, M.: AI governance: themes, knowledge gaps and future research agendas. In: Internet Research 33 (2023) 7, pp. 133-167.

[19] Rousseau, D. M.; ten Have, S.: Evidence-based change management. In: Organizational Dynamics 51 (2022) 3.

[20] Stouten, J.; Rousseau, D. M.; De Cremer, D.: Successful Organizational Change: Integrating the Management Practice and Scholarly Literatures.

[21] Ångström, R. C.; Björn, M.; Dahlander, L.; Mähring, M.; Wallin, M. W.: Getting AI implementation right: Insights from a global survey. In: California Management Review 66 (2023) 1, pp. 5-22.

[22] Smith, T. G.; Norasi, H.; Herbst, K. M.; Kendrick, M. L.; Curry, T. B. et al.: Creating a practical transformational change management model for novel artificial intelligence–enabled technology implementation in the operating room. In: Mayo Clinic Proceedings: Innovations, Quality & Outcomes 6 (2022) 6, pp. 584-596.

[23] Kanitz, R.; Gonzalez, K.; Briker, R.; Straatmann, T.: Augmenting organizational change and strategy activities: Leveraging generative artificial intelligence. In: The Journal of Applied Behavioral Science 59 (2023) 3, pp. 345-363.

[24] Lee, M. C.; Scheepers, H.; Lui, A. K.; Ngai, E. W.: The implementation of artificial intelligence in organizations: A systematic literature review. In: Information & Management 60 (2023) 5, pp. 1-19.

[25] Merhi, M. I.: An evaluation of the critical success factors impacting artificial intelligence implementation. In: International Journal of Information Management 69 (2023), pp. 1-12.

[26] Schönbrodt, F.; Gollwitzer, M.; Abele-Brehm, A.: Der Umgang mit Forschungsdaten im Fach Psychologie: Konkretisierung der DFG-Leitlinien. In: Psychologische Rundschau 68 (2017) 1, pp. 20-35.

[27] Kuckartz, U.: Qualitative Inhaltsanalyse. Methoden, Praxis, Computerunterstützung. 4th Edition. Weinheim/Basel 2018.