Ethical AI in the Workplace Through Value-Based Labels?

Lessons learned from applying the VCIO framework to an AI-based assistant

Journal: Industry 4.0 Science

Issue: Volume 42, Edition 1, Pages 30-38

Open Access: https://doi.org/10.30844/I4SE.26.1.30


Trust in AI systems

In companies, employees in areas such as healthcare, finance, law enforcement, and strategy development increasingly rely on AI systems for decision-making [1]. This also applies to ethical decisions [2]. It is therefore essential that decision-makers in companies are able to validly and accurately assess the trustworthiness of an AI-based recommendation and, if necessary, question it critically. Accordingly, there is growing interest in how trustworthy current AI systems are and to what extent humans are able to critically evaluate AI-generated recommendations [3].

So far, users seem to find it difficult to trust AI systems to a reasonable degree [4]. While too little trust in AI-generated outputs inspires negative attitudes and discourages adoption, blind faith often results in uncritical acceptance of AI misjudgment [5]. Appropriate trust calibration as required, for example, by the EU AI Act [6] is difficult to achieve given the sheer complexity, and sometimes opaque functioning, of modern technologies [5].

Trustworthy AI design is a pivotal feature of numerous ethical guidelines and principles. To promote an appropriate assessment of the trustworthiness of AI systems, various organizations, including the EU, have developed “ethical guidelines for trustworthy AI” [6]. However, such guidelines often prove too abstract for companies [7], failing to clarify how organizations can implement them in practice [8]. In this context, so-called labels or “certification marks” play an important role in transparently communicating the trustworthiness of a system to users [9].

AI labels as a means of establishing appropriate trust?

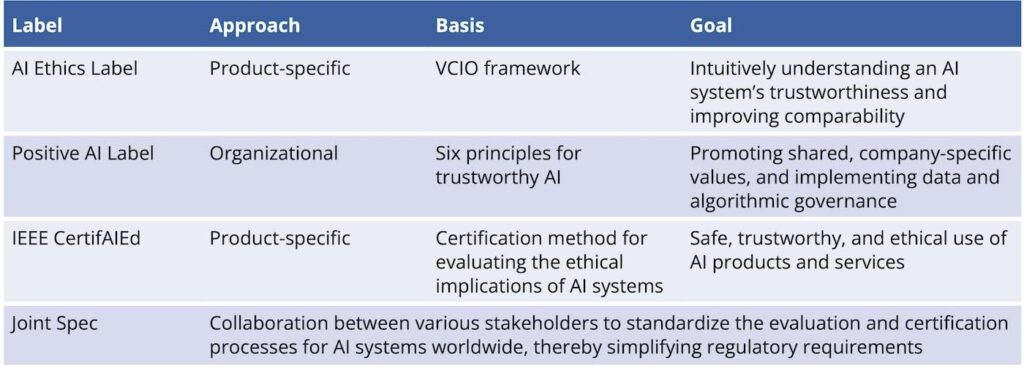

Against this backdrop, AI labels can serve as a tool to attest whether an AI product complies with ethical principles and values, allowing users to compare different AI products and promoting trust in AI systems at the same time [8]. In recent years, various approaches to AI labels and certifications have emerged.

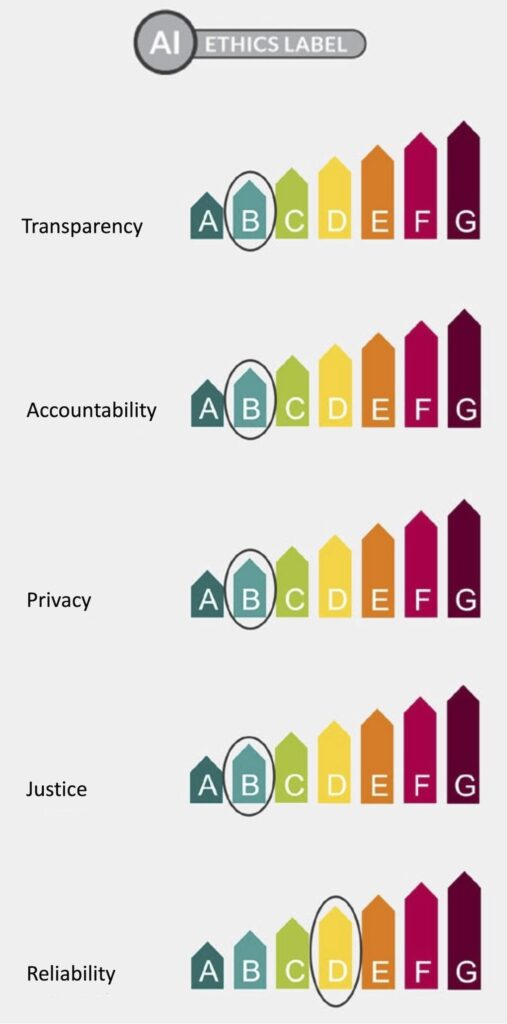

The AI Ethics Impact Group, for instance, developed a label modeled on the energy efficiency label, which specifically takes into account and represents relevant ethical values [8]. The label is based on the VCIO framework (Values, Criteria, Indicators, Observables), which aims to make ethical principles practical, comparable, and measurable. To this end, values are translated into relevant criteria, indicators, and observables. This translation enables developers to independently evaluate the extent to which the values are implemented.
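The four-level structure described above can be pictured as a simple nested data model. The following is a minimal sketch, not the specification's own schema: the class names, the fields, and the example criterion, indicator, and observable texts are hypothetical and serve only to illustrate how a value is broken down step by step into something that can be checked.

```python
from dataclasses import dataclass, field


@dataclass
class Observable:
    # A concrete, checkable statement about the system (hypothetical wording).
    description: str


@dataclass
class Indicator:
    name: str
    observables: list[Observable] = field(default_factory=list)


@dataclass
class Criterion:
    name: str
    indicators: list[Indicator] = field(default_factory=list)


@dataclass
class Value:
    name: str
    criteria: list[Criterion] = field(default_factory=list)


def count_observables(value: Value) -> int:
    """Count all observables reachable from a value via its criteria and indicators."""
    return sum(len(ind.observables) for crit in value.criteria for ind in crit.indicators)


# Hypothetical example content; only the value name "transparency" comes from the label.
transparency = Value(
    name="transparency",
    criteria=[
        Criterion(
            name="documentation of the data basis",
            indicators=[
                Indicator(
                    name="data origin is documented",
                    observables=[
                        Observable("origin documented for all data sources"),
                        Observable("origin documented for some data sources"),
                    ],
                )
            ],
        )
    ],
)

print(count_observables(transparency))  # 2
```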

The degree of fulfillment of specified indicators must be rated on a scale from A to G. Following classification, individual indicators can be aggregated to calculate the degree to which the higher-level criteria and the values are taken into account. On this scale, the rating “A” represents the complete implementation of a value in the system. The aim of the label is to convey the trustworthiness of AI systems quickly and intuitively and to increase the comparability of AI systems [10].

In addition to the AI Ethics Impact Group, other players have developed labeling and certification approaches to promote ethical AI. For instance, the Positive AI Label employs a more organization-focused approach based on six principles of responsible AI and assesses companies in terms of their maturity and ethical development potential. Furthermore, the label assists organizations in implementing data and algorithm governance, and it promotes a culture based on shared values within companies [11]. In contrast to this organizational approach, the IEEE CertifAIEd label from the IEEE Standards Association (IEEE SA) focuses on the certification of specific AI systems.

IEEE CertifAIEd is a product certification program that aims to establish standards for certification processes that promote certain values in systems. In this context, a certification methodology for assessing the ethical impact of AI systems was developed and introduced. The goal is to ensure the safe, trustworthy, and ethical use of AI products or services. Strict criteria for the evaluation and certification of systems with regard to ethical risks were thus established [12]. An overview of these labels can be found in Figure 1.

Due to the wide variety of labels and certifications in the field of AI ethics, there is currently no uniform standard enabling users to reliably assess AI trustworthiness. Therefore, VDE, IEEE, Positive AI, and IRT SystemX (coordinator of Confiance.ai) have collaborated to establish a unified specification to standardize and optimize AI evaluation worldwide. This joint specification is still under development, but it will combine the strengths of IEEE CertifAIEd, VDE SPEC 90012 (VDE publication of the AI Ethics Label), and the Positive AI Framework to facilitate compliance with global regulatory requirements and framework conditions [13].

Due to their static design, most AI labels exhibit systematic weaknesses. Originally intended as a differentiating tool, they lose significance over time, either when technological progress enables all products or systems to achieve the highest rating or when overly conservative criteria and a lack of reference systems render the highest ratings unattainable, fostering an intuitively negative perception of the systems. Especially in the fast-moving field of AI, this can lead to misinterpretations and a constant need for adjustment. In addition, an increasing number of labels are difficult to understand and use in practice [15].

Thus, it is clear that AI labels such as the AI Ethics Label offer potential for promoting the trustworthiness of AI, but their effectiveness depends on other factors as well, such as benchmarks and background knowledge for adequate interpretation by recipients. Overall, dynamic and comprehensible assessments and a targeted introduction to the meaning of the underlying values are key prerequisites for avoiding misunderstandings and ensuring the practical relevance of such labels.

AI-based decision support in public transport as a use case

In order to realistically assess the suitability of a label, it is essential to test it in a specific use case. This article therefore presents lessons learned from the specific application of the AI Ethics Label described above to an AI-based decision support system to be used in public transport control centers [16].

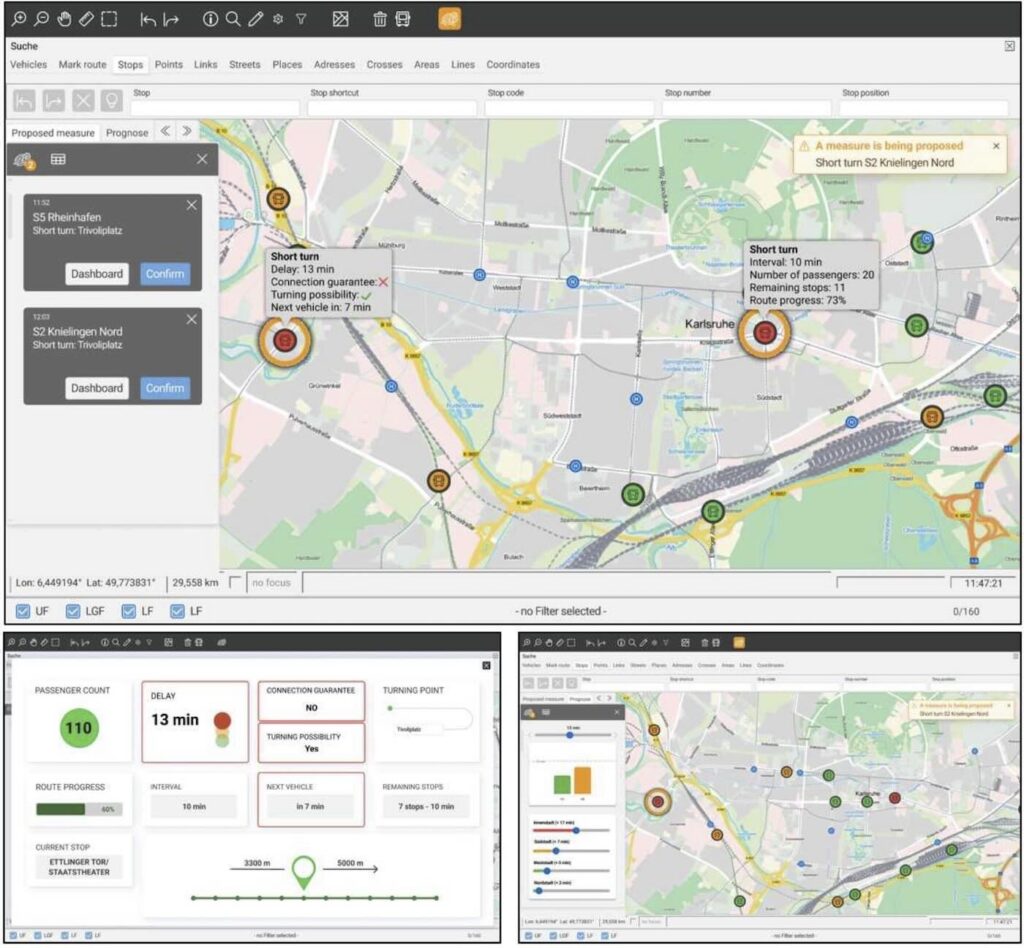

In the control center of a transport company, dispatchers monitor buses and trains using control center software, known as the Intermodal Traffic Control System (ITCS), and thus monitor the situation throughout the entire public transport system of the city or region. If disruptions occur (e.g., due to an accident), tracks are blocked, or vehicles are delayed, dispatchers intervene to minimize the impact on passenger service.

To do this, dispatchers trigger so-called dispatching measures in the control center software. These take the form of diversions, U-turns, or smaller measures such as waiting for a delayed vehicle to allow passengers to change to another line. In the event of a disruption, dispatchers must react quickly and simultaneously take many factors into account. This causes stress and requires a wealth of experience.

An AI-supported suggestion assistant was developed based on historical operational data from the company. This system is designed to help dispatchers make difficult decisions about dispatching measures calmly and carefully. The AI is trained to consider a variety of relevant factors and then suggest dispatching measures that are precisely tailored to the situation at hand. In addition, the system is to be linked to the control center software in such a way that the dispatching measures are triggered automatically when the dispatchers accept the AI proposal.

The assistant therefore aims to relieve dispatchers while leaving them in full control of the processes. Through their collaboration with the interdisciplinary project team, dispatchers are aware that placing unlimited trust in AI-based recommendations is inappropriate, especially since the AI does not have access to certain information, such as regional events or driver schedules. However, the dispatchers had not yet been specifically trained to interact with AI systems. Due to the obligation to develop and demonstrate AI competencies, which is now enshrined in law under Article 4 of the EU AI Act, a greater understanding of how to effectively use such systems can be expected in the future.

In terms of ethical AI and human-centered AI design, this use case is exciting because of the many groups of people indirectly affected and the interference in decision-making processes in the workplace. In discussions on requirements analysis, some dispatchers expressed the assumption that AI cannot adequately reflect their intuitive experiential knowledge. Despite retaining decision-making authority, dispatchers sometimes perceive AI support as a devaluation of their own expertise. Furthermore, experiential knowledge may be lost due to excessive dependence on the AI system, which in turn would erode the knowledge and experience base in the long term.

In addition to dispatchers, AI-based diversion recommendations also indirectly affect public transport drivers, passengers, and ultimately the entire local population that participates in road traffic. This inevitably pushes participatory approaches to technology design to their limits and raises questions of fairness when competing interests are weighed against each other. For example, there is a risk that the system will prioritize lines and regions for diversions without these decisions being perceived as fair and comprehensible by either drivers or passengers. Further information on this can be found in [16] and [17].

Lessons learned from applying the AI Ethics Label to the use case

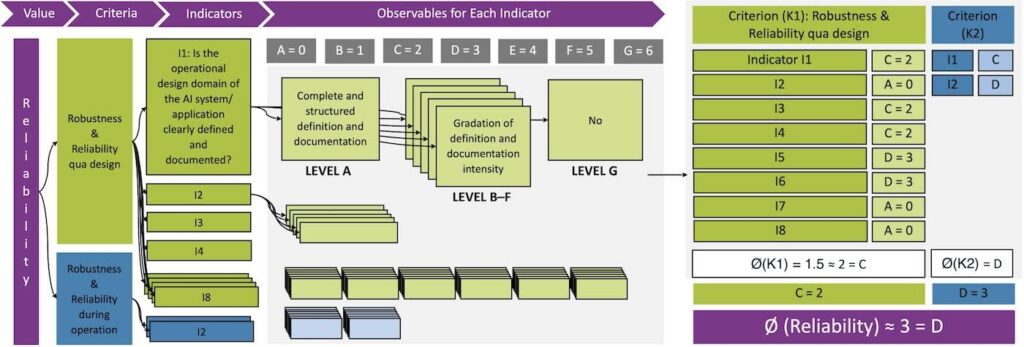

The basis for creating the AI Ethics Label for the described use case is provided by the VDE SPEC 90012 V1.0 specification from 2022. This specification considers the five values of transparency, accountability, privacy, fairness/equity, and reliability, and translates them into relevant criteria, indicators, and associated metrics within the VCIO framework.

In a joint discussion process, representatives from the AI solution development team and researchers from Karlsruhe University of Applied Sciences determined the respective ratings for the metrics using the specified compliance scale from A (fully compliant) to G (non-compliant). The evaluated system was still in the prototype stage. The expected system status at the targeted transition to productive operation served as the basis for the evaluation. Evaluations of individual metrics were then aggregated at the value level and the label created accordingly.

During application, it became apparent that the framework follows a distinctly conservative assessment approach. This proved particularly problematic when the measures queried did not appear relevant to the application context under investigation and were therefore not implemented. For example, the “fairness” criterion evaluates measures against biased data that could be harmful to people.

However, the present system does not contain any personal data, only relevant data on the rail network, such as route plans and traffic volumes, which are of crucial importance for the planning work of dispatchers. Contrary to previous considerations, a conscious decision was made not to include qualitative descriptions of individual events such as accidents, which may contain personal data such as vehicle registration numbers or similar.

Accordingly, there can be no personal bias in the data and there was thus no need to take specific measures to prevent it. In these cases, the lack of relevance led to a rating of “G,” which diminished the overall result, especially since the degree of fulfillment of the criteria is based on the average of the metrics with decimal places rounded up. For example, the “reliability” criterion had to be rated “D” (=3) because the two underlying indicators had received ratings of “C” (=2) and “D” (=3), giving an average of 2.5 that is rounded up to 3. The framework also allows for an approach based on the “minimum requirement” principle, which additionally accounts for the risks embedded in the application context. However, this was not applied here.
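The aggregation rule described here can be reproduced in a few lines. The sketch below assumes the numeric encoding implied by the text (“C” = 2 and “D” = 3, hence A = 0 through G = 6) and an unweighted average; the function names are hypothetical and the code is an illustration, not the calculation tool used in the project.

```python
# Numeric encoding implied by the text: "C" (=2), "D" (=3), hence A=0 ... G=6.
GRADES = "ABCDEFG"


def aggregate(grades: list[str]) -> str:
    """Average the numeric scores and round any fractional part up (towards the worse grade)."""
    if not grades:
        raise ValueError("at least one rating is required")
    total = sum(GRADES.index(g) for g in grades)
    # Integer ceiling division avoids floating-point rounding surprises.
    rounded_up = -(-total // len(grades))
    return GRADES[rounded_up]


# The example from the text: indicators rated C and D average 2.5, rounded up to 3.
print(aggregate(["C", "D"]))       # D

# A single "G" for a metric that is not relevant pulls two top ratings down to C.
print(aggregate(["A", "A", "G"]))  # C
```

The second call illustrates why metrics that are rated “G” for lack of relevance weigh so heavily: two fully compliant indicators combined with one such “G” average 2.0 and end up as “C”.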

This specific application also shows that developing generic labels for AI systems is challenging. The framework’s evaluation logic left little room for the technical and organizational application context, meaning that the surrounding system landscape could not be adequately analyzed. In addition, it would be advisable to adjust the weighting of indicators to avoid equating metrics on data origin and security with those on development documentation. Fundamentally, the framework placed greater emphasis on the availability of decision documentation for data and algorithms than on actual value implementation.

This lack of context sensitivity forces the evaluation of indicators even if they are only moderately relevant to the application context. Furthermore, the individual conducting the evaluation needs to be suitably qualified according to the VCIO framework, as different qualifications can lead to differing interpretations and evaluations. Developers, for instance, might arrive at a different label than other employees in a company. Ultimately, it remains unclear who should ideally perform such an evaluation and what qualifications are necessary for this.

The conservative assessment approach and the limited context sensitivity of the framework raise questions regarding its acceptance and strategic usability. For instance, a negative label rating may result from assessment mechanisms that are not sufficiently context-sensitive, documentation requirements that have been treated negligently, or simply mathematically unfavorable rounding effects at the various aggregation levels.
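How rounding can compound across aggregation levels is easiest to see with a small calculation. The sketch below assumes the encoding A = 0 through G = 6 and the rule of averaging with fractions rounded up, applied first at the criterion level and then at the value level. The ratings are invented for illustration, and the single-rounding variant is a hypothetical comparison, not part of the framework.

```python
from fractions import Fraction
from math import ceil

GRADES = "ABCDEFG"  # assumed encoding: A=0 ... G=6


def mean_score(grades: list[str]) -> Fraction:
    """Exact average of the numeric scores of a list of letter grades."""
    return Fraction(sum(GRADES.index(g) for g in grades), len(grades))


def value_grade_stepwise(criteria: list[list[str]]) -> str:
    """Round up at the criterion level, then round up again at the value level."""
    criterion_scores = [ceil(mean_score(indicators)) for indicators in criteria]
    return GRADES[ceil(Fraction(sum(criterion_scores), len(criterion_scores)))]


def value_grade_single_rounding(criteria: list[list[str]]) -> str:
    """Keep exact criterion averages and round up only once, at the value level."""
    criterion_means = [mean_score(indicators) for indicators in criteria]
    return GRADES[ceil(sum(criterion_means) / len(criterion_means))]


# Invented ratings: one value with two criteria, each with two indicators.
criteria = [["A", "B"], ["B", "C"]]

print(value_grade_stepwise(criteria))         # C
print(value_grade_single_rounding(criteria))  # B
```

With identical indicator ratings, rounding at every level yields “C”, whereas rounding once yields “B”, which is the kind of purely arithmetic effect referred to above.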

It is also questionable to what extent developers are willing to assess their own system so honestly and critically that the overall result may be a negative label. This carries the risk of misuse. Developers who strive to incorporate ethical aspects into their development could be tempted to inflate their ratings if they perceive the resulting label as unduly negative.

Similarly, organizations that do not attach any importance to ethical system development could use self-assessment to positively differentiate themselves from competitors in the market (so-called ethics washing).

Given the heterogeneity of industries, these challenges could be addressed in the future by developing configurable reference models that allow for context- and application-specific weighting of indicators without compromising on comparability. In order to further counteract the existing potential for abuse and enable accurate interpretations, clear regulations and roles for the label awarding process should be defined. In addition, the calculation steps should be presented transparently and benchmarks should be established.
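One way to picture such a configurable reference model is a weighted average in which a context profile assigns each indicator a weight, with a weight of zero for indicators that do not apply. The following sketch is purely illustrative and not part of VDE SPEC 90012: the indicator names, the weights, and the function `weighted_grade` are hypothetical, and the encoding A = 0 through G = 6 with fractions rounded up is assumed.

```python
from fractions import Fraction
from math import ceil

GRADES = "ABCDEFG"  # assumed encoding: A=0 ... G=6


def weighted_grade(ratings: dict[str, str], weights: dict[str, int]) -> str:
    """Weighted average of indicator scores, with fractions rounded up to the worse grade."""
    total_weight = sum(weights[name] for name in ratings)
    if total_weight <= 0:
        raise ValueError("at least one indicator must carry a positive weight")
    weighted_sum = sum(GRADES.index(grade) * weights[name] for name, grade in ratings.items())
    return GRADES[ceil(Fraction(weighted_sum, total_weight))]


# Hypothetical indicator ratings for a system without personal data.
ratings = {
    "data_origin": "B",
    "development_documentation": "D",
    "personal_bias_mitigation": "G",  # rated G only because it is not relevant
}

# Uniform weights correspond to the plain average.
uniform = {"data_origin": 1, "development_documentation": 1, "personal_bias_mitigation": 1}

# Hypothetical context profile: data origin counts double, the irrelevant indicator is excluded.
profile = {"data_origin": 2, "development_documentation": 1, "personal_bias_mitigation": 0}

print(weighted_grade(ratings, uniform))  # E
print(weighted_grade(ratings, profile))  # C
```

For results to remain comparable, such profiles would have to be published alongside the label, which is in line with the call for transparent calculation steps.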

A requirement for external auditing or review could be an effective means of reducing (conscious or unconscious) bias due to the evaluators’ self-interest. Such mechanisms should be taken into account in ongoing standardization measures such as the Joint Specification in order to make labels practicable and trustworthy in the long term.

Conclusion

AI Ethics Labels based on the VCIO framework represent a promising approach to identifying ethical aspects and making them transparent in the development and evaluation of AI systems. The application to a specific use case not only increased sensitivity to relevant ethical aspects but also revealed both specific and general challenges. Taken together, the conservative evaluation approach and a lack of context sensitivity may entail misuse and misinterpretation, making it difficult for users to classify the evaluation appropriately.

To realize the label’s full potential, three things are indispensable: a dynamic assessment model with clear framework conditions and transparent calculations, benchmarks for comparability, and, if necessary, accountability. Context-specific adaptation options could ultimately lead to the label being not only trustworthy and comparable, but also practical.

This publication is part of the project “Kompetenzzentrum KARL – Künstliche Intelligenz für Arbeit und Lernen in der Region Karlsruhe” (KARL Competence Center – Artificial Intelligence for Work and Learning in the Karlsruhe Region). The project is funded by the German Federal Ministry of Research, Technology and Space (BMFTR) as part of the program “Future of Value Creation – Research on Production, Services, and Work” (funding code: 02L19C250).

Bibliography

[1] Csaszar, F. A.; Ketkar, H.; Kim, H.: Artificial Intelligence and Strategic Decision-Making: Evidence from Entrepreneurs and Investors. In: Strategy Science 9 (2024) 4, pp. 322–345. DOI: https://doi.org/10.1287/stsc.2024.0190.

[2] Poszler, F.; Lange, B.: The impact of intelligent decision-support systems on humans’ ethical decision-making: A systematic literature review and an integrated framework. In: Technological Forecasting and Social Change 9 (2024), pp. 123403. DOI: https://doi.org/10.1016/j.techfore.2024.123403.

[3] Thorne, S.: Understanding the Interplay Between Trust, Reliability, and Human Factors in the Age of Generative AI. In: International journal of simulation: systems, science & technology (2024). DOI: https://doi.org/10.5013/IJSSST.a.25.01.10.

[4] Lyons, J. B.; Guznov, S. Y.: Individual differences in human–machine trust: A multi-study look at the perfect automation schema. In: Theoretical Issues in Ergonomics Science 20 (2019) 4, pp. 440–458. DOI: https://doi.org/10.1080/1463922X.2018.1491071.

[5] Lee, J. D.; See, K. A.: Trust in automation: designing for appropriate reliance. In: Human factors 46 (2004) 1, pp. 50–80. DOI: https://doi.org/10.1518/hfes.46.1.50_30392.

[6] European Commission: Ethikleitlinien für vertrauenswürdige KI. URL: https://digital-strategy.ec.europa.eu/de/library/ethics-guidelines-trustworthy-ai, accessed 16 April 2025.

[7] Spiekermann, S.: Value-based Engineering: Prinzipien und Motivation für bessere IT-Systeme. In: Informatik Spektrum 44 (2021) 4, pp. 247–256. DOI: https://doi.org/10.1007/s00287-021-01378-4.

[8] AI Ethics Impact Group: From Principles to Practice: An interdisciplinary framework to operationalise AI ethics. URL: https://www.bertelsmann-stiftung.de/fileadmin/files/BSt/Publikationen/GrauePublikationen/WKIO_2020_final.pdf, accessed 20 June 2025.

[9] Scharowski, N.; Benk, M.; Kühne, S. J.; Wettstein, L.; Brühlmann, F.: Certification Labels for Trustworthy AI: Insights From an Empirical Mixed-Method Study. In: Proceedings of the 2023 ACM Conference on Fairness, Accountability, and Transparency (2023), pp. 248–260. DOI: https://doi.org/10.1145/3593013.3593994.

[10] VDE: VCIO based description of systems for AI trustworthiness characterisation: VDE SPEC 90012 V1.0 (en). URL: https://www.vde.com/resource/blob/2337818/a24b13db01773747e6b7bba4ce20ea60/vcio-based-description-of-systems-for-ai-trustworthiness-characterisationvde-spec-90012-v1-0–en–data.pdf, accessed 20 June 2025.

[11] Positive AI: The Positive AI Label. URL: https://positive.ai/the-ai-label, accessed 20 June 2025.

[12] Jedlickova, A.: Ensuring Ethical Standards in the Development of Autonomous and Intelligent Systems. In: IEEE Trans. Artif. Intell. 5 (2024) 12, pp. 5863–5872. DOI: https://doi.org/10.1109/TAI.2024.3387403.

[13] Business Wire – A Berkshire Hathaway Company: IEEE Standards Association kündigt Joint Specification V1.0 für die Bewertung der Vertrauenswürdigkeit von KI-Systemen an. URL: https://www.businesswire.com/news/home/20241121943713/de, accessed 20 June 2025.

[14] Bakirtzis, G.; Tubella, A. A.; Theodorou, A.; Danks, D.; Topcu, U.: Navigating the sociotechnical labyrinth: dynamic certification for responsible embodied AI. In: Bi-directionality in Human-AI Collaborative Systems (2025), pp. 333–348. DOI: https://doi.org/10.1016/B978-0-44-340553-2.00019-8.

[15] Harbaugh, R.; Maxwell, J. W.; Roussillon, B.: Label Confusion: The Groucho Effect of Uncertain Standards. In: Management Science 57 (2011) 9, pp. 1512–1527. DOI: https://doi.org/10.1287/mnsc.1110.1412.

[16] Kopp, T.; Weitemeyer, R.; Beyer, J.; Ziegler, D.; Hess, R.: Künstliche Intelligenz zur Entscheidungsunterstützung in Leitstellen des Personennahverkehrs – Technische und sozio-technische Herausforderungen. In: HMD 60 (2023) 6, pp. 1156–1173. DOI: https://doi.org/10.1365/s40702-023-00996-8.

[17] Kinkel, S.; Kopp, T.: Menschenzentrierte und transparente KI-Lösungen gestalten und einführen – Erkenntnisse aus dem Kompetenzzentrum KARL. In: GFA – Gesellschaft für Arbeitswissenschaft (eds): Arbeitswissenschaft in-the-loop. Mensch-Technologie-Integration und ihre Auswirkung auf Mensch, Arbeit und Arbeitsgestaltung. 70. Arbeitswissenschaftlicher Kongress. Stuttgart, 6.–8. März 2024. Sankt Augustin: GfA-Press 2024.
