Adapting AI Work Systems for Human-Centeredness

A methodical approach for exploring the design space in transdisciplinary teams

| Journal | Industry 4.0 Science |

| Issue | Volume 42, Edition 1, Pages 44-53 |

| Open Access | https://doi.org/10.30844/I4SE.26.1.136 |


Why adaptations matter when it comes to human-centered AI

Human-centered artificial intelligence (HCAI) is considered relevant across a broad spectrum of industries and work contexts, e.g., in architecture, engineering, and construction [1, 2], healthcare [3, 4], farming [5], insurance, and finance [2, 6]. Some contexts require HCAI even more urgently than others, for example those related to high-stakes decision-making and life-critical systems: criminal justice and public policy [7], medical imaging and diagnosis [4], and aviation [8]. When failure can result in death, serious injury, or other irreversible consequences, human oversight and control mechanisms become essential.

But there is more to it than the demand for oversight and control: While AI systems have been proven to outperform humans in terms of speed and consistency in repetitive tasks such as specific pattern recognition [4], they cannot replicate human moral agency, ethical judgement, contextual understanding, and common-sense reasoning [9]. For these contexts, balancing human control and automation is therefore a key challenge in designing HCAI [7, 8].

While there is a broad consensus that HCAI refers to a design of human-AI systems that emphasize human needs, values, and capabilities, the term remains ambiguous [2, 10]. Instead of a universal definition, researchers and practitioners alike often use principles and key criteria such as fairness, transparency, and human agency to either guide the design of HCAI systems or determine the occurrence of HCAIs [10]. One principle of HCAI mentioned less often but of utmost importance for balancing human control and automation is adaptability [1, 8]. In the context of HCAI, adaptability describes a system’s capacity to be adjusted to changing contexts and human needs [1].

Empirical research underscores the general value of flexible automation approaches. For example, Onnasch et al. (2014) show that increasing automation levels may have negative consequences such as unlearning effects, automation bias, or overconfidence [11], which can be mitigated by adaptable approaches [12].

Empirical studies in the field of air traffic control show that adaptable systems increase satisfaction and reinforce positive role perceptions without impairing performance or workload [12]. Adaptation thus appears to be a crucial design property for the implementation of HCAI systems in high-stakes decision-making and life-critical systems. However, while various design principles, methods, and tools already support adaptation in areas such as UX design [13], computer science [14], and safety and resilience engineering [15], there is a lack of general, large-scale approaches for contextual and configurative socio-technical systems [16, 17].

To address this gap, we follow a design science research approach [18] to develop and demonstrate a method that supports transdisciplinary teams in systematically exploring the design space for adaptations in HCAI work systems. First, we expound on how adaptations are relevant in practice by drawing on real-world work contexts studied in a transdisciplinary research project, and derive development objectives (problem identification). Then, we develop a prototype by building on existing design knowledge (conceptualization). Finally, we demonstrate the application of the method using an example from practice (demonstration and evaluation).

Problem identification: Exploring design spaces for adaptations in complex socio-technical systems with transdisciplinary teams

The transdisciplinary HUMAINE research project investigated the practical implementation of HCAI principles in various high-stakes decision-making and life-critical systems applications, e.g., lesion detection in 3D magnetic resonance imaging [19]. Another example is radiographic non-destructive testing (NDT) of welds. Welding, the joining of metallic structures with heat, is an important fabrication process in numerous industries.

In safety-critical applications, e.g., airplane fuselages and pipes for the petro-chemical industry, the safety and integrity of welds and thus recurring rigorous testing are paramount given the high costs associated with failure [20]. NDT allows detecting flaws, defects, and deviations from a norm without damaging material, components, or systems using technologies such as ultrasound or radiography. Performing NDT usually requires error-free performance under time pressure. To meet these requirements, human inspectors can be aided by modern software solutions with integrated AI-based automatic defect recognition (ADR) [21, 22].

The software typically performs each inspection first, before a human (who, after all, is responsible for the overall testing result) checks the AI-generated detections, adjusts them if necessary, and gives their approval. While this approach is intended to enhance the overall inspection performance, risks can arise from human bias and the shifting role of the inspector. If ADR performs well, the human inspector may overtrust the AI-generated results, become less attentive, and consequently miss incorrect detections or undetected defects. This could ultimately lead to an increasing error rate. Furthermore, being relegated to the role of a mere verifier of AI-generated results could decrease employee well-being and work satisfaction.

We assumed that a workflow in which humans detect first and AI acts as a second pair of eyes might produce better inspection results in terms of error rates and be psychologically beneficial. Our transdisciplinary team, consisting of scholars from psychology, engineering, and computer science as well as NDT practitioners and software developers, put this assumption to the test by comparing different workflow sequences in an experimental study [21]. Findings showed that the traditional sequence, in which AI precedes the human inspector, achieves the best balance between performance and psychological outcomes.

However, realizing what even minor modifications can do to the overall system, we asked ourselves about further opportunities for adaptation and encountered different challenges rooted in the nature of HCAI systems. We realized that, due to our different scientific and professional backgrounds, we did not have a shared understanding of the system we were studying, its elements, and the interdependencies between them.

Furthermore, it was unclear how adaptation mechanisms known only from narrower research focuses could be transferred to the broader focus of HCAI systems and be linked to their different types of outcomes. Ultimately, the question was how possible adaptations within an HCAI system could be identified in a structured way. To address these challenges, we developed a design method (artifact), the functionality and architecture of which are grounded in theoretical insights into work systems adaptation.

Conceptualization: Methodical support for adaptation design within HCAI work systems

According to international standards, a work system is a “system comprising one or more workers and work equipment acting together to perform the system function, in the workspace, in the work environment, under the conditions imposed by the work tasks” [23]. Various conceptual models of work systems exist across disciplines [24] such as information systems [25] or macroergonomics [26], each emphasizing different system elements and interdependencies. Because of its focus on human-centered system design, we adopt Carayon’s work system model [27, 28] and its successors [29–31] as our basis for exploring adaptation design opportunities in HCAI systems.

![Figure 1: Extended work system model for HCAI-focused adaptations based on [30] and [31].](https://industry-science.com/wp-content/uploads/2026/02/Buelow_I4S-26-1_Figure-1-1024x704.jpeg)

As shown in Figure 1, the work system itself consists of people, organizational conditions, a physical environment, AI technology, other tools and technologies, and tasks [31]. These structural elements interact with each other within the socio-organizational context and with the general external environment [30] over time [31], resulting in desirable or undesirable, proximal or distal outcomes for or experienced by different people, groups, and organizations [30]. In line with its origin in human factors studies, outcomes span system performance (e.g., efficiency, effectiveness, productivity) and human experiences (e.g., well-being and safety) [30]. For the intended use in the HCAI context, we added specific HCAI criteria from the literature as potential outcomes to be addressed by system design [10, 16].

Drawing on the cybernetic concept of feedback loops, we conceptualize adaptation as a feedback-driven process that responds to deviations from goals or environmental changes. Adaptations may refer to system elements, workflows, or socio-organizational context and may vary in intentionality (e.g., intended or unintended), duration (e.g., short- or long-lasting), modus (e.g., intermittent or regular), and impact (e.g., beneficial or detrimental) [29, 30].

In our approach, we focus on planned adaptations, distinguishing them from spontaneous workarounds—informal, situational responses to immediate challenges [13, 32]. While recognizing the dynamic nature of work systems, identifying and designing specific planned adaptations remains difficult at the work system level. To concretize the design space, we examine approaches from lower system levels, particularly from human-computer interaction (HCI).

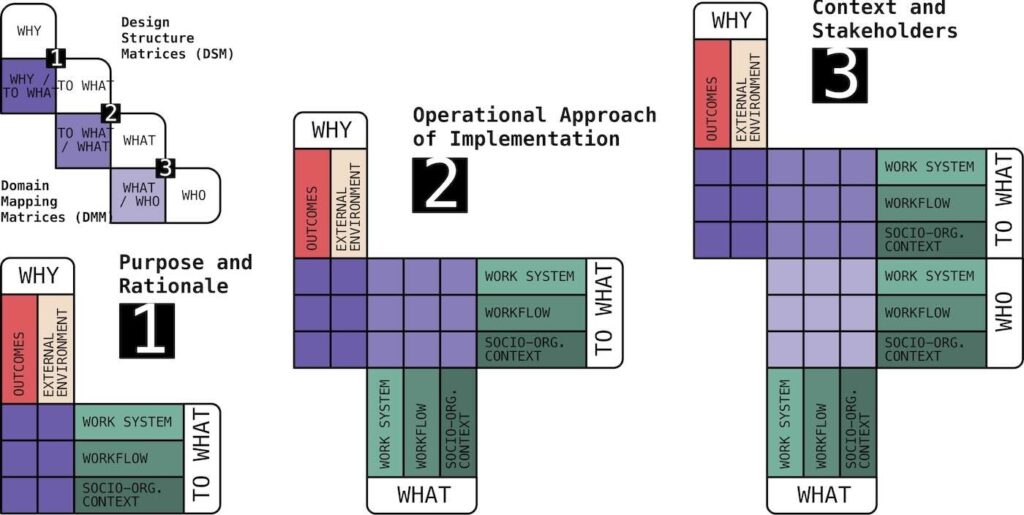

In HCI, adaptation, especially of user interfaces (UI), has been studied extensively. Abrahão et al. (2021) developed a framework for adaptation dimensions, organized using the Quintilian questions: what, why, how, to what, who, when, and where [13]. Given the intuitive and universal nature of Quintilian’s questions, it seems likely that this approach can be adopted for higher system levels, including for HCAI work systems. To increase accessibility, especially for practitioners, we group the dimensions into the three categories of purpose and rationale (why, to what), operational implementation approach (what, where, how), and context and stakeholders (when, who).

Purpose and rationale

Purpose and rationale refer to the adaptation’s objective (why) and the reference element, including the specific state of this element that renders the adaptation necessary (to what). Specifically, in the HCAI context, the adaptation rationale describes what needs to be fulfilled or improved and how it aligns with overall system goals.

Additionally, it includes adaptation goals such as trust, human agency, job loss prevention, and knowledge utilization [13, 33–35], going beyond traditional usability concerns. While the question of why an adaptation should take place is linked to the different outcomes and the external environment of the extended work system model, the question of reference element and state can be answered by any element of the work system, the workflow, and the socio-organizational context (Fig. 1).

Operational implementation approach

The operational implementation approach describes what is being adapted (what), where the adaptation occurs (where) and how it is processed (how). Tailored to the HCAI context, this can be specified as adaptation target, adaptation granularity, and adaptation automation degree. The adaptation target includes elements of technology beyond the UI, like the AI model, communication style, task allocation, or workflow sequences [14, 33–38]. In the extended work system model (Fig. 1), these are reflected in the work system’s core elements and their interaction over time, i.e., the workflow.

The property of adaptation granularity must be translated to this specific context, since granularity in the context of UI-design can refer to different software-specific elements like widgets or containers. The adaptation automation degree—varying on a 9-level continuum from fully manual to fully automated—is highly relevant for HCAI as it relates to control, transparency, and user trust [33, 39]. Other UI-specific properties from the original HCI framework (e.g., UI modality, or adaptation method) are not included here but may be considered in detailed subsystem analyses.

Context and stakeholders

Context and stakeholders define the instances that are involved in the adaptation process (who), further specified in the HCAI context by who is responsible for initiating, deciding on, and implementing adaptations. This property reflects the distributed nature of stakeholders and decision-making in socio-technical systems [33, 38, 40]. Stakeholders can be people who are involved at the core of the work system, people from the socio-organizational context, and technical systems or algorithms (Fig. 1).

They depend on the temporal context of the adaptation (when), which is in turn impacted by adaptation type (static or dynamic) and adaptation time (the specific moment or period during which the adaptation is triggered or applied). More specifically, this aspect depends on the predefined degree of freedom in adaptation (see also automation degree), which varies depending on whether characteristics like hardwired, customizable, and configurable (static attributes) or like tunable and mutable (more dynamic attributes) are at play [13, 34, 36–38, 40].

Mapping multidimensional interdependencies

To support the design process and model the relevant systemic circumstances of these adaptation approaches, we employ Design-Structure Matrices (DSM) and Domain-Mapping Matrices (DMM) [41]. These tools are well-established for modeling complex systems and allow structured representation of multidimensional interdependencies among heterogeneous elements [42]. DSM and DMM support complexity reduction by identifying clusters, revealing feedback loops, and supporting process optimization. They also allow the integration of additional attributes (e.g., costs, risks), facilitating early detection of cascading effects, and better resource allocation [41].
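As a rough illustration of how a DSM can reveal feedback loops, the following Python sketch stores a small DSM as a mapping from each element to the elements it influences and reports every element that lies on a directed cycle. The element names follow the work system model, but the dependencies between them and the function name `elements_in_feedback_loops` are assumptions chosen purely for illustration, not results from the study.

```python
from typing import Dict, Set

# Hypothetical DSM: each key influences the elements in its value set.
# These dependencies are illustrative assumptions, not study findings.
DSM: Dict[str, Set[str]] = {
    "people": {"tasks"},
    "tasks": {"AI technology"},
    "AI technology": {"people"},
    "other tools and technologies": set(),
}


def elements_in_feedback_loops(dsm: Dict[str, Set[str]]) -> Set[str]:
    """Return all elements that can reach themselves via directed dependencies."""

    def reachable(start: str) -> Set[str]:
        # Iterative depth-first search over the dependency graph.
        seen: Set[str] = set()
        stack = list(dsm[start])
        while stack:
            node = stack.pop()
            if node not in seen:
                seen.add(node)
                stack.extend(dsm[node])
        return seen

    return {element for element in dsm if element in reachable(element)}


loop_elements = elements_in_feedback_loops(DSM)
print(sorted(loop_elements))
```

In this toy matrix, the three elements that influence each other in a ring are flagged, while the isolated element is not. A real DSM would typically also carry weights or attributes such as costs and risks.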

We synthesize four DSMs related to why, to what, what, and who based on the extended work system model (Fig. 1). These are then cross-referenced using the three resulting DMMs (why/to what, what/to what, and what/who), as shown in Figure 2, also demonstrating the three categories of purpose and rationale, operational implementation approach, and context and stakeholders. In line with the method’s structure-oriented approach, the remaining dimensions of how, when, and where are reflected in the analysis-guiding questions which evolve at the intersections within each DMM. Given that up to three questions can be raised for each intersection, it becomes clear that the prior modeling of the HCAI work system should be sufficiently detailed but not excessive.

Our design method therefore consists of the following steps: First, the work system is described using the extended model from Figure 1. Then, based on this modeling and its elements, the four DSMs and the three DMMs are set up. For each intersection in the DMMs, three questions are formulated regarding how, when, and where. These questions are then evaluated in terms of their usefulness and relevance to the specific design task and reduced to a final set, which guides the discussion and analysis of adaptation options in the given work system.
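The question-generation and reduction steps can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the function names `dmm_questions` and `reduce_questions`, the question template, and the placeholder element names are hypothetical, and the relevance check stands in for the team's own judgment rather than any automated criterion.

```python
from itertools import product
from typing import Callable, List, Sequence, Tuple

QUESTION_WORDS = ("how", "when", "where")

# A question stub: (question word, row element, column element).
Question = Tuple[str, str, str]


def dmm_questions(rows: Sequence[str], cols: Sequence[str]) -> List[Question]:
    """Create one question stub per question word for every DMM intersection."""
    return [
        (word, row, col)
        for row, col in product(rows, cols)
        for word in QUESTION_WORDS
    ]


def reduce_questions(
    questions: Sequence[Question],
    is_relevant: Callable[[Question], bool],
) -> List[Question]:
    """Keep only the questions the team judges useful for the design task."""
    return [q for q in questions if is_relevant(q)]


# Placeholder elements for a single DMM (hypothetical names).
stubs = dmm_questions(rows=["outcome A", "outcome B"], cols=["element X"])

# Example judgment: the team decides that "where" adds nothing here.
final_set = reduce_questions(stubs, lambda q: q[0] != "where")

for word, row, col in final_set:
    print(f"{word.capitalize()} does '{row}' relate to '{col}'?")
```

Each stub would still need to be phrased as a natural-language question by the team; the sketch only shows how the number of candidate questions grows with the size of each DMM.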

Demonstration and evaluation: Practical application of the design method

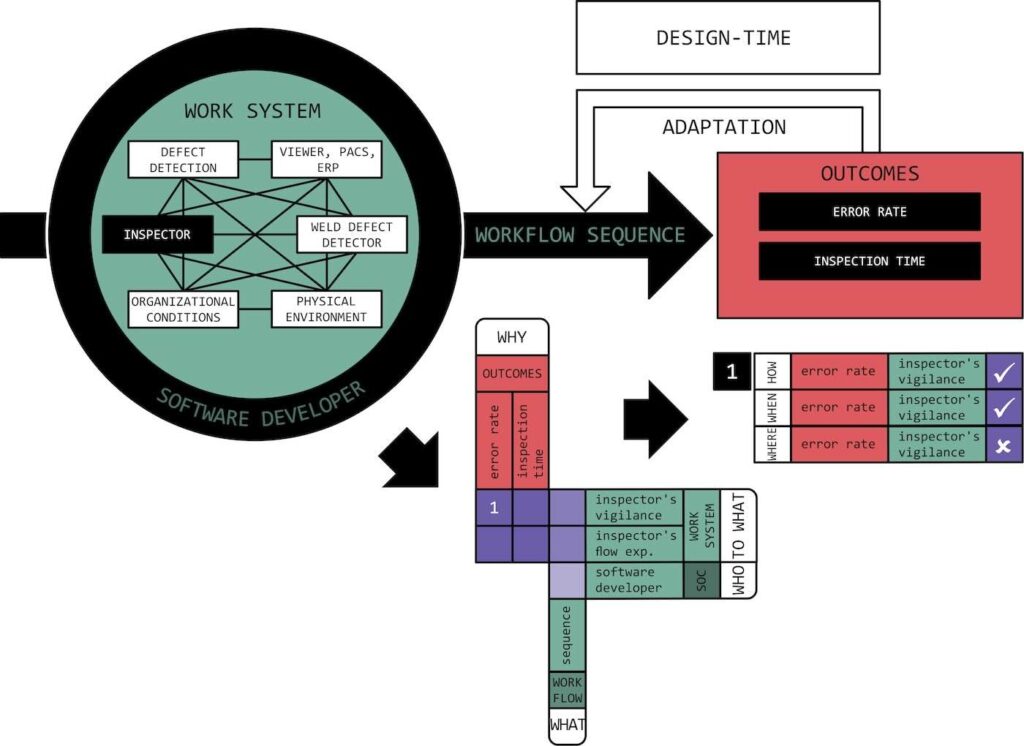

To demonstrate the developed method, we apply it to the context of workflow adaptation in the aforementioned task of weld inspection [21]. Typically, radiographic NDT of welds involves operators acquiring radiographic images, inspectors evaluating these images and deciding on acceptance, and supervisors ensuring quality and compliance (people and tasks). The inspection process relies on a complex technical infrastructure, including a picture archiving and communication system (PACS), an enterprise resource planning (ERP) system, and a specialized viewer software including all tools for manual or automatic visual inspection (AI technology, other tools and technologies) [22].

Outside of the work system, there are other people who affect the work context. In the workflow adaptation example, these could be ergonomists and software developers. Like roles, hierarchies, rules, procedures, processes, and organizational culture, these people are part of the socio-organizational context.

To keep the demonstration of our method as simple as possible, we focus on the most important elements for workflow adaptation: the outcomes error rate and inspection time as the adaptation’s objectives, their primary reference element, the inspector, with the states of vigilance and flow, and the workflow sequence as the element to be adapted. Adaptation is static and hence implemented by a software developer at design-time.

Now that we have modeled the work system, we can set up the matrices and finally derive up to 21 questions to potentially support the exploration of the design space (Fig. 3). The first DMM (2×2) results in a total of twelve questions. While the eight questions about how and when contribute to a better understanding of the adaptation’s purpose and rationale (e.g., “How does the error rate correlate with the inspector’s vigilance?”), the questions about where do not. This is also true for the questions that result from the second DMM (1×2).

While a total of six questions can be formulated, only the four questions about how and when describe the operational implementation approach (e.g., “When does the inspector’s flow experience necessitate workflow sequence adaptation?”). The last DMM (1×1) results in a total of three questions. In this case, all questions make sense (e.g., “How does the software developer change the workflow sequence?”). The question “Where does the developer change the workflow sequence?” could also be understood as part of the operational implementation approach.
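The question counts in this demonstration follow directly from the DMM sizes and can be checked with a short Python sketch. The dictionary names are hypothetical, and the set of question words treated as useful per DMM is our reading of the description above rather than a formal rule of the method.

```python
# DMM sizes as stated in the demonstration: (rows, columns).
DMM_SHAPES = {
    "why / to what": (2, 2),   # error rate, inspection time x vigilance, flow
    "what / to what": (1, 2),  # workflow sequence x vigilance, flow
    "what / who": (1, 1),      # workflow sequence x software developer
}

ALL_WORDS = {"how", "when", "where"}

# Question words judged useful per DMM (assumption based on the text above).
USEFUL_WORDS = {
    "why / to what": {"how", "when"},
    "what / to what": {"how", "when"},
    "what / who": {"how", "when", "where"},
}

# Every intersection yields one question per question word.
possible = {
    name: rows * cols * len(ALL_WORDS)
    for name, (rows, cols) in DMM_SHAPES.items()
}
retained = {
    name: rows * cols * len(USEFUL_WORDS[name])
    for name, (rows, cols) in DMM_SHAPES.items()
}

print(possible, sum(possible.values()))
print(retained, sum(retained.values()))
```

Under this reading, the three DMMs yield 12, 6, and 3 candidate questions (21 in total), of which 8, 4, and 3 remain after the questions about where are set aside for the first two DMMs.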

Discussion and outlook

The aim of this study was to develop a method that supports practitioners in designing HCAI work systems. Based on a real-world NDT use case, we derived design requirements, defined different adaptation properties across categories and created a prototype using matrix-based modeling. This approach proved suitable for structuring complex, transdisciplinary design challenges and identifying critical interfaces, system interactions, and potential goal conflicts in the design phase. By systematically mapping dependencies and stakeholder perspectives, the design method has potential to foster communication about the socio-technical optimization of human-centered AI integration.

Nevertheless, given the multiple occurrences of questions that do not contribute to the analysis, more practical applications are needed to evaluate the method’s functioning. Future studies should examine its usability as well as its effectiveness in fostering a shared understanding across disciplines and professions. Additionally, the potential of DSM and DMM to reveal critical interfaces and goal conflicts must be systematically explored. Beyond practical relevance, the approach may serve as a framework for organizing interdisciplinary knowledge and identifying research gaps in socio-technical systems design.

This research and development project is funded by the German Federal Ministry of Research, Technology and Space (BMFTR) within the “The Future of Value Creation – Research on Production, Services and Work” program and the “SME-innovative: The Future of Value Creation” funding measure (funding code: 02L19C200) and managed by the Project Management Agency Karlsruhe (PTKA). The authors are responsible for the content of this publication.

Bibliography

[1] Nabizadeh Rafsanjani, H.; Nabizadeh, A. H.: Towards Human-Centered Artificial Intelligence (AI) in Architecture, Engineering, and Construction (AEC) Industry. In: Computers in Human Behavior Reports 11 (2023), 100319. DOI: 10.1016/j.chbr.2023.100319.

[2] Capel, T.; Brereton, M.: What is Human-Centered about Human-Centered AI? A Map of the Research Landscape. In: Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems (2023), pp. 1–23. DOI: 10.1145/3544548.3580959.

[3] Chen, Y.; Clayton, E. W.; Novak, L. L.; Anders, S.; Malin, B.: Human-Centered Design to Address Biases in Artificial Intelligence. In: Journal of Medical Internet Research 25 (2023), 43251. DOI: 10.2196/43251.

[4] Constantinides, M.; Quercia, D.: AI, Jobs, and the Automation Trap: Where is HCAI? In: CHIWORK’25: Proceedings of the 4th Annual Symposium on Human-Computer Interaction for Work (2025), pp. 1–8. DOI: 10.2196/43251.

[5] Holzinger, A.; Fister, I.; Kaul, H.-P.; Asseng, S.: Human-Centered AI in Smart Farming: Toward Agriculture 5.0. In: IEEE Access 12 (2024), pp. 62199–62214. DOI: 10.1109/ACCESS.2024.3395532.

[6] Pisoni, G.; Díaz-Rodriguez, N.: Responsible and Human Centric AI-based Insurance Advisors. In: Information Processing & Management 60 (2023) 3, 103273. DOI: 10.1016/j.ipm.2023.103273.

[7] Ozmen Garibay, O.; Winslow, B.; Andolina, S.; Antona, M.; Bodenschatz, A. et al.: Six Human-Centered Artificial Intelligence Grand Challenges. In: International Journal of Human-Computer Interaction 39 (2023) 3, pp. 391–437. DOI: 10.1080/10447318.2022.2153320.

[8] Shneiderman, B.: Bridging the Gap Between Ethics and Practice: Guidelines for Reliable, Safe, and Trustworthy Human-Centered AI Systems. In: ACM Transactions on Interactive Intelligent Systems 10 (2020) 4, pp. 1–31. DOI: 10.1145/3419764.

[9] Shneiderman, B.: Human-Centered Artificial Intelligence: Reliable, Safe & Trustworthy. In: International Journal of Human-Computer Interaction 36 (2020) 6, pp. 495–504. DOI: 10.1080/10447318.2020.1741118.

[10] Schmager, S.; Pappas, I. O.; Vassilakopoulou, P.: Understanding Human-Centred AI: A Review of its Defining Elements and a Research Agenda. In: Behaviour & Information Technology 44 (2025) 15, pp. 3771–3810. DOI: 10.1080/0144929X.2024.2448719.

[11] Onnasch, L.; Wickens, C. D.; Li, H.; Manzey, D.: Human Performance Consequences of Stages and Levels of Automation: An Integrated Meta-Analysis. In: Human Factors 56 (2014) 3, pp. 476–488. DOI: 10.1177/0018720813501549.

[12] Rieth, M.; Onnasch, L.; Hagemann, V.: Adaptable Automation for a More Human-Centered Work Design? Effects on Human Perception and Behavior. In: International Journal of Human-Computer Studies 186 (2024) C, 103246. DOI: 10.1016/j.ijhcs.2024.103246.

[13] Abrahão, S.; Insfran, E.; Sluÿters, A.; Vanderdonckt, J.: Model-based intelligent user interface adaptation: challenges and future directions. In: Software and Systems Modeling 20 (2021) 5, pp. 1335–1349. DOI: 10.1007/s10270-021-00909-7.

[14] Alipour, M.; Moghaddam, M. T.; Vaidhyanathan, K.; Kristensen, T.; Krogager Asmussen, N.: Emotional Internet of Behaviors: A QoE-QoS Adjustment Mechanism. In: Lecture Notes in Computer Science (2023), pp. 3–22. DOI: 10.1007/978-3-031-35891-3_1.

[15] Madni, A. M.; Jackson, S.: Towards a Conceptual Framework for Resilience Engineering. In: IEEE Systems Journal 3 (2009) 2, pp. 181–191. DOI: 10.1109/JSYST.2009.2017397.

[16] Wilkens, U.; Lupp, D.; Langholf, V.: Configurations of Human-Centered AI at Work: Seven Actor-Structure Engagements in Organizations. In: Frontiers in Artificial Intelligence 6 (2023), 1272159. DOI: 10.1145/3729176.3729191.

[17] Torkamaan, H.; Tahaei, M.; Buijsman, S.; Xiao, Z.; Wilkinson, D.; Knijnenburg, B. P.: The Role of Human-Centered AI in User Modeling, Adaptation, and Personalization —Models, Frameworks, and Paradigms. In: A Human-Centered Perspective of Intelligent Personalized Environments and Systems (2024), pp. 43–84. DOI: 10.1007/978-3-031-55109-3_2.

[18] Peffers, K.; Tuunanen, T.; Rothenberger, M. A.; Chatterjee, S.: A Design Science Research Methodology for Information Systems Research. In: Journal of Management Information Systems 24 (2007) 3, pp. 45–77. DOI: 10.2753/MIS0742-1222240302.

[19] Schwarz, J.; Will, L.; Wellmer, J.; Mosig, A.: A Patch-based Student-Teacher Pyramid Matching Approach to Anomaly Detection in 3D Magnetic Resonance Imaging. In: Proceedings of Machine Learning Research (2024) 250, pp. 1357–1370.

[20] Vasilev, M.; MacLeod, C.; Galbraith, W.; Javadi, Y.; Foster, E. et al.: Non-Contact In-Process Ultrasonic Screening of Thin Fusion Welded Joints. In: Journal of Manufacturing Processes 64 (2021), pp. 445–454. DOI: 10.1016/j.jmapro.2021.01.033.

[21] Berretta, S.; Tausch, A.; Bülow, F.; Kuhlenkötter, B.; Topp, M. et al.: Human or AI First? A Holistic Perspective on the Sequential Order of Joint Human-AI Inspection Workflows. In: Applied Ergonomics 132 (2026), 104669. DOI: 10.1016/j.apergo.2025.104669.

[22] Topp, M.; Nestler, D.; Els, C.: Critical Image Detection (CiD) and Single Image Detail Analysis (SiDA) – A practical approach to AI in NDT. In: e-Journal of Nondestructive Testing 29 (2024) 8. DOI: 10.58286/30042.

[23] ISO 6385:2016 – Ergonomics Principles in the Design of Work Systems. Standard 6385 (2016). DOI: 10.31030/2429191.

[24] Kadir, B. A.; Broberg, O.: Human-Centered Design of Work Systems in the Transition to Industry 4.0. In: Applied Ergonomics 92 (2021), 103334. DOI: 10.1016/j.apergo.2020.103334.

[25] Alter, S.: Work System Theory: Overview of Core Concepts, Extensions, and Challenges for the Future. In: Journal of the Association for Information Systems 14 (2013) 2, pp. 72–121. DOI: 10.17705/1jais.00323.

[26] Hendrick, H. W.; Kleiner, B. M.: Macroergonomics: An Introduction to Work System Design. Human Factors and Ergonomics Society, Santa Monica, CA, 2001.

[27] Smith, M. J.; Sainfort, P.: A Balance Theory of Job Design for Stress Reduction. In: International Journal of Industrial Ergonomics 4 (1989) 1, pp. 67–79. DOI: 10.1016/0169-8141(89)90051-6.

[28] Carayon, P.: The Balance Theory and the Work System Model … Twenty Years Later. In: International Journal of Human-Computer Interaction 25 (2009) 5, pp. 313–327. DOI: 10.1080/10447310902864928.

[29] Holden, R. J.; Carayon, P.; Gurses, A. P.; Hoonaker, P.; Hundt, A. S. et al.: SEIPS 2.0: A Human Factors Framework for Studying and Improving the Work of Healthcare Professionals and Patients. In: Ergonomics 56 (2013) 11, pp. 1669–1686. DOI: 10.1080/00140139.2013.838643.

[30] Carayon, P.; Wooldridge, A.; Hoonakker, P.; Hundt, A. S.; Kelly, M. M.: SEIPS 3.0: Human-Centered Design of the Patient Journey for Patient Safety. In: Applied Ergonomics 84 (2020), 103033. DOI: 10.1016/j.apergo.2019.103033.

[31] Salwei, M. E.; Carayon, P.: A Sociotechnical Systems Framework for the Application of Artificial Intelligence in Health Care Delivery. In: Journal of Cognitive Engineering and Decision Making 16 (2022) 4, pp. 194–206. DOI: 10.1177/15553434221097357.

[32] Kirk, M. A.; Moore, J. E.; Wiltsey Stirman, S.; Birken, S. A.: Towards a Comprehensive Model for Understanding Adaptations’ Impact: The Model for Adaptation Design and Impact (MADI). In: Implementation Science 15 (2020) 1. DOI: 10.1186/s13012-020-01021-y.

[33] Salikutluk, V.; Frodl, E.; Herbert, F.; Balfanz, D.; Koert, D.: Situational Adaptive Autonomy in Human-AI Cooperation. AutomationXP23 (2023). URL: https://ceur-ws.org/Vol-3394/short5.pdf, accessed 03.06.2025.

[34] Joshi, R.; Bengler, K.: Crafting Human-AI Interaction: A Rhetorical Approach to Adaptive Interaction in Conversational Agents. In: Proceedings of the 12th International Conference on Human-Agent Interaction (2024), pp. 314–322. DOI: 10.1145/3687272.3688297.

[35] De Visser, E. J.; Momen, A.; Walliser, J. C.; Kohn, S. C.; Shaw, T. H.; Tossel, C. C.: Mutually Adaptive Trust Calibration in Human-AI Teams. International Conference on Hybrid Human-Artificial Intelligence (HHAI) (2023). URL: https://ceur-ws.org/Vol-3456/short4-8.pdf, accessed 03.06.2025.

[36] Krause, F.; Paulheim, H.; Kiesling, E.; Kurniawan, K.; Leva, M. C. et al.: Managing Human-AI Collaborations within Industry 5.0 Scenarios via Knowledge Graphs: Key Challenges and Lessons Learned. In: Frontiers in Artificial Intelligence 7 (2024). DOI: 10.3389/frai.2024.1247712.

[37] Graefe, J.; Engelhardt, D.; Rittger, L.; Bengler, L.: How Well Does the Algorithm Know Me? A Structured Application of the Levels of Adaptive Sensitive Responses (LASR) on Technical Products. In: Design, User Experience, and Usability: Design Thinking and Practice in Contemporary and Emerging Technologies (2022), pp. 311–336. DOI: 10.1007/978-3-031-05906-3_24.

[38] Lindgren, H.: Emerging Roles and Relationships Among Humans and Interactive AI Systems. In: International Journal of Human–Computer Interaction [ahead-of-print] (2024), pp. 1–23. DOI: 10.1080/10447318.2024.2435693.

[39] Jiang, J.; Karran, A. J.; Coursaris, C. K.; Léger, P.-M.; Beringer, J.: A Situation Awareness Perspective on Human-AI Interaction: Tensions and Opportunities. In: International Journal of Human–Computer Interaction 39 (2023) 9, pp. 1789–1806. DOI: 10.1080/10447318.2022.2093863.

[40] Holter, S.; El‐Assady, M.: Deconstructing Human‐AI Collaboration: Agency, Interaction, and Adaptation. In: Computer Graphics Forum 43 (2024) 3, e15107. DOI: 10.1111/cgf.15107.

[41] Browning, T. R.: Design Structure Matrix Extensions and Innovations: A Survey and New Opportunities. In: IEEE Transactions on Engineering Management 63 (2016) 1, pp. 27–52. DOI: 10.1109/TEM.2015.2491283.

[42] Jung, S.; Sinha, K.; Suh, E. S.: Domain Mapping Matrix-Based Metric for Measuring System Design Complexity. In: IEEE Transactions on Engineering Management 69 (2022) 5, pp. 2187–2195. DOI: 10.1109/TEM.2020.3004561.
