Operationalizing Ethical AI with tachAId

Validating an interactive advisory tool in two manufacturing use cases

Journal: Industry 4.0 Science

Issue: Volume 42, 2026, Edition 1, Pages 50-59

Open Access: https://doi.org/10.30844/I4SE.26.1.48


The overall efficiency and success of AI solutions depend fundamentally on the quality and effectiveness of the resulting human-AI interaction [1]. Rapid technological adoption often outpaces the careful consideration of ethical challenges and human-centered design principles that are critical for successful implementation and agility. Key stakeholders, including strategic decision-makers, AI engineers, and user interface designers, often lack sufficient awareness of the specific pitfalls at the human-AI interface. These multifaceted challenges range from algorithmic opacity and the risk of systemic bias to data privacy concerns, premature automation, eroded human agency, and unclear responsibilities [2–5].

For industrial settings, the research focus often leans heavily towards technical feasibility and performance metrics [6, 7], relegating human and ethical factors to an afterthought. Although numerous frameworks for ethical AI have been proposed, most articulate high-level principles—such as transparency, fairness, non-maleficence, responsibility, and privacy—without offering concrete guidance for implementation [8, 9]. This leaves a persistent operationalization gap between abstract ideals and everyday AI development.

Efforts to address this gap have led to a growing number of methods and tools, yet these typically target only isolated stages of the development process or specific modalities for addressing ethical AI issues, like summaries, notions, code, and education [9]. Consequently, the landscape remains fragmented, with few approaches considering how different forms of guidance—ranging from conceptual reflection to technical implementation—can interact to support practitioners throughout the entire AI lifecycle.

In response to this fragmentation, human-centered AI (HCAI) has gained attention as an applied perspective aiming to translate ethical principles into sociotechnical design and development practices [6, 10]. While ethical AI focuses on normative principles and governance, HCAI stresses participation, usability, and the augmentation of human capabilities. Despite their overlap, the two differ in focus: ethical AI defines what should be achieved, HCAI explores how it can be realized. Yet, both continue to struggle with operationalizing these principles in practice.

tachAId (technical assistance concerning human-centered AI development) was developed to address this gap [11]. It is an interactive, web-based self-service advisory tool designed to embed HCAI considerations directly into the technical implementation process. This paper reports on the design principles and qualitative validation study of the tachAId artifact. Through two industrial case studies, it demonstrates how such a tool can begin to bridge the operationalization gap in applied AI ethics, while illuminating the related challenges.

Operationalizing human-centered AI with tachAId

tachAId originated with the aim of raising awareness about HCAI among diverse stakeholders, guiding their reflection on HCAI principles within their specific project context, and providing actionable guidance, regardless of their initial level of AI or ethics expertise. This section outlines both the theoretical foundations that inform HCAI in practice and the design of tachAId, which operationalizes these principles through interactive, stakeholder-oriented guidance.

Theoretical foundations: Grounding HCAI in practice

Developing and deploying AI necessitates a shift towards HCAI, an approach that emphasizes aligning AI systems with human values, needs, and capabilities to enhance, augment, and empower individuals rather than automate tasks [3, 6, 10]. Achieving HCAI in practice requires navigating key ethical criteria that manifest across the AI lifecycle [8]. Building on frameworks that analyze HCAI configurations in organizational contexts [12], tachAId operationalizes criteria particularly pertinent to applied ethics in industrial settings.

Human agency and domain knowledge integration

A central tenet of HCAI is designing AI systems as tools that support user control, enhance skills, and foster a sense of ownership, preventing deskilling or over-reliance [3, 4, 12]. This involves creating human-in-the-loop systems where humans remain in control and AI acts as a collaborative partner, augmenting capabilities in complex situations. By reinforcing human agency through meaningful involvement, such systems uphold responsibility, trust, fairness, and non-maleficence—ensuring decisions and thereby accountability remain transparent and preventing automation bias or unintended harm [8].

Effective AI solutions also require actively integrating domain user expertise not only in deployment, but throughout the development lifecycle—from data collection and feature engineering to model validation—ensuring tools are technically sound, practically relevant, and usable. Embedding expertise across stages further promotes beneficence and sustainability by grounding system behavior in contextual understanding and long-term societal benefit rather than short-term performance [8].

Clarification of responsibilities and processes

AI integration introduces ambiguity into existing workflows. A human-centered approach requires defining clear roles, accountabilities, and processes for data management, model training and monitoring, and handling AI failures or unexpected outcomes [12, 13]. This clarity is essential for establishing trust and ensuring safe operation.

Design and implementation of tachAId

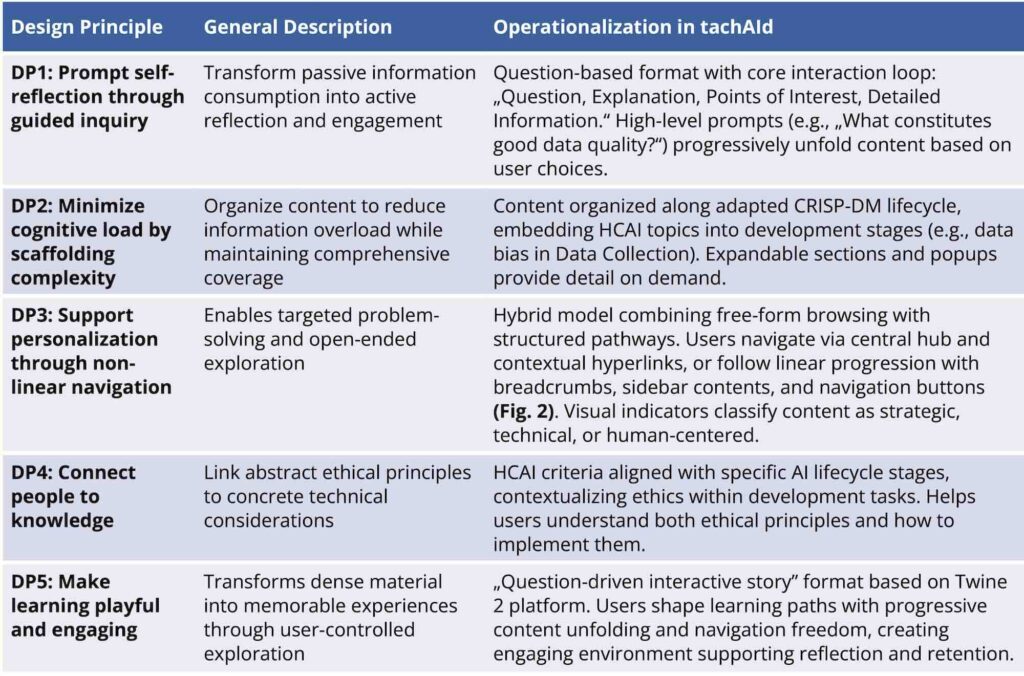

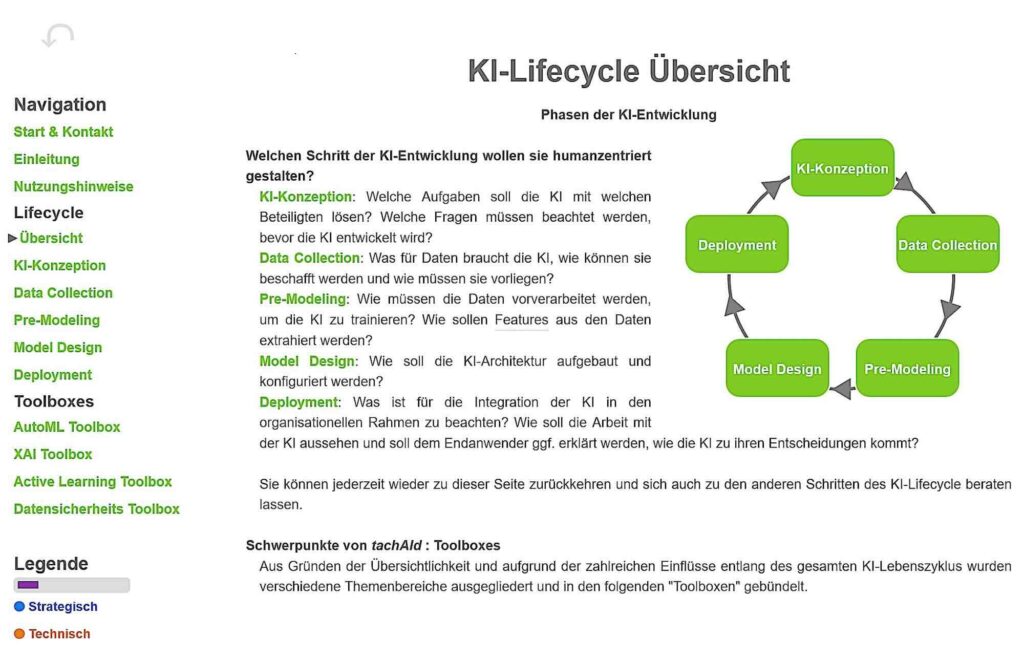

To translate HCAI criteria into a practical and engaging tool, we developed tachAId for the self-guided exploration of HCAI topics, integrating them into the technical steps of AI implementation. It targets stakeholders with diverse roles and expertise—including decision-makers, developers, and researchers—and moves beyond static formats like checklists or white papers by facilitating reflection and learning rather than merely presenting information. Drawing on Hohman et al.’s interactive article affordances [14], we derived five design principles (DPs) that guided tachAId’s creation and structure. See Figure 1 for our DPs and Figure 2 for a view of tachAId.

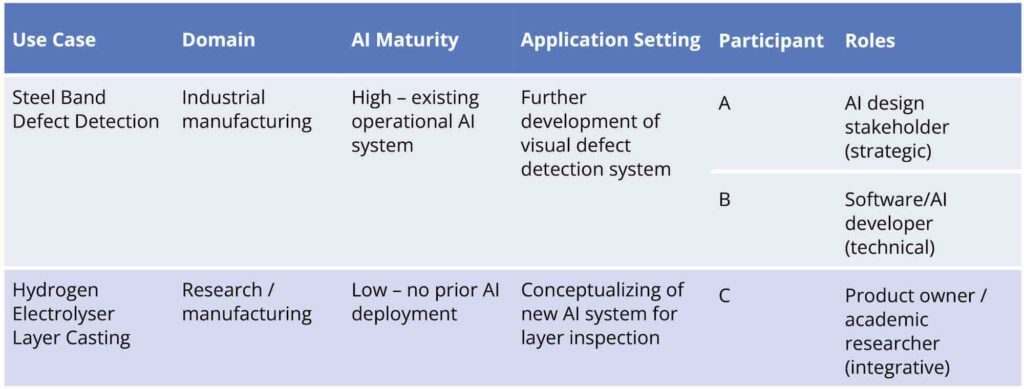

Methodology: A formative evaluation in two industrial cases

To evaluate the utility, usability, and effectiveness of tachAId, a qualitative validation study was conducted. The primary goal was to generate actionable feedback for improvement. It was a formative, naturalistic evaluation: formative because findings directly informed the tool’s next design iteration, and naturalistic because participants applied the tool to their real-world projects, increasing ecological validity. For this purpose, tachAId was evaluated in two distinct industrial manufacturing contexts, chosen to represent different points in the AI adoption journey and to test the tool’s relevance across varying organizational AI maturity levels and application settings (Fig. 3). In total, three participants with different stakeholder roles were recruited.

Two-phase data collection was employed to capture both in-situ interactions and reflective feedback:

- Self-guided think-aloud sessions: Each participant used tachAId independently for 40 to 60 minutes, relating its content to their specific AI project. They continuously verbalized thoughts, decisions, and points of confusion while interacting with the tool. This method provides direct insight into immediate cognitive processes and usability issues [15, 16]. Sessions were observed with minimal intervention; non-verbal cues were noted.

- Semi-structured interviews: Immediately following the think-aloud session, we conducted a semi-structured interview. The interview guide covered overall impressions, navigation clarity, perceived information utility, the tool’s effectiveness in highlighting HCAI aspects, and improvement suggestions.

Key observations, quotes, and feedback were synthesized to identify recurring themes related to usability, user understanding, and HCAI awareness.

Success in fostering HCAI awareness and evolving perspectives

tachAId proved effective in its primary goal: directing stakeholder attention toward critical HCAI considerations that might otherwise be overlooked in technically focused development. Across different roles, engagement with the tool prompted reflection and, in some cases, observable shifts in participants’ perspectives on human-AI interaction. The developer (Participant B) confirmed that the interaction “helped to spark interest and engage with topics that one would not have looked at otherwise”, while the product owner/researcher (Participant C) found the sections on Key Performance Indicators (KPIs) during Conceptualization and the guidance on Feature Engineering to be highly valuable for his project.

The most compelling evidence of the tool’s impact was the observed evolution in participant thinking. The strategic decision-maker (Participant A) initially dismissed considerations of human interaction during deployment, stating it was “not our problem, but our customers’ problem”. This perspective reflects a common organizational silo where downstream human factors are externalized. However, his subsequent engagement with the tool’s content on documentation and model complexities, including his positive reception of prompts to document various development decisions, suggests a broadening perspective prompted by the tool’s structure. This shift indicates that, by embedding HCAI topics within a familiar AI lifecycle, the tool can successfully reframe abstract ethical imperatives as concrete project responsibilities.

This success appears rooted in the tool’s knowledge architecture rather than its specific interaction mechanics. While navigational issues were prevalent (as detailed in the next section), the structured presentation of HCAI topics within relevant technical phases made them tangible and actionable. This was validated by Participant B, who, despite the challenges others faced, found the interactive format “significantly more interesting than if the content were prepared linearly”, suggesting the interactive discovery was key to his engagement. These findings indicate that tachAId’s core contribution—its structured mapping of HCAI principles to the AI lifecycle—effectively surfaced relevant considerations at pertinent stages, thereby beginning to bridge the awareness gap.

Navigational dissonance: The conflict between exploration and orientation

The non-linear navigation paradigm was effective for one user but a source of significant frustration for the others. The developer (Participant B) successfully used the non-linear path as intended and found the associative structure engaging. In stark contrast, the other participants struggled with disorientation. The strategic stakeholder (Participant A) explicitly stated, “You get lost. I’d have preferred something linear” and was observed consciously trying to follow a linear path to avoid getting lost in “rabbit holes”. Similarly, the participant with limited AI experience (Participant C) expected a “clear linear guide” and found the self-directed format confusing. This preference for linearity was a dominant theme.

This disorientation stemmed from several specific usability failures. Participants confused the tool’s internal navigation arrows with those of their web browser, overlooked orientation aids like the “visited pages” legend, and were generally unaware of the option to read linearly, mentioned in the user instructions. The lack of a persistent “you are here” indicator in the tool’s hierarchy led to users feeling lost within the tree structure, unable to easily return to previous sections or understand the relationship between different topics. These issues suggest that, while designed to support exploration, the interface lacked the robust scaffolding necessary to prevent cognitive overload and maintain user orientation, especially for those seeking a structured overview.

The actionability gap: The demand for concrete, practical guidance

A universal finding across all participants was the desire for more concrete, practical, and actionable content. The tool was often perceived as too “education-heavy” when users expected “more practical/applied content”. This “actionability gap” was not a rejection of the topics presented, but rather a demand for the next level of detail. The feedback consistently centered on moving from principles to practice. Participants wanted the tool to answer, “Which problem can I solve with what?”. This manifested in several specific requests:

- Specific tool recommendations: Participants expected the “AutoML Toolbox” to contain “5 tools” or a guide on “Which steps can I automate and with which tool?”.

- Illustrative use cases: There was a desire for a “short example or use case” for concepts like extending an existing AI as well as an example of “how AI is built and trained” throughout the tool.

- Comparative analysis: The developer, in particular, wanted to get “faster to concrete suggestions (pros/cons of methods)”.

- Actionable definitions: Users requested explicit explanations for technical methods concerning data privacy like k-anonymity, l-diversity, and t-closeness, which ensure that sensitive individual data cannot be uniquely identified. Moreover, clearer and more concise explanations for complex concepts like “catastrophic forgetting” (forgetting acquired knowledge upon learning new information) were also demanded.
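To illustrate the kind of concrete guidance participants asked for, two of the privacy notions named above can be sketched in a few lines. This is a minimal sketch and not part of tachAId: the function names, the column names, and the hand-made records are all hypothetical. It assumes the usual definitions, where a table is k-anonymous if every combination of quasi-identifier values is shared by at least k records, and l-diverse if every such group contains at least l distinct sensitive values.

```python
from collections import Counter, defaultdict


def k_anonymity(records, quasi_identifiers):
    """Size of the smallest group of records sharing the same quasi-identifier values."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values()) if groups else 0


def l_diversity(records, quasi_identifiers, sensitive):
    """Smallest number of distinct sensitive values found in any quasi-identifier group."""
    values = defaultdict(set)
    for r in records:
        values[tuple(r[q] for q in quasi_identifiers)].add(r[sensitive])
    return min(len(v) for v in values.values()) if values else 0


# Hypothetical, hand-made records (illustration only, not study data).
records = [
    {"age_band": "30-39", "plant": "A", "error_level": "low"},
    {"age_band": "30-39", "plant": "A", "error_level": "high"},
    {"age_band": "40-49", "plant": "B", "error_level": "low"},
    {"age_band": "40-49", "plant": "B", "error_level": "low"},
]

k_value = k_anonymity(records, ["age_band", "plant"])
l_value = l_diversity(records, ["age_band", "plant"], "error_level")
print(f"k-anonymity: {k_value}, l-diversity: {l_value}")
```

In this toy table every group contains two records, so it is 2-anonymous, but the second group holds only one distinct sensitive value. Anyone known to belong to that group can therefore be linked to that value, which is the weakness l-diversity is meant to expose.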

Awareness as a prerequisite for ethical practice

Our evaluation suggests that many HCAI challenges remain under-recognized by AI stakeholders, particularly in industrial settings where technical performance tends to eclipse human-centered concerns. In this context, tachAId successfully fulfilled its core objective: prompting reflection and raising awareness (DP1, DP4). Across roles and experience levels, participants reported new insights or shifts in perspective regarding the role of human factors in AI development.

This impact stemmed not from didactic instruction, but from embedding HCAI considerations within familiar project phases, thus reducing abstraction and fostering what can be termed situated ethics. The tool translates high-level principles (e.g., fairness, human agency) into concrete, context-relevant prompts across the AI lifecycle, helping users recognize ethics as a practical design concern rather than an external constraint. As seen with Participant A, who began to reconsider human interaction during deployment, tachAId can catalyze meaningful perspective shifts. This initial mapping of principles to practice is a crucial first step in bridging the operationalization gap and equipping teams with a shared technical understanding and ethical vocabulary for deeper, collaborative engagements.

The tension between exploration and orientation

However, our findings also highlight a central tension in the tool’s design: the friction between non-linear exploration and the need for clear orientation. While some users appreciated the freedom to browse topics based on interest, others—especially those new to AI or HCAI—experienced significant disorientation. These divergent responses suggest that the success of exploratory interaction models is highly contingent on users’ prior knowledge, digital literacy, and expectations for structured guidance.

This illustrates a common design tension: while non-linear exploration promotes engagement, it can increase cognitive load without sufficient scaffolding (DP2 vs. DP3). To address this, we enhanced the navigation menu to provide clearer, persistent linear navigation and improved onboarding by explicitly explaining the tool’s purpose and core interaction model. These changes help reduce disorientation while preserving exploratory flexibility—they combine guidance and user agency.

Toward more actionable support: From awareness to empowerment

Perhaps the most critical insight to emerge from our evaluation is the “actionability gap”. While tachAId succeeded as an entry point for making HCAI visible, it fell short in making it directly implementable. Users expressed a strong need for more practical examples, tool recommendations, and step-by-step guidance on how to address the issues identified. This feedback reflects a broader challenge in the field: awareness, though necessary, is not sufficient for behavioral change or organizational adoption. Developers, decision-makers, and researchers alike seek tangible resources to move from ethical intent to technical implementation.

This actionability gap also underscores a structural limitation inherent to advisory tools like tachAId: the demand for concrete examples, implementation templates, or tool recommendations is fundamentally context-specific. While participants valued the idea of receiving “next steps,” such resources are often tightly coupled to the specifics of a given AI use case—including organizational workflows, AI model and data types, regulatory constraints, and user demographics. This variability makes it exceedingly difficult for a generalized tool like tachAId to offer actionable content that is both detailed and broadly applicable.

Implications for the design of HCAI support tools

Our findings offer broader implications for the design of HCAI support tools beyond tachAId. First, they highlight the importance of embedding ethics into process—placing ethical reflection at the point of decision, rather than treating it as a separate or retrospective concern. Second, they demonstrate that interactivity matters: tools that actively engage users in reflective inquiry and decision-making appear more effective than static checklists or passive guidelines. Third, they suggest that multi-modal guidance—combining conceptual prompts, detailed explanations, visual cues, and concrete examples—is crucial for accommodating diverse expertise and supporting ongoing learning throughout the AI lifecycle.

These findings also resonate with Prem’s [9] typology of AI ethics tools, which spans conceptual frameworks, checklists, process models, software assistants, and educational resources. As Prem [9] notes, such tools typically address isolated aspects of ethical AI, leaving practitioners to navigate between abstract principles and specific technical measures on their own. tachAId cross-cuts this typology by integrating orientation, reflection, and guidance into a single interactive environment that accompanies users throughout the CRISP-DM (Cross-Industry Standard Process for Data Mining) phases. This hybrid design enables users to move fluidly between levels of abstraction (from principles to processes to concrete examples), supporting more coherent ethical reasoning in practice.

The qualitative validation remains exploratory due to a small but deliberately diverse sample covering strategic, technical, and integrative perspectives. The evaluation also focused on the initial prototype; subsequent refinements have yet to be validated. Future work will assess whether the improved version better supports navigation and learning across lifecycle phases.

In summary, the development and evaluation of tachAId provide a concrete example of how interactive tools can help embed human-centered and ethical reflection into AI development processes. While our work reveals clear areas for improvement, it also affirms the potential of tachAId’s interactive format to serve as a bridge between principle and practice, supporting a more ethically grounded, human-centered approach to AI development in industry.

This research and development project is funded by the German Federal Ministry of Research, Technology and Space (BMFTR) within the program “The Future of Value Creation – Research on Production, Services and Work” (02L19C200) and managed by the Project Management Agency Karlsruhe (PTKA). The authors are responsible for the content of this publication.

Bibliography

[1] Rath-Manakidis, P. et al.: Label Error Detection in Defect Classification using Area Under the Margin (AUM) Ranking on Tabular Data. In: Wirtschaftsinformatik 2025 Proceedings (2025). In press.

[2] Pant, A.; Hoda, R.; Spiegler, S. V.; Tantithamthavorn, C.; Turhan, B.: Ethics in the Age of AI: An Analysis of AI Practitioners’ Awareness and Challenges. In: ACM Trans. Softw. Eng. Methodol. 33 (2024) 3, pp. 1-35. DOI: https://doi.org/10.1145/3635715.

[3] Xu, W.; Dainoff, M. J.; Ge, L.; Gao, Z.: Transitioning to human interaction with AI systems: New challenges and opportunities for HCI professionals to enable human-centered AI. In: International Journal of Human–Computer Interaction 39 (2023) 3, pp. 494-518. DOI: https://doi.org/10.1080/10447318.2022.2041900.

[4] Bach, T. A.; Kristiansen, J. K.; Babic, A.; Jacovi, A.: Unpacking Human-AI Interaction in Safety-Critical Industries: A Systematic Literature Review. In: IEEE Access 12 (2024), pp. 106385-106414. DOI: https://doi.org/10.1109/ACCESS.2024.3437190.

[5] Gomez, C.; Cho, S. M.; Ke, S.; Huang, C.-M.; Unberath, M.: Human-AI collaboration is not very collaborative yet: a taxonomy of interaction patterns in AI-assisted decision making from a systematic review. In: Front. Comput. Sci. 6 (2025). DOI: https://doi.org/10.3389/fcomp.2024.1521066.

[6] Kluge, A.; Wilkens, U.; Nitsch, V.; Pfeifer, C.: Editorial: Human-centered AI at work: common ground in theories and methods. In: Front. Artif. Intell. 7 (2024). DOI: https://doi.org/10.3389/frai.2024.1411795.

[7] Berretta, S.; Tausch, A.; Ontrup, G.; Gilles, B.; Pfeifer, C.; Kluge, A.: Defining human-AI teaming the human-centered way: a scoping review and network analysis. In: Front. Artif. Intell. 6 (2023). DOI: https://doi.org/10.3389/frai.2023.1250725.

[8] Jobin, A.; Ienca, M.; Vayena, E.: The global landscape of AI ethics guidelines. In: Nat Mach Intell 1 (2019), pp. 389-399. DOI: https://doi.org/10.1038/s42256-019-0088-2.

[9] Prem, E.: From ethical AI frameworks to tools: a review of approaches. In: AI Ethics 3 (2023), pp. 699-716. DOI: https://doi.org/10.1007/s43681-023-00258-9.

[10] Wilkens, U.; Cost Reyes, C.; Treude, T.; Kluge, A.: Understandings and perspectives of human-centered AI – a transdisciplinary literature review. In: Frühjahrskongress Der Gesellschaft Für Arbeitswissenschaft, Bochum, 2021. URL: https://www.iaw.ruhr-uni-bochum.de/wp-content/uploads/Wilkens-et-al.-2021-1.pdf, accessed 02.07.2025.

[11] Bauroth, M.; Rath-Manakidis, P.; Langholf, V.; Wiskott, L.; Glasmachers, T.: tachAId – An interactive tool supporting the design of human-centered AI solutions. In: Front. Artif. Intell. 7 (2024). DOI: https://doi.org/10.3389/frai.2024.1354114.

[12] Wilkens, U.; Lupp, D.; Langholf, V.: Configurations of human-centered AI at work: seven actor-structure engagements in organizations. In: Front. Artif. Intell. 6 (2023). DOI: https://doi.org/10.3389/frai.2023.1272159.

[13] Kallina, E.; Bohné, T.; Singh, J.: Stakeholder Participation for Responsible AI Development: Disconnects Between Guidance and Current Practice. In: Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency (2025), pp. 1060-1079. DOI: https://doi.org/10.1145/3715275.3732069.

[14] Hohman, F.; Conlen, M.; Heer, J.; Chau, D. H. (Polo): Communicating with Interactive Articles. In: Distill (2020). DOI: https://doi.org/10.23915/distill.00028.

[15] Fonteyn, M. E.; Kuipers, B.; Grobe, S. J.: A Description of Think Aloud Method and Protocol Analysis. In: Qual Health Res 3 (1993) 4, pp. 430-441. DOI: https://doi.org/10.1177/104973239300300403.

[16] Jaspers, M. W. M.; Steen, T.; van den Bos, C.; Geenen, M.: The think aloud method: a guide to user interface design. In: International Journal of Medical Informatics 73 (2004) 11, pp. 781-795. DOI: https://doi.org/10.1016/j.ijmedinf.2004.08.003.
