secureAR – An AR Platform for Industrial Manufacturing

Development and testing of an AR assistance system with consideration of cyber security

Journal: Industry 4.0 Science

Issue: Volume 40, 2024, Edition 2, Pages 64-71

Augmented Reality (AR) is a technology that makes it possible to integrate digital information into the real world. However, the implementation of an AR project poses various challenges that must be taken into account to ensure long-term success. All the necessary steps should be planned and considered in the right order [1].

The first step is to analyze the IT infrastructure in order to enable secure and high-performance communication. This includes planning a wireless network environment, while taking into account criteria such as cybersecurity and scalability. The second step deals with the provision of digital content (content and digital twin), i.e. the objects that are to be displayed or supplemented (augmented) with information using AR. This can include the display of assembly instructions or information relevant for maintenance, repair or quality inspection. Complex 3D models must also be simplified and provided in a suitable format.

The implementation of the actual task with AR, e.g. in the form of electronic work instructions, does not take place until the third step. It is important that the author has unrestricted access to all information required to create the instructions or guiding points. The final step involves the selection of suitable AR-enabled devices, such as smartphones, tablets or smart glasses, whereby the requirements of the use case, the costs and the acceptance of the various device form factors must be taken into account (Fig. 1).

The performance of the device plays an important role in the provision of digital content.

In addition to the required performance and sensor technology, the occupational safety guidelines for the area of use are also important when selecting a device.

AR is used in various areas, such as education, medicine, entertainment and architecture [2]. Access to necessary information can lead to security problems if the software architecture or the hardware used is not secure. The secureAR joint project investigated how an intuitive digital worker assistance system can nevertheless be implemented.

Overview of secureAR

As a result of the increasing digitalization of all areas of life, the risk of attacks on IT (information technology) systems is also increasing, leading in turn to increased demands for cybersecurity. According to the Federal Criminal Police Office (BKA), the police registered more than 130,000 cybercrimes in 2022 [3, 4]. The cybercrimes recorded by the police authorities therefore amount to over 350 per day. According to the digital association Bitkom, the resulting damage amounted to 203 billion euros in 2022 [3, 4]. By comparison, the federal budget expenditure for 2022 amounted to around 480 billion euros [5]. However, the amount of damage is probably much higher, as a large proportion of cyberattacks – up to 91.5% depending on the severity of the crime – are not reported to the investigating authorities and are therefore not recorded in the statistical analysis [6].
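As a rough check of the two comparisons above, assuming the lower bound of 130,000 registered cases and a 365-day year:

```latex
\frac{130{,}000\ \text{cases}}{365\ \text{days}} \approx 356\ \text{cases per day},
\qquad
\frac{203\ \text{billion euros}}{480\ \text{billion euros}} \approx 0.42
```

On these figures, the estimated damage corresponds to roughly 42% of the federal budget expenditure for the same year.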

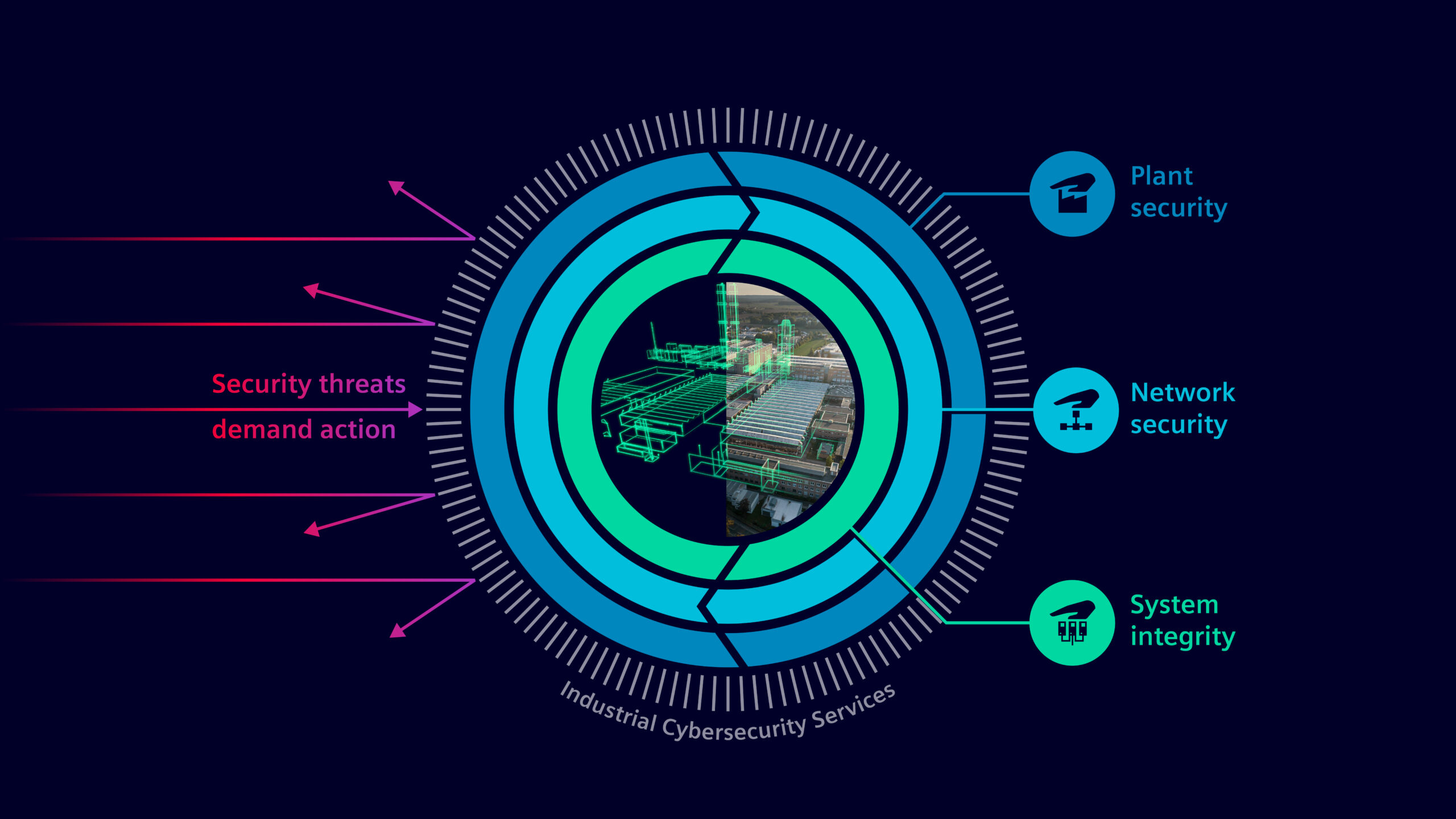

Industrial implementations of innovative software solutions regularly fail due to the security standards with which companies need to comply [7, 8, 9]. It is not only the software specifications that play a role here, but also the trustworthiness of the hardware used. Mobile end devices and head-mounted displays (HMDs) in particular require secure communication with internal company systems in order to be used in a manufacturing environment. To this end, a cross-industry and cloud-based service platform with open industry interfaces was implemented in the secureAR project. This platform collects data along the entire value chain, from planning and the manufacturing process through to plant maintenance. The data can be provided and visualized according to location and situation via a new AR assistance system (Fig. 2).

secureAR is a joint research project between seven equal partners. The Fraunhofer Institute FEP is in charge of the AR hardware, i.e. the headset and the integrated optics. TU Dresden is specifying the computing hardware in conjunction with the software architecture. Kernkonzept GmbH is researching an operating system environment for safe use in an industrial environment. The German Federal Institute for Occupational Safety and Health (BAuA) is investigating the resulting ergonomic issues.

Airbus Operations GmbH is integrating the innovative AR assistance system into a use case in aircraft assembly. Siemens AG is leading the project, developing the control electronics, and using industrial use cases to evaluate its own software development work on worker assistance. In the project, Gestalt Robotics GmbH is investigating the requirements and resulting challenges in the implementation of a platform-independent AR-SDK (Augmented Reality Software Development Kit).

Electronic work instructions

Work instructions are an essential part of manufacturing processes, as they provide guidance to workers [10, 11]. They show workers how to perform their tasks and how to ensure that the work is done correctly and efficiently. Traditionally, work instructions are printed on paper and distributed to the workers together with 2D design sketches. However, electronic work instructions (EWI) are becoming increasingly popular [12, 13].

An EWI is a computerized visual aid that uses animation to show workers how to perform their tasks. Unlike paper-based work instructions, electronic work instructions can also include 3D models of the parts to be assembled, tooling information, and product and manufacturing information. Electronic work instructions can be interactive, allowing users to enrich the 3D view with information, watch animated assembly sequences and scroll through a sequential list of steps to be performed for each work order.

Objective of work instructions in secureAR

“Remote Support” and “Work Instruction” are two key use cases that were investigated in the secureAR project. Remote Support refers to the multimodal interaction between AR users and experts who are not on site. Work Instruction deals with animated and interactive instructions. The aim of Work Instruction is to secure experience and expert knowledge. Suitable preparation can help to counteract the shortage of skilled workers and to carry out upcoming work in a reproducible, standardized and consistently high-quality manner. In the secureAR project, work instructions are planned as AR applications that prepare the upcoming process steps.

Technical realization for the creation of work instructions

The “Work Instruction” use case relies heavily on visualization in the client and in the authoring area. The client, i.e. the software part that is executed in an HMD, renders 3D models and the associated annotations that are created in the authoring part. In addition, the general user interface required to control the work instructions is visualized. The authoring part of the work instruction, referred to below as the editor, is operated by an author at a workstation in the office. This editor offers an author a simple way of annotating a 3D CAD model (spatial or three-dimensional geometric model that has been designed using computer-aided design) and creating animations of object parts.

The editor and client were implemented using the Unity 3D engine. This 3D engine offers the software developer a platform-independent development environment that abstracts many of the hardware-related implementation tasks so that development can be limited to the actual tasks, such as loading 3D models, rendering them and creating options for animating and annotating them. These actions, along with additional short textual instructions, are used to create work instructions that are divided into short, easy-to-understand steps. The author does not need to have any special skills other than basic computer knowledge to operate the finished editor.

Target achievement for the creation of work instructions

Work instructions can be divided into small, manageable steps and worked through by the workers one by one. These steps are defined in the editor. Each step has a name, a description and a timeline on which actions can be arranged chronologically. The actions include animations such as translate, rotate and scale, but also color highlights, callouts (text information with an arrow pointing to an object), the integration and display of additional media such as videos and images, and the hiding or showing of sub-objects.
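The step structure described above can be sketched as a small data model. This is a minimal illustration only, not the editor's actual implementation (which the article says was built with the Unity 3D engine). All class names, field names and the time unit (seconds) are assumptions made for this example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Dict, List


class ActionKind(Enum):
    """Action types named in the text; the identifiers are hypothetical."""
    TRANSLATE = "translate"
    ROTATE = "rotate"
    SCALE = "scale"
    HIGHLIGHT = "highlight"
    CALLOUT = "callout"
    MEDIA = "media"
    VISIBILITY = "visibility"


@dataclass
class Action:
    kind: ActionKind
    target: str                 # hypothetical id of a 3D sub-object
    start: float                # assumed unit: seconds from step start
    duration: float = 0.0
    params: Dict[str, Any] = field(default_factory=dict)


@dataclass
class Step:
    name: str
    description: str
    timeline: List[Action] = field(default_factory=list)

    def add(self, action: Action) -> None:
        """Insert an action and keep the timeline in chronological order."""
        self.timeline.append(action)
        self.timeline.sort(key=lambda a: a.start)

    def active_at(self, t: float) -> List[Action]:
        """Return the actions whose time span covers time t."""
        return [a for a in self.timeline
                if a.start <= t <= a.start + a.duration]


step = Step("Mount bracket", "Attach the bracket to the frame.")
step.add(Action(ActionKind.CALLOUT, "bolt_1", start=2.0, duration=3.0,
                params={"text": "Tighten this bolt"}))
step.add(Action(ActionKind.TRANSLATE, "bracket", start=0.0, duration=1.5))
```

Sorting on insertion keeps the timeline chronological regardless of the order in which an author adds actions, so a preview can query `active_at` for any point in time.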

All the functions described were implemented and tested in a specially developed editor. The basic functions include authentication and authorization with a server as well as the creation, selection and loading of work instructions. Other functions include the ability to rename and load 3D models and other media, as well as settings that tell the AR-SDK which AI model to use for object recognition and pose determination. A preview mode can also be used to validate the current step in the 3D view in advance.

A so-called gaze control developed for the HMD user is used in both the “Work Instruction” and the “Remote Support” use case clients. Gaze means “to stare”, “to fix” or “to look fixedly in one direction”. By staring at UI elements (user interface elements denote all elements of the graphical user interface, such as windows, checkboxes, text fields or drop-down lists that are used to control or operate the HMD), the user interacts with them. The cursor, shown as a small dot, marks the center of the field of view. It helps the user to “stare” at the correct UI element. If an element is “stared at” for two seconds, it is selected. The duration of a stare is indicated by the filling of the corresponding object, similar to a progress bar.
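The dwell-based selection described above can be sketched as a small timer that resets whenever the gaze moves to a different element. This is an illustrative sketch, not the project's code. The class name, method names and the per-frame `update` interface are assumptions, and only the two-second threshold is taken from the text.

```python
from dataclasses import dataclass
from typing import Optional

DWELL_SECONDS = 2.0  # selection threshold stated in the text


@dataclass
class GazeSelector:
    """Hypothetical dwell timer for gaze-based selection of UI elements."""
    dwell_seconds: float = DWELL_SECONDS
    target: Optional[str] = None   # id of the element currently gazed at
    elapsed: float = 0.0           # seconds spent on the current target

    def update(self, gazed_element: Optional[str], dt: float) -> Optional[str]:
        """Advance by dt seconds; return the element id once it is selected."""
        if gazed_element != self.target:
            # Gaze moved to another element (or to none): restart the timer.
            self.target = gazed_element
            self.elapsed = 0.0
            return None
        if self.target is None:
            return None
        self.elapsed += dt
        if self.elapsed >= self.dwell_seconds:
            # Reset so that a further selection needs another full dwell.
            self.elapsed = 0.0
            return self.target
        return None

    @property
    def progress(self) -> float:
        """Fill level between 0.0 and 1.0, usable for a progress indicator."""
        if self.target is None:
            return 0.0
        return min(self.elapsed / self.dwell_seconds, 1.0)
```

A client would call `update` once per rendered frame with the element under the cursor and the frame time, and draw `progress` as the fill of that element.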

Head-mounted display

The AR smart glasses currently available on the market are very complex in design and cannot be individually adapted to the respective conditions of use. In addition, these proprietary systems depend on the manufacturer’s hardware and software components. This dependency also applies when users request changes or when an innovation leap calls for a successor system or an alternative manufacturer, and it has so far prevented their widespread use in everyday industrial applications.

The modular secureAR concept (Fig. 2) therefore ensures that the individual components (cameras, control electronics for the optics, impact cap and, if necessary, sensors) are interchangeable or freely configurable, that they can be easily repaired and that only individual components, rather than the entire system, would need to be replaced in the event of an innovation leap. This modular design concept is more sustainable, more cost-effective and can serve as a bridging technology or in research and teaching, offering an excellent opportunity to support the need for digitalization in Germany with intelligent and affordable concepts.

Cybersecurity

Security and sovereignty over data play an essential role in industrial manufacturing [14, 15] and are therefore a key to success (Fig. 3).

As AR applications are always sensor-based, the companies using them have no control over whether the sensor data (camera, GPS) is passed on to third parties. This is because AR-enabled devices (smartphones, tablets, smart glasses) are usually connected directly to the internet. The secureAR operating system is based on the modular L4Re system platform, which is also used in other security-critical areas such as government laptops and high-security network gateways.

L4Re is a microkernel-based operating system framework. By encapsulating processing in virtual machines, functionalities are modularized and dependencies between them are reduced. Among other things, this ensures that the data collected by the sensors can be processed and transferred to the cloud safely and securely and that no uncontrolled communication with the internet can take place.

In addition, the development of a dedicated container-based and cloud-capable backend server infrastructure prevents information from being stored in uncontrolled environments during “normal” data processing. This means that “secureAR” offers significantly greater protection for the data being processed than conventional systems.

AR-supported solution with a focus on safety and innovation

The solution developed is based on the latest research findings from the fields of machine vision, machine learning and data security. An important aspect is the integration of SLAM technology (Simultaneous Localization and Mapping: building a map of the surroundings while determining the position of an object, e.g. an HMD, within that spatial environment) and image-based object recognition for precise pose determination and fast implementation of tracking and recognition algorithms. AR plays to its strengths here, particularly in maintenance and repair (Fig. 4).

Thanks to AR glasses, technicians can keep instructions and helpful information directly in their field of vision while working on machines. They are also assisted with the burdensome task of troubleshooting. Remote support is also facilitated by the technology. Experts can assist remotely as though they were on site. In this way, AR solutions can also facilitate the programming and control of robots, for example, and speed up the commissioning and integration of robots into existing processes [16].

The prospects for secureAR’s innovation lie in the widespread distribution of the solution, particularly through integration into the protective equipment already in use and the public availability of subcomponents such as the AR-SDK and the operating system platform. The modular hardware and adaptability to different requirements in the industrial environment offer potential for broad application. The implementation of sustainability and reparability through the modular concept of secureAR meets future EU ecodesign requirements.

The secureAR research and development project on which this article is based was funded as part of the “Internet-based services for complex products, production processes and systems (Smart Services)” funding program of the German Federal Ministry of Education and Research (BMBF) under the grant number 02K18D010 – 16 and was supervised by the Project Management Agency Karlsruhe (PTKA), Production, Services and Labor at the Dresden site.

Special thanks go to the project partners Airbus Operations GmbH, the Federal Institute for Occupational Safety and Health, Fraunhofer FEP, Gestalt Robotics GmbH, Kernkonzept GmbH and the Technical University of Dresden, as well as all employees in the use cases at Airbus Operations GmbH and Siemens AG for their constructive cooperation.

Bibliography

[1] Augmented Reality – verstehen und anwenden. Podcast. URL: open.spotify.com/show/3qB8mZi6iT1fKiUXtGLXpY?si=84a7fde6b21340df, accessed 27.02.2024.

[2] Knoll, M.; Stieglitz, S.: Augmented Reality und Virtual Reality: Einsatz im Kontext von Arbeit, Forschung und Lehre. In: HMD 59 (2022), pp. 6-22.

[3] Bundeskriminalamt: Pressemitteilung Bundeslagebild Cybercrime 2022. URL: www.bka.de/SharedDocs/Pressemitteilungen/DE/Presse_2023/pm230816_BLB_Cybercrime.pdf, accessed 16.08.2023.

[4] Bundeskriminalamt: Bundeslagebild Cybercrime 2022. URL: www.bka.de/SharedDocs/Downloads/DE/Publikationen/JahresberichteUndLagebilder/Cybercrime/cybercrimeBundeslagebild2022.html, accessed 12.07.2023.

[5] Bundesministerium der Finanzen (BMF): Vorläufiger Abschluss des Bundeshaushalts 2022, Monatsbericht des BMF. URL: www.bundesfinanzministerium.de/Monatsberichte/2023/01/Inhalte/Kapitel-3-Analysen/3-3-vorlaeufiger-abschluss-bundeshaushalt-2022.html, accessed 26.01.2023.

[6] Dreißigacker, A.; von Skarczinski, B.; Wollinger, G. R.: Cyberangriffe gegen Unternehmen in Deutschland. Ergebnisse einer Folgebefragung 2020, Kriminologisches Forschungsinstitut Niedersachsen e. V. (KFN), KFN-Forschungsberichte No. 162.

[7] Pelkmann, P.: Unternehmen scheitern oft an den eigenen Security-Anforderungen. URL: www.security-insider.de/unternehmen-scheitern-oft-an-den-eigenen-security-anforderungen-a-b2dc41bd30548af3f6a46dd9db7bf3c5/, accessed 13.03.2023.

[8] CyberCompare, A Bosch Business: Der CyberCompare Cybersecurity Benchmarkreport 2023: Die häufigsten Handlungsbedarfe und Themen mit dem höchsten Reifegrad. Whitepaper. URL: cybercompare.com/the-cybercompare-cybersecurity-benchmark-report-2023-the-most-common-needs-for-action-and-topics-with-the-highest-maturity-level/, accessed 02.02.2024.

[9] Menges, M.: Warum Industrie-4.0-Projekte nicht umgesetzt werden. URL: embedded-data.de/de/blog/warum-industrie-4.0-projekte-nicht-umgesetzt-werden, accessed 10.06.2022.

[10] Ritter, A.: Arbeitsanweisungen. URL: www.haufe.de/arbeitsschutz/arbeitsschutz-office-professional/arbeitsanweisungen_idesk_PI13633_HI2652309.html, accessed 29.02.2024.

[11] Bundesanstalt für Arbeitsschutz und Arbeitsmedizin (BAuA): Handlungshilfe zur Erstellung von Arbeitsunterlagen für die Prozessführung. URL: www.baua.de/DE/Angebote/Publikationen/AWE/Prozessfuehrung.pdf?__blob=publicationFile&v=1, accessed 30.06.2010.

[12] Eversberg, L.; Lambrecht, J.: Evaluating Digital Work Instructions with Augmented Reality versus Paper-based Documents for Manual, Object-specific Repair Tasks in a Case Study with Experienced Workers. In: The International Journal of Advanced Manufacturing Technology 127 (2023), pp. 1859-1871. URL: doi.org/10.1007/s00170-023-11313-4, accessed 29.02.2024.

[13] Pokorni, B.; Zwerina, J.; Hämmerle, M.: Human-centered Design Approach for Manufacturing Assistance Systems Based on Design Sprints. In: 30th CIRP Design 2020. URL: publica.fraunhofer.de/bitstreams/64fb8567-4da4-4d76-9643-712407e7f9e8/download, accessed 29.02.2024.

[14] Forschungsbeirat Industrie 4.0: Aufbau, Nutzung und Monetarisierung einer industriellen Datenbasis. URL: www.plattform-i40.de/IP/Redaktion/DE/Downloads/Publikation/Datenbasis.pdf?__blob=publicationFile&v=5, accessed 05.12.2022.

[15] Hack, S. et al.: Challenges and Opportunities in Securing the Industrial Internet of Things. In: IEEE Transactions on Industrial Informatics 17 (2021) 5, pp. 2985-2996.

[16] KUKA Aktiengesellschaft: KUKA.MixedReality Assistant. URL: www.kuka.com/-/media/kuka-downloads/imported/87f2706ce77c4318877932fb36f6002d/kukamixedreality-assistantde.pdf?rev=555c53f69436430eb5c867d2b0a968b1&hash=BB7E02D1102EE332C0DBDFEEB93ED048, accessed 19.06.2023.